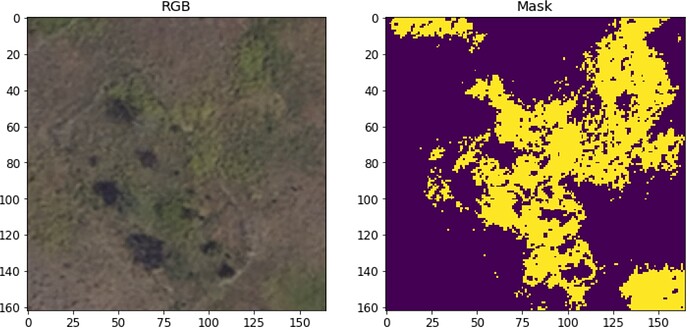

Greetings, I have a (binary) segmentation project in which I am trying to detect a problem weed in a prairie ecosystem from aerial imagery. The data are 3-channel at ~15 cm resolution. Here is an example of the imagery and mask:

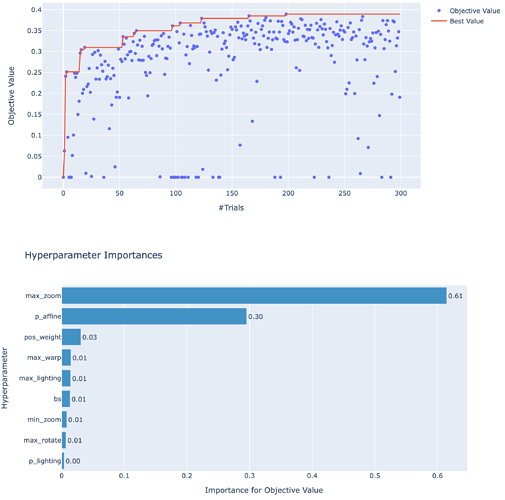

As part of exploring the data, I am applying Bayesian optimization with the Optuna module to identify the augmentations that maximize the Dice coefficient. I am finding that max zoom is by far the most important transform:

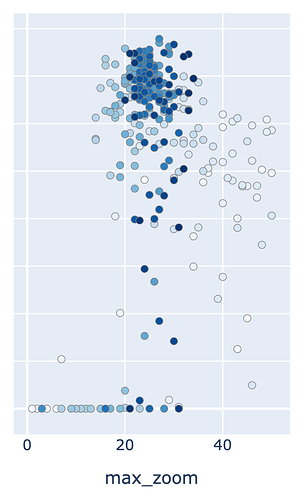
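
Since Dice is the objective being maximized, here is a minimal numpy sketch of the metric for binary masks, Dice = 2|A ∩ B| / (|A| + |B|). The `eps` smoothing term is my own assumption (it keeps the score defined when both masks are empty); library implementations may differ in smoothing and in how they aggregate over a batch.

```python
import numpy as np

def dice_coefficient(pred, target, eps=1e-7):
    """Dice = 2|A ∩ B| / (|A| + |B|) for binary masks (1 = weed, 0 = background).
    eps is an assumed smoothing term so two empty masks score 1.0."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    return (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)

# One overlapping pixel, 2 predicted + 1 true pixels: 2*1 / (2+1)
print(dice_coefficient([[1, 1, 0], [0, 0, 0]], [[1, 0, 0], [0, 0, 0]]))  # ≈ 0.667
```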

The optimal zoom is quite high (23x, y-axis=Dice):

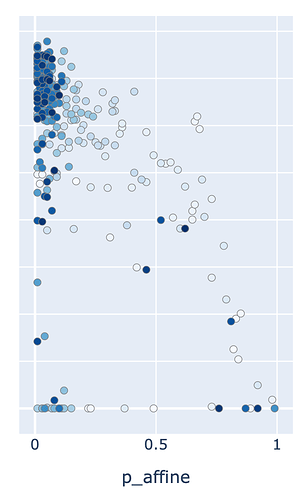

Oddly, however, the optimal `p_affine` is low (~0.05), which suggests to me that other detrimental transforms, e.g. warping, are driving the optimal `p_affine` value down.

Question 1: Are there any fastai notebooks demonstrating how to set the transformation probability separately for each transform type? In other words, how would I set the warping and zoom probabilities independently?
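
For reference, my reading of the fastai source is that the convenience functions (`get_transforms` in v1, `aug_transforms` in v2) pass the same `p_affine` to the warp, rotate and zoom transforms they build, while each of those transforms also accepts its own `p` argument; so one route is to assemble the transform list yourself instead of calling the convenience function. The block below is not fastai code but a framework-agnostic numpy sketch of that idea: each transform (hypothetical helpers `hflip` and `random_zoom`) is paired with its own probability and fires independently, and the image and mask always receive the identical geometric change. The zoom here is a random crop plus nearest-neighbour resize, a simplified stand-in for fastai's affine zoom; warping is omitted for brevity.

```python
import numpy as np

def hflip(img, mask, rng):
    """Mirror left-right; the image and its mask get the identical flip."""
    return img[:, ::-1], mask[:, ::-1]

def random_zoom(img, mask, rng, max_zoom=3.0):
    """Zoom in by a random factor s in [1, max_zoom): crop a random (H/s, W/s)
    window and resize it back to (H, W) with nearest-neighbour indexing,
    which keeps mask labels as exact 0/1 values."""
    h, w = mask.shape
    s = rng.uniform(1.0, max_zoom)
    ch, cw = max(1, round(h / s)), max(1, round(w / s))
    top = rng.integers(0, h - ch + 1)
    left = rng.integers(0, w - cw + 1)
    rows = top + np.arange(h) * ch // h
    cols = left + np.arange(w) * cw // w
    # Index image and mask with the same rows/cols so they stay aligned.
    return img[np.ix_(rows, cols)], mask[np.ix_(rows, cols)]

def make_pipeline(transforms):
    """transforms: list of (fn, p) pairs; each fn fires independently with probability p."""
    def apply(img, mask, rng):
        for fn, p in transforms:
            if rng.random() < p:
                img, mask = fn(img, mask, rng)
        return img, mask
    return apply

# Hypothetical settings: zoom applied often, flip rarely.
pipeline = make_pipeline([(random_zoom, 0.8), (hflip, 0.1)])
rng = np.random.default_rng(0)
img = rng.random((64, 64, 3))                  # stand-in for a 3-channel tile
mask = (img[..., 0] > 0.5).astype(np.uint8)    # stand-in binary mask
aug_img, aug_mask = pipeline(img, mask, rng)
```

Because each probability is a separate knob, an Optuna search could tune the zoom and warp probabilities as two independent parameters rather than through a single shared value.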

Question 2: Given that max zoom is so important, I would expect to benefit greatly from applying zoom transforms at test time (TTA). However, I am having trouble imagining what the expected behavior would be for zoom transforms. In the case of a flip, you would flip the image, segment it, and then unflip the prediction before averaging probabilities across the n transforms. But in the case of zooming, only a fraction of the image is segmented. How would you ‘unzoom’ the prediction, and how would probabilities be weighted for pixels outside the zoomed area?
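
One way to picture the ‘unzoom’ step is sketched below in numpy, under stated assumptions rather than as fastai's built-in TTA. `predict` is a hypothetical stand-in for the model, mapping an (H, W, C) image to (H, W) foreground probabilities. Each zoomed view is a window of the original image upsampled to full size; after prediction, every output pixel is mapped back to the source pixel it came from and the replicated predictions are averaged. Each pixel's final probability is then the mean over only the views that actually contained it, so a pixel outside a given zoom window simply gets no vote from that view. Tiling the image with a `zoom` × `zoom` grid of windows (`zoom` is a hypothetical parameter) ensures that every pixel is seen by at least one zoomed view.

```python
import numpy as np

def zoom_window(img, top, left, ch, cw):
    """Crop an (ch, cw) window and upsample it to the full (H, W) with
    nearest-neighbour indexing. Also returns the source row/col of each output pixel."""
    h, w = img.shape[:2]
    rows = top + np.arange(h) * ch // h
    cols = left + np.arange(w) * cw // w
    return img[np.ix_(rows, cols)], rows, cols

def tta_predict(predict, img, zoom=2):
    """Average foreground probabilities over the identity view, a horizontal flip,
    and a zoom-in on each cell of a zoom x zoom grid. Each pixel is averaged
    only over the views that contained it."""
    h, w = img.shape[:2]
    total = np.zeros((h, w))
    count = np.zeros((h, w))

    # Identity view covers every pixel.
    total += predict(img)
    count += 1
    # Flip view: flip the input, predict, then un-flip the prediction.
    total += predict(img[:, ::-1])[:, ::-1]
    count += 1

    ch, cw = h // zoom, w // zoom
    for top in range(0, h - ch + 1, ch):
        for left in range(0, w - cw + 1, cw):
            zoomed, rows, cols = zoom_window(img, top, left, ch, cw)
            prob = predict(zoomed)
            # "Unzoom": scatter the upsampled predictions back onto their source
            # pixels and average the duplicates, so this view counts once per pixel.
            view_sum = np.zeros((h, w))
            view_cnt = np.zeros((h, w))
            np.add.at(view_sum, (rows[:, None], cols[None, :]), prob)
            np.add.at(view_cnt, (rows[:, None], cols[None, :]), 1)
            seen = view_cnt > 0
            total[seen] += view_sum[seen] / view_cnt[seen]
            count[seen] += 1
    return total / count
```

With a purely pixelwise stand-in model such as `lambda im: im[..., 0]`, every view agrees and the output equals the input's first channel, which is a handy check that the flip and unzoom mappings line up. If `H` or `W` is not divisible by `zoom`, the last rows or columns fall outside every window and are averaged over the identity and flip views only. Nearest-neighbour indexing keeps the inverse mapping exact; with bilinear resizing you would instead downsample each zoomed prediction back to its window size before adding it in.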