I’m working on a cell segmentation task. I have a binary mask, and yes, its values are 0 and 1 (I don't know why fastai can’t figure this out itself and optionally convert the mask to the correct range). Training now works. When running:

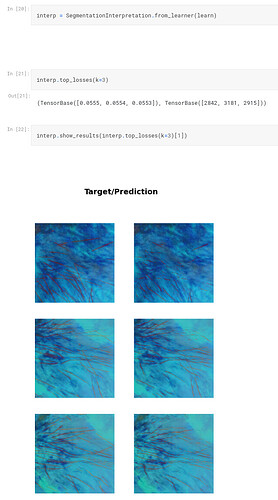

```python
interp = SegmentationInterpretation.from_learner(unet)
interp.plot_top_losses(k=3)
```

I get the following error:

```
TypeError Traceback (most recent call last)
<ipython-input-166-58787b72d556> in <cell line: 2>()
1 interp = SegmentationInterpretation.from_learner(unet)
----> 2 interp.plot_top_losses(k=3)

7 frames
/usr/local/lib/python3.10/dist-packages/fastai/interpret.py in plot_top_losses(self, k, largest, **kwargs)
79 x1, y1, outs = self.dl._pre_show_batch(inps+decoded, max_n=len(idx))
80 if its is not None:
---> 81 plot_top_losses(x, y, its, outs.itemgot(slice(len(inps), None)), preds, losses, **kwargs)
82 #TODO: figure out if this is needed
83 #its None means that a batch knows how to show itself as a whole, so we pass x, x1

/usr/local/lib/python3.10/dist-packages/fastcore/dispatch.py in __call__(self, *args, **kwargs)
118 elif self.inst is not None: f = MethodType(f, self.inst)
119 elif self.owner is not None: f = MethodType(f, self.owner)
--> 120 return f(*args, **kwargs)
121
122 def __get__(self, inst, owner):

/usr/local/lib/python3.10/dist-packages/fastai/vision/learner.py in plot_top_losses(x, y, samples, outs, raws, losses, nrows, ncols, figsize, **kwargs)
355 imgs = (s[0], s[1], o[0])
356 for ax,im,title in zip(axs, imgs, titles):
--> 357 if title=="pred": title += f"; loss = {l:.4f}"
358 im.show(ctx=ax, **kwargs)
359 ax.set_title(title)

/usr/local/lib/python3.10/dist-packages/torch/_tensor.py in __format__(self, format_spec)
868 def __format__(self, format_spec):
869 if has_torch_function_unary(self):
--> 870 return handle_torch_function(Tensor.__format__, (self,), self, format_spec)
871 if self.dim() == 0 and not self.is_meta and type(self) is Tensor:
872 return self.item().__format__(format_spec)

/usr/local/lib/python3.10/dist-packages/torch/overrides.py in handle_torch_function(public_api, relevant_args, *args, **kwargs)
1549 # Use `public_api` instead of `implementation` so __torch_function__
1550 # implementations can do equality/identity comparisons.
-> 1551 result = torch_func_method(public_api, types, args, kwargs)
1552
1553 if result is not NotImplemented:

/usr/local/lib/python3.10/dist-packages/fastai/torch_core.py in __torch_function__(cls, func, types, args, kwargs)
380 if cls.debug and func.__name__ not in ('__str__','__repr__'): print(func, types, args, kwargs)
381 if _torch_handled(args, cls._opt, func): types = (torch.Tensor,)
--> 382 res = super().__torch_function__(func, types, args, ifnone(kwargs, {}))
383 dict_objs = _find_args(args) if args else _find_args(list(kwargs.values()))
384 if issubclass(type(res),TensorBase) and dict_objs: res.set_meta(dict_objs[0],as_copy=True)

/usr/local/lib/python3.10/dist-packages/torch/_tensor.py in __torch_function__(cls, func, types, args, kwargs)
1293
1294 with _C.DisableTorchFunctionSubclass():
-> 1295 ret = func(*args, **kwargs)
1296 if func in get_default_nowrap_functions():
1297 return ret

/usr/local/lib/python3.10/dist-packages/torch/_tensor.py in __format__(self, format_spec)
871 if self.dim() == 0 and not self.is_meta and type(self) is Tensor:
872 return self.item().__format__(format_spec)
--> 873 return object.__format__(self, format_spec)
874
875 @_handle_torch_function_and_wrap_type_error_to_not_implemented

TypeError: unsupported format string passed to TensorBase.__format__
```
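My reading of the last frames (not verified against the fastai source): the failing line is `f"{l:.4f}"` in `fastai/vision/learner.py`, and `l` is a fastai `TensorBase`. `Tensor.__format__` only unwraps a 0-d tensor via `.item()` when `type(self) is Tensor` (line 871), so the subclass falls through to `object.__format__` (line 873), which rejects any non-empty format spec such as `.4f`. Because this happens while building the `pred` title, before `im.show(...)` runs for that panel, it may also be why the third image isn't drawn. Here is a plain-Python sketch of that failure mode; `FakeTensorBase`, `PatchedTensorBase` and `loss_title` are hypothetical stand-ins of mine, not fastai classes, and the "patch" is only my assumption of what a fix could look like: format the Python scalar from `.item()` instead of the tensor wrapper.

```python
class FakeTensorBase:
    """Hypothetical stand-in for a 0-d tensor subclass whose __format__
    ends up in object.__format__, as in the last traceback frame."""

    def __init__(self, value: float):
        self._value = value

    def item(self) -> float:
        return self._value

    def __format__(self, format_spec: str) -> str:
        # Same fallback as torch/_tensor.py line 873: object.__format__
        # raises TypeError for any non-empty spec such as ".4f".
        return object.__format__(self, format_spec)


class PatchedTensorBase(FakeTensorBase):
    def __format__(self, format_spec: str) -> str:
        # Assumed workaround: format the plain Python float instead.
        return format(self.item(), format_spec)


def loss_title(l) -> str:
    # Mirrors the failing f-string on line 357 of fastai/vision/learner.py.
    return f"pred; loss = {l:.4f}"


try:
    loss_title(FakeTensorBase(0.123456))
except TypeError as err:
    print("fails:", err)  # unsupported format string passed to FakeTensorBase.__format__

print(loss_title(PatchedTensorBase(0.123456)))  # pred; loss = 0.1235
```

If this reading is right, a runtime patch along the same lines on `fastai.torch_core.TensorBase`, or a fastai release that already formats 0-d `TensorBase` values (if one exists), should remove the error; I haven't tested either.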

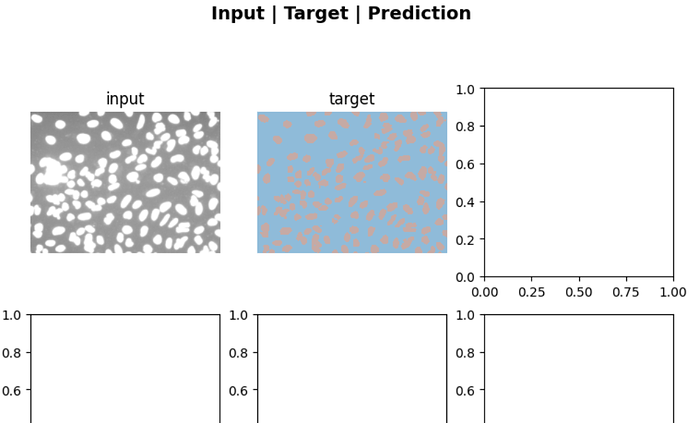

and the image looks like this:

AFAIK, when calling `learner.predict()`, I get the input, target, and prediction. However, for the prediction I get an array of shape (2, H, W). Is that an array of confidence values for each of the two classes?
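If the (2, H, W) array really is per-class probabilities (my assumption: one channel per class, in the order of the codes, background first), the predicted mask would be the per-pixel argmax over the first axis. A minimal numpy sketch; `probs_to_mask` is a hypothetical helper name, and with a torch tensor the equivalent would be `probs.argmax(dim=0)`:

```python
import numpy as np


def probs_to_mask(probs: np.ndarray) -> np.ndarray:
    """Collapse per-class probabilities of shape (C, H, W) into an (H, W)
    mask of class indices by picking the most likely class per pixel."""
    if probs.ndim != 3:
        raise ValueError(f"expected (C, H, W), got shape {probs.shape}")
    return probs.argmax(axis=0).astype(np.uint8)


# Toy (2, 2, 2) example: channel 0 = background, channel 1 = cell (assumed order).
probs = np.array([
    [[0.9, 0.2],
     [0.4, 0.7]],
    [[0.1, 0.8],
     [0.6, 0.3]],
])
mask = probs_to_mask(probs)
print(mask)
# [[0 1]
#  [1 0]]
```

For exactly two classes this matches thresholding the cell channel, `probs[1] > 0.5`, except at exact ties.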

What should I do about the error? And what do I need to change so that the third image, i.e. the prediction, is displayed correctly?
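On the mask-range point at the top: a cross-entropy segmentation loss treats every mask pixel as a class index, so a mask stored as 0/255 has to be remapped to 0/1 before training. A minimal sketch, assuming the masks are loaded as 8-bit grayscale numpy arrays; `to_class_indices` and its threshold of 127 are my own hypothetical choices, not part of fastai:

```python
import numpy as np


def to_class_indices(mask: np.ndarray, threshold: int = 127) -> np.ndarray:
    """Map a grayscale binary mask (e.g. 0/255) to class indices 0/1.
    Pixels brighter than `threshold` become class 1 (cell), the rest class 0."""
    return (mask > threshold).astype(np.uint8)


raw = np.array([[0, 255],
                [255, 0]], dtype=np.uint8)
idx = to_class_indices(raw)
print(idx)
# [[0 1]
#  [1 0]]
```

In a fastai pipeline I'd assume this belongs in whatever function loads the label for each image, so that the `MaskBlock` only ever sees 0/1.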