Before making big changes to my NN graph or hyperparameters, I wanted to seek the crowd’s wisdom and insight.

Without revealing too much: assume the dataset is a bunch of images, the NN graph is a series of basic CNN blocks, and the goal is to classify the images.

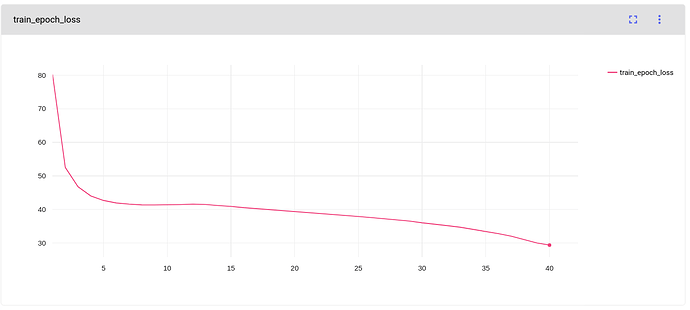

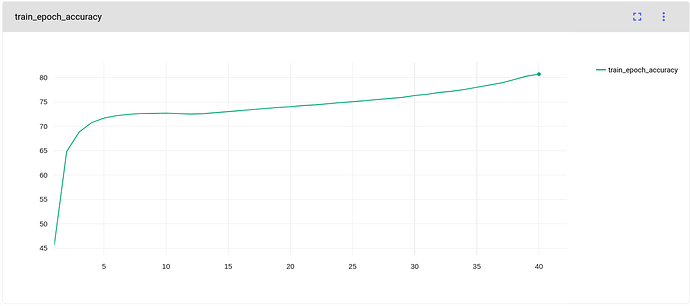

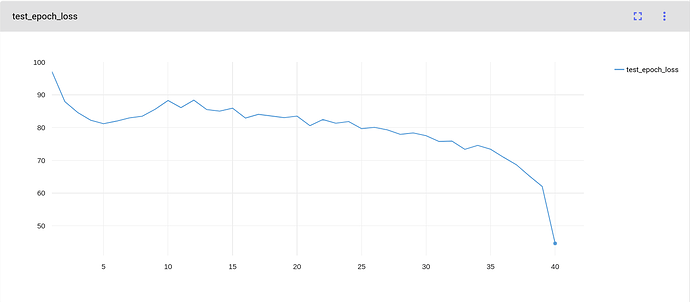

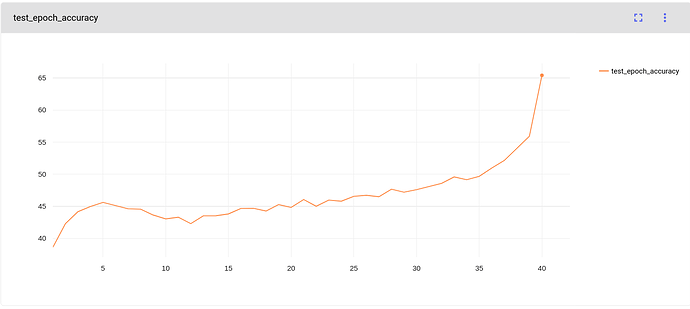

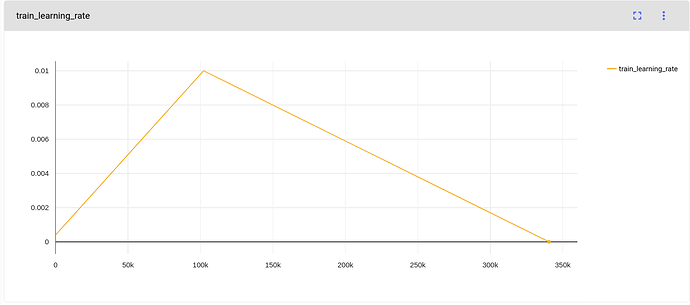

I am using PyTorch to train the model. For the learning rate scheduler I am using OneCycleLR with anneal_strategy='linear', as you can see in the learning rate graph. max_lr was picked arbitrarily as 0.01.
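For anyone who wants to line up the learning rate with the loss curves, here is a rough pure-Python sketch of the two-phase linear one-cycle schedule. It assumes the OneCycleLR defaults as I understand them (pct_start=0.3, div_factor=25, final_div_factor=1e4, three_phase=False), and it treats each epoch as a single scheduler step purely for illustration; in a real run the scheduler is normally stepped once per batch. The function name `one_cycle_linear_lr` is made up for this post and is not a PyTorch API.

```python
def one_cycle_linear_lr(step, total_steps, max_lr=0.01, pct_start=0.3,
                        div_factor=25.0, final_div_factor=1e4):
    """Approximate LR at `step` for a two-phase linear one-cycle schedule.

    The default values are assumptions meant to mirror OneCycleLR's defaults.
    """
    initial_lr = max_lr / div_factor          # LR at the very first step
    min_lr = initial_lr / final_div_factor    # LR at the very last step
    warmup_end = pct_start * total_steps - 1  # step where LR peaks at max_lr
    last_step = total_steps - 1

    if step <= warmup_end:
        # Phase 1: linear ramp from initial_lr up to max_lr
        pct = step / warmup_end
        return initial_lr + (max_lr - initial_lr) * pct

    # Phase 2: linear decay from max_lr down to min_lr
    pct = (step - warmup_end) / (last_step - warmup_end)
    return max_lr + (min_lr - max_lr) * pct


if __name__ == "__main__":
    epochs = 40  # one scheduler step per epoch here, for illustration only
    for epoch in range(epochs):
        print(f"epoch {epoch:2d}  lr = {one_cycle_linear_lr(epoch, epochs):.2e}")
```

Under these assumptions the learning rate peaks around 30% of the way through training and then falls linearly to a value several orders of magnitude below max_lr by the last step, so it is easy to check which part of the decay the late jump in test accuracy coincides with.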

My previous training experiments, with a smaller (5x fewer images) but better-quality dataset, produced classical-looking graphs for losses and accuracy. But this last experiment, as you can see, has odd S-shaped graphs for test loss and accuracy. Specifically, towards the end of training, the test accuracy jumps up and the losses drop dramatically. Is this training behavior a telltale sign of something? What’s your top recommendation for a hyperparameter to tweak? I was thinking of increasing the training epochs from 40 to 60, but that would take 2 days to re-train. Perhaps max_lr should be changed instead.