I think two suboptimal metrics are better than zero metrics. Often it’s best to just use your judgement, rather than come up with some perfect algorithm…

Thanks for your pointers Jeremy. I’ll try adding them soon!

@jeremy You mentioned AWS gave fast.ai $250k of compute credits a while ago. We’d need to check it’s ok with Amazon, but we could totally make Salamander 100% free for students & international fellows if you’d like - I’d create a form accessible only to you, which would let you give users free access to Salamander via fast.ai’s compute credits. What do you think?

@binga I added pricing to the providers & linked datasheets to the GPUs. What do you think?

PR here:

We gave out those credits to the last class - but if we can get more credits, that would be very helpful!

I gave Salamander a try, thanks for setting it up! Here are my thoughts so far.

The good:

- The web interface is super intuitive and it is really easy to get started.

- The price seems competitive.

- I really like how it keeps track of the $$ you spent. Very transparent!

- Minor benefit: the $1 credit lets you try out the system without committing.

The bad:

- After I set up my machine, I couldn’t start it for a few hours. I forgot the exact message, but it said to try again in 10 minutes (for quite a while). Maybe the demand at that particular time was high?

- I couldn’t upload my preexisting SSH key. When I clicked on the upload button (on this page: https://salamander.ai/setup-access-keys), nothing happened. I used Ubuntu 16.04, Firefox version 62.0.

Things I don’t understand (I’m probably missing something simple, please let me know):

- I couldn’t import fastai from a different folder. I tried to modify the .bashrc file, adding fastai (using this guide), but that didn’t help. I also tried sys.path.append at the top of my Jupyter notebook, pointing to the fastai folder, but that didn’t help either. What am I missing?

@Krisztian Thanks for taking a look and saying all those nice things

There’s been a K80 supply deficit during the last week. The (simplified) algorithm for requesting a server goes:

1. request a server

2. check aws for the request status every 2 seconds

3. give up if the status code indicates capacity issues

4. give up if 15 seconds elapse & aws hasn't agreed to fulfill the request

5. wait 60 seconds for the server to start running, give up & cancel the request if it takes longer

6. lock the server the user tried to start for a random interval of between 1 to 20 minutes
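The steps above could be sketched roughly as follows. This is not Salamander's actual code: request_server, get_status and cancel_request are hypothetical stand-ins for the AWS calls, the status strings are made up, and the sketch assumes that every failure cancels the request and that the lock in step 6 only applies after a failure. The clock and sleep functions are injected so the logic can be exercised without AWS.

```python
import random
import time

POLL_INTERVAL = 2        # seconds between status checks (step 2)
FULFIL_TIMEOUT = 15      # seconds to wait for AWS to accept the request (step 4)
START_TIMEOUT = 60       # seconds to wait for the server to start running (step 5)
LOCK_RANGE = (60, 1200)  # lock duration after a failure: 1 to 20 minutes (step 6)


def launch_server(request_server, get_status, cancel_request,
                  clock=time.monotonic, sleep=time.sleep, rng=random):
    """Return (ok, lock_seconds). The three callables are hypothetical hooks."""
    request_id = request_server()                       # step 1
    started = clock()
    fulfilled_at = None
    while True:
        status = get_status(request_id)                 # step 2
        now = clock()
        if status == "running":
            return True, 0
        if status == "capacity-error":                  # step 3
            break
        if status == "fulfilled" and fulfilled_at is None:
            fulfilled_at = now
        if fulfilled_at is None and now - started >= FULFIL_TIMEOUT:
            break                                       # step 4
        if fulfilled_at is not None and now - fulfilled_at >= START_TIMEOUT:
            break                                       # step 5
        sleep(POLL_INTERVAL)
    # Assumption: any failure cancels the request and locks the server.
    cancel_request(request_id)
    return False, rng.uniform(*LOCK_RANGE)              # step 6
```

The random lock length presumably spreads retries out so that many users do not hit AWS again at the same moment.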

AWS will often manage to provision a K80 if given longer, but servers provisioned that way often shut down prematurely. I’m still experimenting with the precise algorithm & talking with AWS support. Within the next few weeks I’ll start estimating availability from the rate of failed requests (AWS doesn’t provide that information) and make sure deficits are displayed before you try to launch a server, instead of leaving you to retry over and over. V100 GPUs have been totally fine. Very soon you’ll be able to resize storage & change hardware after launching a server, which will make it easier to avoid this issue. I think the best use-case for that is helping people switch to low-cost general-purpose hardware when they just want to write code or review a notebook, for example.

Right now Salamander only requests servers in the cheapest availability zone. I’m considering requesting servers in every availability zone within 10% of the lowest price and cancelling all requests except the first one that completes successfully. This should improve the K80 supply issues and instance startup time.
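As a rough illustration of the zone selection described above (only the "within 10% of the lowest price" part; racing the requests and cancelling the losers is left out), here is a minimal sketch. The function name, zone names and prices are invented for the example and are not real spot prices.

```python
def candidate_zones(prices, tolerance=0.10):
    """Return zones priced within `tolerance` of the cheapest zone, cheapest first."""
    if not prices:
        return []
    lowest = min(prices.values())
    limit = lowest * (1 + tolerance)
    eligible = [zone for zone, price in prices.items() if price <= limit]
    return sorted(eligible, key=prices.get)


# Hypothetical hourly prices per availability zone.
prices = {"us-east-1a": 0.27, "us-east-1b": 0.29, "us-east-1c": 0.35}
print(candidate_zones(prices))  # ['us-east-1a', 'us-east-1b']
```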

Did you select the “fastai” kernel after opening the notebook? By default it’s Python 3. Within the next few days I’ll change that so any notebooks within the “~/fastai” directory use the “fastai” kernel by default.

I’ll take a look at uploading ssh keys today.

Edit: the SSH key issue was specific to Firefox and is now resolved.

@Krisztian fyi using sys to control how modules are imported is much easier than putting your notebooks in a particular location or using environment variables. Put this at the top of each notebook:

    %reload_ext autoreload
    %autoreload 2
    %matplotlib inline
    import sys
    sys.path.append('/home/ubuntu/fastai')

You’ll then be able to import fastai like this:

    from fastai.imports import *
    from fastai.torch_imports import *
    from fastai.learner import *
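One thing to watch for: sys.path.append accepts a mistyped or missing directory without complaint, and the problem only shows up later as a failed import. A small guard can catch that earlier. This is a minimal sketch; add_import_path is a hypothetical helper, not part of fastai.

```python
import sys
from pathlib import Path


def add_import_path(directory):
    """Append `directory` to sys.path, failing loudly if it does not exist."""
    path = Path(directory).expanduser().resolve()
    if not path.is_dir():
        raise FileNotFoundError(f"No such directory: {path}")
    if str(path) not in sys.path:  # avoid duplicate entries on re-run
        sys.path.append(str(path))
    return str(path)
```

In a notebook you would call add_import_path('/home/ubuntu/fastai') in place of the plain sys.path.append line.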

Thanks, I fixed the path to ‘/home/ubuntu/fastai’ and it all worked! (I did have to install some fastai dependencies first though)

My $10 credit purchase just got processed, and I noticed that I also had to pay a $1.75 tax.

That means that the cost comparison is misleading (Paperspace $0.51/hour is inclusive of tax I believe). Including tax, the total cost of the K80 machine is $0.423 (not $0.36) per hour.
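For reference, the $0.423 figure follows from the numbers in this post, assuming the $1.75 charged on a $10 purchase reflects a flat tax rate that applies equally to the hourly price:

```python
credit = 10.00      # credit purchased, USD
tax = 1.75          # tax charged on that purchase, USD
listed_rate = 0.36  # advertised K80 price, USD per hour

tax_rate = tax / credit                        # fraction of the purchase paid as tax
effective_rate = listed_rate * (1 + tax_rate)  # hourly price including tax
print(f"{tax_rate:.1%} tax, ${effective_rate:.3f}/hour")  # 17.5% tax, $0.423/hour
```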

That’s still cheaper than Paperspace, but it would be nice to have clarity in terms of pricing.

@Krisztian thanks for bringing up the tax. I thought Salamander was charging tax exactly the same way Paperspace & AWS were, but on further investigation I’ve discovered they only hide VAT from European customers like me (link). Right now I’d like to focus entirely on things like service reliability and design issues, but will start to address the legal paperwork (presumably?) needed to charge international customers less tax in a few weeks time.

What is the best way to upload large datasets? Is there a way to get the Kaggle API working through Salamander?

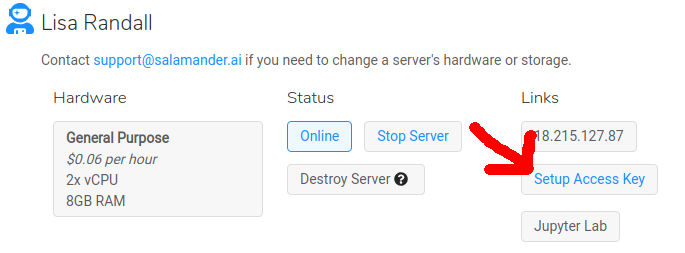

@KarlH After launching your first server, click “Setup Access Key”. Follow the steps and you’ll soon be able to connect via SSH from your terminal.

Once connected, I recommend installing the official Kaggle API: https://github.com/Kaggle/kaggle-api
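A minimal sketch of scripting the download from Python once you are connected. It assumes the kaggle package is installed (pip install kaggle) and that your API token is saved at ~/.kaggle/kaggle.json; download_competition is a hypothetical helper, and the competition name you pass in is up to you.

```python
import subprocess


def download_competition(competition, dest="data", run=subprocess.run):
    """Download a competition's files into `dest` using the Kaggle CLI."""
    command = ["kaggle", "competitions", "download", "-c", competition, "-p", dest]
    run(command, check=True)  # raises CalledProcessError if the CLI fails
    return command
```

Downloading straight onto the server this way avoids pushing large datasets through your own connection.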

@ashtonsix I had some issues updating fastai on a new server. It doesn’t look like I can attach files here, so I sent you an email with a text file of the terminal session and the environment file used for updating.

The basic gist is:

I set up a new server. I cd to fastai/ and do a git pull, and when I try to update my fastai environment with the environment.yml file: after running through a bunch of already-installed packages, an error occurs triggering 3 exceptions, finally ending in what looks like a typo in some python utility package:

    File "/home/ubuntu/anaconda3/envs/fastai/lib/python3.6/site-packages/pip/_vendor/pkg_resources/__init__.py", line 2951, in __init__
        raise RequirementParseError(str(e))
    pip._vendor.pkg_resources.RequirementParseError: Invalid requirement, parse error at "'; extra '"

I noticed I can install the kaggle api (pip install kaggle not kaggle-cli) before updating the fastai environment, but if I attempt to install it after, I will get the same (or similar) error as above (should be about 58-lines).

I tried updating pip (though looks like it’s already up to date within the fastai environment), conda, uninstalling anaconda and replacing with miniconda, reinstalling python site-package tools (I forget the exact name), deleting everything inside .cache/[?pip/]https & .cache/[?pip/][?site-]packages, and a few other things to no effect.

I figure this is a bit of an issue if it’s happening on a fresh machine, so sending this your way. I sent the email to your “Salamander”-named address with the line: “conda/pip installs failing during & after fastai update on 1x K80 machine”.

@Borz It looks like you might have introduced an error into environment.yml while editing it.

I recommend totally removing fastai & reinstalling it to get the latest version:

    cd ~
    yes | jupyter kernelspec remove fastai
    conda env remove -y -n fastai
    rm -rf fastai
    git clone --depth 1 https://github.com/fastai/fastai.git
    cd fastai
    conda env update
    source activate fastai
    python -m ipykernel install --user --name fastai --display-name "fastai"
    source deactivate fastai
    cd ~

When you update fastai in the future try running git pull && conda env update instead of editing environment.yml

fyi I’m updating Salamander’s AMI images today to use the newest fastai library: https://github.com/fastai/fastai_pytorch

Cool.

If I remember correctly, in my second test I only git-pulled then updated, without touching the environment file. In the first instance in the environment file, the line - pytorch<0.4 was changed to - pytorch>=0.4.

As an update:

On a new machine I tried without checking the box for the pre-installed fastai environment. After a git clone of fastai, during the execution phase of creating the environment via conda env create -f environment.yml, the same error occurred.

So then I ran the commands in your email to double-check. In the execution phase while running conda env update, the same error occurred.

Further update:

wow, this happened on the trusty old fastai-part1v2-p2 (ami-c6ac1cbc) AWS AMI instance… so it’s not a salamander issue, something’s up with the packages.

I replicated this problem on my linux laptop (commenting out cuda90 and cudnn to save time, and pytorch and torchvision because the older gpu is no longer supported).

The culprit seems to be jupyter_contrib_nbextensions.

I began by commenting out everything in environment.yml except for line 38:

- conda-forge::jupyter_contrib_nbextensions

A fastai environment consisting only of that package installed, but with some strange (all-OK) messages in the execution phase.

I then uncommented everything (except for 4 packages listed at top) and ran conda env update. The result was the exact same error.

Oddly enough, when I then try to delete the environment, I get an error and 5 warnings, the error being:

    + /home/wnixalo/miniconda3/envs/fastai/bin/jupyter-contrib-nbextension uninstall --sys-prefix
    ...
    ...
    File "/home/wnixalo/miniconda3/envs/fastai/lib/python3.6/site-packages/notebook/nbextensions.py", line 28, in <module>
        from ipython_genutils.py3compat import string_types, cast_unicode_py2
    ModuleNotFoundError: No module named 'ipython_genutils.py3compat'

and the last warning:

    WARNING conda.core.link:run_script(516): pre-unlink script failed for package conda-forge::widgetsnbextension-3.4.2-py36_0
    consider notifying the package maintainer

(rewritten by hand if there’re any typos)

I’m going to see what happens if fastai is installed without jupyter & all, with jupyter & all installed manually afterwards. I’m surprised I haven’t seen this anywhere: I replicated this on 3 separate machines, and the environment file is over a month old now.

Update:

It works.

1. From an updated fastai repo, in environment.yml comment out lines 34-38 (jupyter, jupyter_client, jupyter_console, jupyter_core, conda-forge::jupyter_contrib_nbextensions).

2. Run conda env update (not having any environment activated, and having no pre-existing fastai environment).

2a. I also conda/pip installed any packages that weren’t installed due to some conflict (mkl-random needs cython; spacy; twisted).

3. One by one, conda install: jupyter, jupyter_client, and jupyter_core; the last two will probably already be installed by jupyter.

4. conda install -c conda-forge jupyter_contrib_nbextensions (yes to all).

And it should work. I haven’t tested it during runtime yet. If the above works on a cloud machine I’ll update.
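If you’d rather not edit environment.yml by hand, the commenting-out part of this workaround could be scripted. A minimal sketch, matching on package names rather than line numbers since those may shift between versions of the file; comment_out_packages is a hypothetical helper and it assumes dependencies are listed one per line in the form "- name".

```python
import re

JUPYTER_PACKAGES = frozenset({
    "jupyter", "jupyter_client", "jupyter_console", "jupyter_core",
    "conda-forge::jupyter_contrib_nbextensions",
})


def comment_out_packages(yaml_text, packages=JUPYTER_PACKAGES):
    """Return yaml_text with the listed dependency lines commented out."""
    out = []
    for line in yaml_text.splitlines():
        match = re.match(r"^(\s*)-\s+(\S+)\s*$", line)
        if match:
            # Strip any version constraint, e.g. "pytorch<0.4" -> "pytorch".
            name = re.split(r"[<>=!]", match.group(2), maxsplit=1)[0]
            if name in packages:
                line = f"{match.group(1)}# {line.strip()}"
        out.append(line)
    return "\n".join(out) + "\n"
```

You would read environment.yml, pass its contents through this function, write the result back, and then continue with conda env update.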

Update2:

It works on salamander’s AWS spot instance.

@Borz So you tried to update fastai, but couldn’t because the owners of jupyter_contrib_nbextensions accidentally unpublished their package but you found a workaround. Is that right? [github]

Looks like everything is resolved to me

Hi,

Thanks, I tried your approach but it didn’t work for me. I have a couple of questions. What did you mean by “not having any environment activated, and having no pre-existing fastai environment” in step 2?

I ran conda env update but I still get the same issue.

I also conda installed cython, spacy, twisted, and mkl-random.

I also did steps 3 and 4.

After all this I still can’t open Jupyter notebook,

and conda env update has the same issue.

I had similar errors, but deleting the complete setup and bringing it back is too much work.

Here is a solution which I found.

Everything is back to normal.