Hello! I am having trouble with lr_find() while trying to do stock prediction. I don’t understand exactly what went wrong, so here is my setup:

Here is the Gramian Angular Field function, which generates the input data from a window captured as a NumPy array. The generated values are floats between -1 and 1.

    class GAF():
        def __init__(self, inp, opt, min_sample=-19, max_sample=19):
            self.inp = inp
            self.opt = opt
            self.min_sample = min_sample
            self.max_sample = max_sample
            self.out = self.forward(inp, opt, min_sample, max_sample)

        def forward(self, i, option, min_s, max_s):
            if min_s!=-19 and max_s!=19:
                imax, imin = min_s, max_s
            else:
                imax, imin = i.max(), i.min()
            i_rescaled = ((i-imax)+(i-imin))/(imax-imin)
            i2 = np.arccos(i_rescaled)
            if option == 'summation':
                field = np.cos(i2[None, :] + i2[:, None])
            if option == 'difference':
                field = np.sin(-i2[None, :] + i2[:, None])
            return field

    def pixelArray(inp, norm_dataset = False, multiplier = 1):
        assert len(inp.shape) == 2
        out = np.zeros((inp.shape[0]*multiplier*2, inp.shape[0]*multiplier*2,3))
        if norm_dataset:
            norm_min, norm_max = -1, 1
        else:
            norm_min, norm_max = -19,19
        out[:inp.shape[0]*multiplier,:,0] = np.tile(GAF(inp[:,0], 'summation', norm_min, norm_max).out, [multiplier, multiplier*2])
        out[inp.shape[0]*multiplier:,:,0] = np.tile(GAF(inp[:,1], 'summation', norm_min, norm_max).out, [multiplier, multiplier*2])
        out[:inp.shape[0]*multiplier,:,1] = np.tile(GAF(inp[:,3], 'summation', norm_min, norm_max).out, [multiplier, multiplier*2])
        out[inp.shape[0]*multiplier:,:,1] = np.tile(GAF(inp[:,2], 'summation', norm_min, norm_max).out, [multiplier, multiplier*2])
        out[:inp.shape[0]*multiplier,:,2] = np.tile(GAF(inp[:,4], 'summation', norm_min, norm_max).out, [multiplier, multiplier*2])
        out[inp.shape[0]*multiplier:,:,2] = np.tile(GAF(inp[:,5], 'summation', norm_min, norm_max).out, [multiplier, multiplier*2])
        return out
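To make the transform concrete, here is a minimal worked sketch of the same rescale, arccos, and cos/sin steps on a three-value series. It follows the per-window min/max branch, which is the one taken when `pixelArray` is called with its default `norm_dataset = False`. The input values and variable names are made up for illustration.

```python
import numpy as np

# Made-up three-point series standing in for one column of a window.
x = np.array([1.0, 2.0, 3.0])

# Same rescaling expression as in GAF.forward, using the window's own min/max.
x_min, x_max = x.min(), x.max()
x_rescaled = ((x - x_max) + (x - x_min)) / (x_max - x_min)  # maps to [-1, 1]

# Polar encoding: each value becomes an angle in [0, pi].
phi = np.arccos(x_rescaled)

# 'summation' field: cos(phi_i + phi_j); 'difference' field: sin(phi_i - phi_j).
gasf = np.cos(phi[None, :] + phi[:, None])
gadf = np.sin(-phi[None, :] + phi[:, None])

print(x_rescaled)
print(gasf.shape, gadf.shape)
```

Note that the rescaling divides by `x_max - x_min`, so it is only defined when the series is not constant and contains only finite values.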

Here is some of the downloaded stock data: the first 56 rows for AAPL, which is what one window looks like. The NumPy array is shown as a DataFrame for easier viewing.

    aaa = stock_arrays['AAPL'][:56]
    pd.DataFrame(aaa)

| | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|---|
| 0 | -0.873644 | -0.870444 | -0.874939 | -0.872358 | 0.144047 | 0.266938 | 1998.0 | 1.0 | 23.0 |
| 1 | -0.873000 | -0.871718 | -0.879537 | -0.873000 | 0.120792 | 0.266618 | 1998.0 | 1.0 | 26.0 |
| 2 | -0.875589 | -0.870444 | -0.877553 | -0.876242 | 0.107491 | 0.264902 | 1998.0 | 1.0 | 27.0 |
| 3 | -0.875589 | -0.873644 | -0.881540 | -0.875589 | 0.122365 | 0.265015 | 1998.0 | 1.0 | 28.0 |
| 4 | -0.878212 | -0.876242 | -0.882887 | -0.882887 | 0.139262 | 0.260671 | 1998.0 | 1.0 | 29.0 |
| 5 | -0.884924 | -0.878873 | -0.885608 | -0.884924 | 0.125978 | 0.259405 | 1998.0 | 1.0 | 30.0 |
| 6 | -0.882887 | -0.882887 | -0.895434 | -0.891869 | 0.194279 | 0.254894 | 1998.0 | 2.0 | 2.0 |
| 7 | -0.891869 | -0.881540 | -0.891869 | -0.884924 | 0.171360 | 0.256452 | 1998.0 | 2.0 | 3.0 |
| 8 | -0.887673 | -0.882887 | -0.888367 | -0.885608 | 0.128308 | 0.255934 | 1998.0 | 2.0 | 4.0 |
| 9 | -0.885608 | -0.882887 | -0.888367 | -0.884924 | 0.145131 | 0.256114 | 1998.0 | 2.0 | 5.0 |
| 10 | -0.884243 | -0.880870 | -0.885608 | -0.882887 | 0.136958 | 0.256693 | 1998.0 | 2.0 | 6.0 |
| 11 | -0.884243 | -0.872358 | -0.884243 | -0.875589 | 0.181655 | 0.258951 | 1998.0 | 2.0 | 9.0 |
| 12 | -0.876242 | -0.871718 | -0.876896 | -0.873000 | 0.173713 | 0.259824 | 1998.0 | 2.0 | 10.0 |
| 13 | -0.872358 | -0.872358 | -0.878873 | -0.877553 | 0.139212 | 0.254673 | 1998.0 | 2.0 | 11.0 |
| 14 | -0.876242 | -0.873000 | -0.876896 | -0.873644 | 0.137306 | 0.256199 | 1998.0 | 2.0 | 12.0 |
| 15 | -0.875589 | -0.868548 | -0.877553 | -0.872358 | 0.138337 | 0.256742 | 1998.0 | 2.0 | 13.0 |
| 16 | -0.872358 | -0.869810 | -0.872358 | -0.871080 | 0.131867 | 0.257324 | 1998.0 | 2.0 | 17.0 |
| 17 | -0.871718 | -0.859932 | -0.871718 | -0.861747 | 0.181647 | 0.261926 | 1998.0 | 2.0 | 18.0 |
| 18 | -0.858730 | -0.858133 | -0.867294 | -0.862967 | 0.170992 | 0.259857 | 1998.0 | 2.0 | 19.0 |
| 19 | -0.862356 | -0.861747 | -0.869178 | -0.867294 | 0.160716 | 0.252158 | 1998.0 | 2.0 | 20.0 |
| 20 | -0.866048 | -0.851671 | -0.867294 | -0.855169 | 0.179888 | 0.259651 | 1998.0 | 2.0 | 23.0 |
| 21 | -0.854582 | -0.853996 | -0.859932 | -0.854582 | 0.177650 | 0.260042 | 1998.0 | 2.0 | 24.0 |
| 22 | -0.854582 | -0.841528 | -0.858133 | -0.845411 | 0.199912 | 0.266579 | 1998.0 | 2.0 | 25.0 |
| 23 | -0.845411 | -0.834510 | -0.849372 | -0.835041 | 0.190900 | 0.274437 | 1998.0 | 2.0 | 26.0 |
| 24 | -0.836643 | -0.831875 | -0.843183 | -0.833980 | 0.184114 | 0.275294 | 1998.0 | 2.0 | 27.0 |
| 25 | -0.834510 | -0.834510 | -0.845973 | -0.841528 | 0.171090 | 0.253694 | 1998.0 | 3.0 | 2.0 |
| 26 | -0.849372 | -0.837718 | -0.851671 | -0.838258 | 0.162029 | 0.256821 | 1998.0 | 3.0 | 3.0 |
| 27 | -0.840432 | -0.824676 | -0.840432 | -0.827217 | 0.206793 | 0.267926 | 1998.0 | 3.0 | 4.0 |
| 28 | -0.837180 | -0.828758 | -0.838258 | -0.830310 | 0.197206 | 0.257285 | 1998.0 | 3.0 | 5.0 |
| 29 | -0.831875 | -0.826706 | -0.836107 | -0.827217 | 0.196560 | 0.260854 | 1998.0 | 3.0 | 6.0 |
| 30 | -0.832924 | -0.828243 | -0.843738 | -0.841528 | 0.189173 | 0.210245 | 1998.0 | 3.0 | 9.0 |
| 31 | -0.839342 | -0.826706 | -0.839886 | -0.830310 | 0.199928 | 0.225536 | 1998.0 | 3.0 | 10.0 |
| 32 | -0.821668 | -0.813384 | -0.826197 | -0.813862 | 0.226558 | 0.248039 | 1998.0 | 3.0 | 11.0 |
| 33 | -0.813862 | -0.807274 | -0.818216 | -0.807274 | 0.202087 | 0.257136 | 1998.0 | 3.0 | 12.0 |
| 34 | -0.805430 | -0.805430 | -0.812908 | -0.806350 | 0.188405 | 0.258478 | 1998.0 | 3.0 | 13.0 |
| 35 | -0.806350 | -0.805430 | -0.813384 | -0.809602 | 0.171328 | 0.243007 | 1998.0 | 3.0 | 16.0 |
| 36 | -0.811012 | -0.809602 | -0.815785 | -0.812195 | 0.172300 | 0.230402 | 1998.0 | 3.0 | 17.0 |
| 37 | -0.814822 | -0.807737 | -0.814822 | -0.807737 | 0.152661 | 0.238650 | 1998.0 | 3.0 | 18.0 |
| 38 | -0.808202 | -0.807737 | -0.810541 | -0.809134 | 0.125238 | 0.231044 | 1998.0 | 3.0 | 19.0 |
| 39 | -0.809602 | -0.808202 | -0.814822 | -0.811958 | 0.140103 | 0.215182 | 1998.0 | 3.0 | 20.0 |
| 40 | -0.815303 | -0.812908 | -0.825688 | -0.813862 | 0.172843 | 0.204212 | 1998.0 | 3.0 | 23.0 |
| 41 | -0.811958 | -0.800000 | -0.812908 | -0.800000 | 0.197265 | 0.236817 | 1998.0 | 3.0 | 24.0 |
| 42 | -0.802697 | -0.801794 | -0.811958 | -0.806119 | 0.169430 | 0.199453 | 1998.0 | 3.0 | 25.0 |
| 43 | -0.809134 | -0.807274 | -0.811484 | -0.810541 | 0.137113 | 0.173567 | 1998.0 | 3.0 | 26.0 |
| 44 | -0.810071 | -0.804972 | -0.811958 | -0.807737 | 0.148641 | 0.181582 | 1998.0 | 3.0 | 27.0 |
| 45 | -0.809134 | -0.803604 | -0.809134 | -0.804059 | 0.147674 | 0.192426 | 1998.0 | 3.0 | 30.0 |
| 46 | -0.804059 | -0.801344 | -0.805430 | -0.803604 | 0.150804 | 0.193830 | 1998.0 | 3.0 | 31.0 |
| 47 | -0.804059 | -0.801344 | -0.806811 | -0.803604 | 0.132985 | 0.193830 | 1998.0 | 4.0 | 1.0 |
| 48 | -0.804972 | -0.804059 | -0.807737 | -0.804972 | 0.134933 | 0.182985 | 1998.0 | 4.0 | 2.0 |
| 49 | -0.806350 | -0.805430 | -0.808667 | -0.806811 | 0.137138 | 0.167994 | 1998.0 | 4.0 | 3.0 |
| 50 | -0.807274 | -0.807274 | -0.813384 | -0.812908 | 0.164012 | 0.120337 | 1998.0 | 4.0 | 6.0 |
| 51 | -0.816269 | -0.814822 | -0.823668 | -0.818705 | 0.155418 | 0.079255 | 1998.0 | 4.0 | 7.0 |
| 52 | -0.820676 | -0.819688 | -0.825181 | -0.822666 | 0.142310 | 0.052964 | 1998.0 | 4.0 | 8.0 |
| 53 | -0.822166 | -0.815785 | -0.822666 | -0.817727 | 0.128341 | 0.078705 | 1998.0 | 4.0 | 9.0 |
| 54 | -0.817727 | -0.809602 | -0.822666 | -0.811484 | 0.154661 | 0.110133 | 1998.0 | 4.0 | 13.0 |
| 55 | -0.811958 | -0.805430 | -0.811958 | -0.807737 | 0.161088 | 0.128500 | 1998.0 | 4.0 | 14.0 |

Here is my dataset class (named StockLoader):

    def lnsplit(s):
        head = s.rstrip('0123456789')
        tail = s[len(head):]
        return head, tail

    class StockLoader(Dataset):
        def __init__(self, X, y=None):
            super().__init__()
            self.classes = [0,1]
            self.c = 2
            self.X = X
            if y is not None: self.y = y

        def __getitem__(self, i):
            ticker, index = lnsplit(self.X[i])
            index = int(index)
            window = pixelArray(stock_arrays[ticker][index:index+56,:], multiplier = 2)
            arr3D = np.transpose(window, (2, 0, 1))
            new_tensor = torch.from_numpy(arr3D).float()
            if stock_arrays[ticker][index+56, 3]>stock_arrays[ticker][index+56, 0]:
                y = 1
            else:
                y = 0
            return (new_tensor, y)

        def __len__(self): return len(self.X)

The dataset receives a key such as “AAPL0” and splits it into a ticker (‘AAPL’) and a start index (0). Rows index through index+55 form the input window, and the label for row index+56 is 1 if that day’s closing price (column 3) is higher than its opening price (column 0), and 0 otherwise.
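As a small illustration of the key splitting, window slicing, and labelling just described, here is a minimal sketch. The `stock_arrays` contents below are random stand-in data, not the real AAPL values, and the image conversion step is left out.

```python
import numpy as np

def lnsplit(s):
    # Strip trailing digits to get the ticker; the stripped digits are the index.
    head = s.rstrip('0123456789')
    tail = s[len(head):]
    return head, tail

# Hypothetical stand-in for stock_arrays: 100 rows x 9 columns of made-up data.
rng = np.random.default_rng(0)
stock_arrays = {"AAPL": rng.uniform(-1.0, 1.0, size=(100, 9))}

ticker, index = lnsplit("AAPL0")
index = int(index)

# Input window: rows index .. index+55 (56 rows in total).
window = stock_arrays[ticker][index:index + 56, :]

# Label: compare column 3 (close) with column 0 (open) on row index+56.
target_row = stock_arrays[ticker][index + 56]
label = int(target_row[3] > target_row[0])

print(ticker, index, window.shape, label)
```

Because the label reads row index+56, a key is only usable if the ticker's array has at least index+57 rows.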

Then I created a DataBunch from the two datasets and built the learner:

SL = StockLoader(train_list)

SLV = StockLoader(valid_list)

data = DataBunch.create(SL, SLV, bs=32)

learn = create_cnn(data, resnet50, metrics=error_rate)

learn.loss_func = F.cross_entropy

learn.freeze()

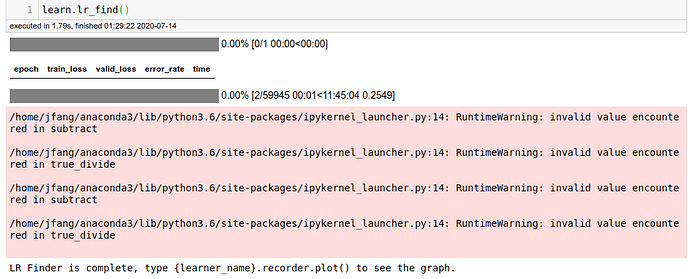

learn.lr_find()

And here is where the problems begin:

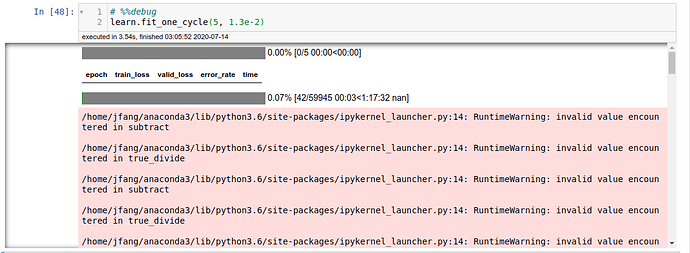

When I tried training, the error diverged.

Here are some of the warnings that I saw (in case the images aren’t large enough):

    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in subtract
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in true_divide
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in subtract
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in true_divide
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in subtract
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in true_divide
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in subtract
    /home/jfang/anaconda3/lib/python3.6/site-packages/ipykernel_launcher.py:14: RuntimeWarning: invalid value encountered in true_divide
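For reference, both kinds of warning can be reproduced with the same rescaling expression used in `GAF.forward`. This is only a sketch under the assumption that the warnings come from that expression, which has not been confirmed here. A window column whose values are all equal gives 0/0 in the division, and a column containing `inf` gives `inf - inf` in the subtraction. The helper name `rescale` and the sample arrays are made up for illustration.

```python
import warnings
import numpy as np

def rescale(i):
    # Same expression as the per-window branch of GAF.forward.
    imax, imin = i.max(), i.min()
    return ((i - imax) + (i - imin)) / (imax - imin)

with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    # Constant column: numerator and denominator are both 0, so 0/0 gives NaN.
    flat = rescale(np.array([0.5, 0.5, 0.5]))
    # Column with an infinite entry: inf - inf gives NaN in the subtraction.
    bad = rescale(np.array([0.1, np.inf, 0.3]))

messages = [str(w.message) for w in caught]
for m in messages:
    print(m)
print(flat, bad)
```

If this is what is happening, the affected windows already contain NaN before they reach the network. Some newer NumPy versions word the division warning as "divide" rather than "true_divide".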

How could I get around this problem? Thanks!