When I run learn.lr_find() and then learn.recorder.plot() repeatedly, the graph is not consistent between runs. Shouldn’t it be the same graph as long as I don’t change any Learner parameters?

I’m getting the same problem and it’s driving me crazy. Sometimes the plot doesn’t even show up at all. If anyone has an idea why this happens please let us know.

I’ve had similar problems. Not sure about the inconsistent graph, but the likely reason the plot doesn’t show up at all is that the loss diverges quickly, even starting from the default learning rate of 1e-7 (start_lr). To check whether this is the case, run it again with the argument stop_div=False and post here what happens.

learn.lr_find(stop_div=False)
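For anyone wondering what stop_div actually changes, here is a rough sketch of a learning rate range test on a toy problem. This is not fastai's code: the function name lr_range_test, the toy loss f(w) = w**2, and the "stop when the loss exceeds 4x the best loss" rule are my own assumptions, picked only to show the idea of stopping early on divergence.

```python
import math

def lr_range_test(start_lr=1e-7, end_lr=10.0, num_it=100, stop_div=True):
    """Toy LR range test on f(w) = w**2 (hypothetical, not fastai's implementation)."""
    w = 1.0
    best_loss = float("inf")
    lrs, losses = [], []
    for i in range(num_it):
        # Grow the learning rate exponentially from start_lr to end_lr.
        lr = start_lr * (end_lr / start_lr) ** (i / (num_it - 1))
        loss = w * w
        lrs.append(lr)
        losses.append(loss)
        best_loss = min(best_loss, loss)
        # Assumed divergence rule: give up once the loss is over 4x the best seen.
        if stop_div and (loss > 4 * best_loss or math.isnan(loss)):
            break
        w -= lr * 2 * w  # plain gradient descent step, gradient of w**2 is 2*w
    return lrs, losses

lrs_stop, _ = lr_range_test(stop_div=True)
lrs_full, _ = lr_range_test(stop_div=False)
print(len(lrs_stop), len(lrs_full))  # the early-stopped run records fewer points
```

With stop_div=True the run ends as soon as the loss blows up, so fewer points get recorded. If that happens within the first few iterations on a real model, there may be too few points left to draw, which would fit the empty plot described above.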

Thanks Robert, passing stop_div=False got it to output plots!

No problem. If you’re ever wondering how to debug or alter a fastai function, go play around with the docs using doc(function_name). The docs are really good and surprisingly accessible. I’m not a great or super experienced coder, and I can usually get what I want done.

I am having a similar problem that I only noticed recently.

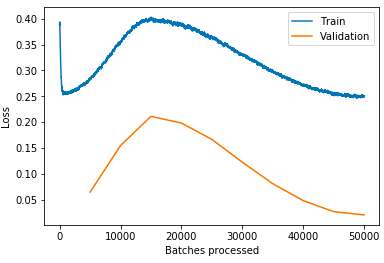

After I run learn.lr_find() and learn.recorder.plot(), I run learn.fit_one_cycle(3).

However, the second and third epochs always have a higher loss and lower accuracy than the first.

Sometimes it never comes back down, but if I don’t use lr_find(), it works fine.

Is my learning rate too high for the dataset, or is the regularisation too heavy? I use wd=1e-1 and ps=0.5. Or is it because of mixed precision training?

I understand that lr_find() is a mock training run that resets the weights afterwards. But I find that creating a new learner always works better than using the model directly after lr_find().

I am making an autoencoder, but this also happened when I was training a resnet.
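To make the "mock training that resets the weights" idea concrete, here is a minimal sketch of the snapshot-and-restore pattern. Everything in it is hypothetical: a plain dict stands in for the learner's state, and mock_lr_search is a made-up name, so this only illustrates the pattern and says nothing about how fastai implements it.

```python
import copy

def mock_lr_search(state, lrs):
    """Run throwaway updates on `state`, then restore the snapshot taken on entry."""
    snapshot = copy.deepcopy(state)  # weights and optimizer buffers before the search
    losses = []
    for lr in lrs:
        losses.append(state["w"] ** 2)            # toy loss f(w) = w**2
        grad = 2 * state["w"]
        state["momentum"] = 0.9 * state["momentum"] + grad
        state["w"] -= lr * state["momentum"]      # momentum SGD step
    # Put everything that was snapshotted back in place.
    state.clear()
    state.update(snapshot)
    return losses

state = {"w": 1.0, "momentum": 0.0}
before = copy.deepcopy(state)
losses = mock_lr_search(state, [1e-3, 1e-2, 1e-1])
assert state == before  # the search left no trace in the snapshotted state
```

The restore can only bring back what was captured in the snapshot. Anything that lives outside it would carry over into the next fit, which may be why starting from a fresh learner is the cleaner comparison when results look different after lr_find().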