If you are using Windows 10 or 11 Pro with a recent NVIDIA GPU (with the latest drivers installed), you don’t have to use a local installation of Ubuntu to develop with fastai. Once you have Docker Desktop and Visual Studio Code installed and running, you can use dev containers from Visual Studio Code.

Steps:

-

Create a project directory like

D:\Projects\Fastai22 -

Make a subdirectory

.devcontainer -

Put the files (content of those files at the end of this post)

devcontainer.jsonandDockerfilein this directory

-

Open the project directory in Microsoft Visual Code (VC)

-

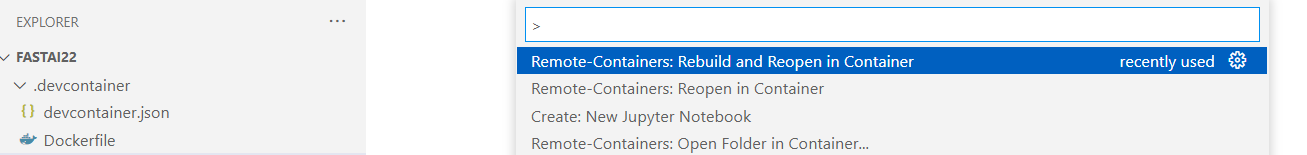

Now press F1 in VC to open the command palette and choose Remote-Containers: Rebuild and Reopen in Container

-

VC will run Docker with the linux image (based on the provided Dockerfile) with GPU support!

-

When the image runs you can open and run notebooks and scripts from the project directory. They will be executed in this remote container using the GPU when needed.

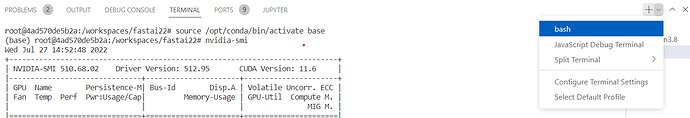
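A quick way to confirm that the container really sees the GPU is to ask PyTorch from a notebook cell or a script. This is only a minimal sketch: `describe_gpu` is a hypothetical helper name, not part of fastai or PyTorch, and it assumes PyTorch is importable (it ships with the base image used in the Dockerfile).

```python
def describe_gpu() -> str:
    """Return a one-line summary of what PyTorch can see."""
    try:
        import torch  # expected to be preinstalled in the NVIDIA PyTorch base image
    except ImportError:
        return "PyTorch is not installed in this environment"
    if not torch.cuda.is_available():
        return "PyTorch is installed, but no CUDA device is visible"
    return f"CUDA device 0: {torch.cuda.get_device_name(0)}"


print(describe_gpu())
```

If it reports that no CUDA device is visible, the `--gpus all` entry in `runArgs` and the NVIDIA driver on the Windows side are probably the first things to check.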

At the bottom of the VC window you can open multiple terminal sessions.

You can even use Jupyter Notebook by typing `jupyter notebook` in a new terminal session. Port 8888 is opened in `devcontainer.json`.

However, you can use VC just fine for your notebooks.

Changes you make to the notebooks will be saved to your project directory.
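If you do start `jupyter notebook` in the container, you may want to check from the Windows side that forwarded port 8888 is reachable. The sketch below assumes a Python interpreter is available on the host; `port_is_open` is a hypothetical helper written for this post, built only on the standard `socket` module.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


if __name__ == "__main__":
    # 8888 is the port listed under "forwardPorts" in devcontainer.json.
    print("Jupyter port reachable:", port_is_open("localhost", 8888))
```

A successful connection only shows that something is listening on the port, not that it is Jupyter, so treat the result as a first sanity check.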

To get out of the remote container, press F1 and choose Remote-Containers: Reopen Folder Locally.

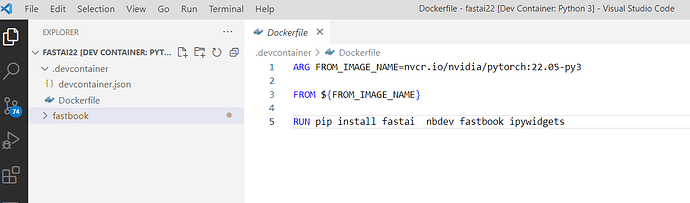

Dockerfile

    ARG FROM_IMAGE_NAME=nvcr.io/nvidia/pytorch:22.05-py3
    FROM ${FROM_IMAGE_NAME}
    RUN pip install fastai nbdev fastbook ipywidgets
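After the image has been built, you can check from a terminal or notebook in the container that the `pip install` line did what you expected. This is a small sketch using the standard library's `importlib.metadata`; `installed_versions` is a hypothetical helper, and the package list simply mirrors the Dockerfile above.

```python
from importlib import metadata

# Distribution names taken from the `pip install` line in the Dockerfile.
PACKAGES = ["fastai", "nbdev", "fastbook", "ipywidgets"]


def installed_versions(names):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions(PACKAGES).items():
    print(f"{name}: {version or 'not installed'}")
```

Because the Dockerfile does not pin versions, the numbers printed will depend on when the image was built.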

devcontainer.json

    {
        "name": "Python 3",
        "build": {
            "dockerfile": "Dockerfile",
            "context": ".."
        },
        // Configure tool-specific properties.
        "customizations": {
            // Configure properties specific to VS Code.
            "vscode": {
                // Set *default* container specific settings.json values on container create.
                "settings": {
                    "python.defaultInterpreterPath": "/usr/local/bin/python",
                    "python.linting.enabled": true,
                    "python.linting.pylintEnabled": true,
                    "python.formatting.autopep8Path": "/usr/local/py-utils/bin/autopep8",
                    "python.linting.flake8Path": "/usr/local/py-utils/bin/flake8",
                    "python.linting.pycodestylePath": "/usr/local/py-utils/bin/pycodestyle",
                    "python.linting.pydocstylePath": "/usr/local/py-utils/bin/pydocstyle",
                    "python.linting.pylintPath": "/usr/local/py-utils/bin/pylint"
                },
                // Add the IDs of extensions you want installed when the container is created.
                "extensions": [
                    "ms-python.python",
                    "ms-python.vscode-pylance"
                ]
            }
        },
        "runArgs": ["--gpus", "all", "--ulimit", "memlock=-1", "--ulimit", "stack=67108864"],
        // Use 'forwardPorts' to make a list of ports inside the container available locally.
        "forwardPorts": [8888]
    }
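Before opening the folder in VC, it can help to confirm that both files ended up in the `.devcontainer` subdirectory described in the steps. The following is a rough sketch, not an official tool: `missing_devcontainer_files` is a hypothetical helper, and the path in the example is the one used earlier in this post.

```python
from pathlib import Path

# File names from the steps in this post.
REQUIRED_FILES = ("devcontainer.json", "Dockerfile")


def missing_devcontainer_files(project_dir):
    """Return the required files that are absent from <project_dir>/.devcontainer."""
    config_dir = Path(project_dir) / ".devcontainer"
    return [name for name in REQUIRED_FILES if not (config_dir / name).is_file()]


if __name__ == "__main__":
    # Example path from the post; adjust to your own project directory.
    missing = missing_devcontainer_files(r"D:\Projects\Fastai22")
    print("Missing:", missing if missing else "nothing, layout looks complete")
```

An empty list means both files are where the Rebuild and Reopen in Container command expects to find them.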