Hello everybody,

I am trying to predict a time-varying signal that also has an on/off switch. I thought an RNN with an extra input variable, to which I would feed the on/off state, could be a good match, but I’m having trouble even with an artificial toy example: the RNN appears to be trying to fit both the “on” and the “off” periods without using the extra variable.

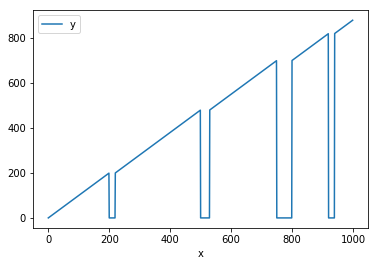

This is how my toy data looks like:

(the code to generate it is here)
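For readers without the linked code, here is a minimal sketch of a toy signal of this kind. It is not my exact generator: the sine wave, the period, the segment lengths and the name `make_toy_signal` are all illustrative assumptions.

```python
import numpy as np

def make_toy_signal(n_steps=1000, period=50, min_len=40, max_len=120, seed=0):
    """Return (signal, state): a sine wave that is zeroed whenever state == 0.

    All parameters are hypothetical choices for illustration only.
    """
    rng = np.random.default_rng(seed)
    state = np.empty(n_steps, dtype=np.int64)
    pos, on = 0, 1  # start in the "on" state
    while pos < n_steps:
        # Each on/off segment gets a random length in [min_len, max_len].
        length = int(rng.integers(min_len, max_len + 1))
        state[pos:pos + length] = on  # slices past the end are clipped
        pos += length
        on = 1 - on  # alternate between on and off
    t = np.arange(n_steps)
    signal = np.sin(2 * np.pi * t / period) * state
    return signal, state

signal, state = make_toy_signal()
```

The key property is that the signal is exactly zero during the “off” segments, so the on/off state alone determines the output there.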

I pre-process the data to make sure it conforms to the input format Keras expects, [samples, time steps, features] (the code is here). The training/test split is fixed: the “old” values are used for training, and predictions for the test set are made recursively, feeding the previously predicted value(s) back in as inputs (except for the on/off state variable, for which the true value is used).
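The windowing and the recursive prediction described above can be sketched roughly as follows. This is a simplified stand-in, not the linked code: `make_windows`, `recursive_forecast` and `predict_fn` are hypothetical names, and I am assuming each time step carries the pair [signal, state] and that the model predicts one step ahead.

```python
import numpy as np

def make_windows(signal, state, n_lags):
    """Build X with shape [samples, time steps, features] and y with shape [samples]."""
    feats = np.stack([signal, state], axis=-1).astype(np.float32)  # (N, 2)
    X = np.stack([feats[i:i + n_lags] for i in range(len(signal) - n_lags)])
    y = np.asarray(signal[n_lags:], dtype=np.float32)  # value right after each window
    return X, y

def recursive_forecast(predict_fn, history, future_state, n_lags):
    """Forecast len(future_state) steps, feeding each prediction back as input.

    history: array of shape (>= n_lags, 2) holding [signal, state] rows.
    future_state: true on/off values for the forecast steps (never predicted).
    predict_fn: any callable mapping a (1, n_lags, 2) array to one value.
    """
    window = np.asarray(history[-n_lags:], dtype=np.float32).copy()
    preds = []
    for s in future_state:
        y_hat = float(np.asarray(predict_fn(window[np.newaxis])).reshape(-1)[0])
        preds.append(y_hat)
        # Drop the oldest step; append the predicted signal with the TRUE state.
        new_row = np.array([[y_hat, s]], dtype=np.float32)
        window = np.vstack([window[1:], new_row])
    return np.array(preds)

# Tiny demo with a dummy "model" that predicts last value + 1.
demo_signal = np.arange(10, dtype=np.float32)
demo_state = np.ones(10, dtype=np.float32)
X, y = make_windows(demo_signal, demo_state, n_lags=3)
demo_preds = recursive_forecast(
    lambda w: w[:, -1, 0] + 1,
    np.stack([demo_signal, demo_state], axis=-1),
    future_state=[1, 0, 1],
    n_lags=3,
)
```

Note that with this layout the window contains the state up to the last observed step, but not the state at the step being predicted. Whether the model also sees the state of the target step is a design choice that I have left out of the sketch.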

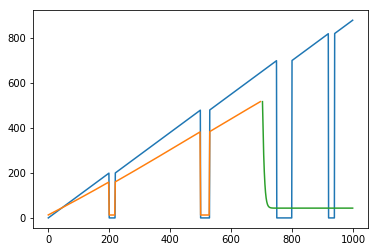

If I try to fit an RNN without the extra state variable, I’m getting what I expect to get (original data is in blue, training set predictions are in orange, and the test set predictions are in green):

So the RNN is able to start overfitting the training set, but fails completely on the test set, predicting a constant value.

Then I fitted an RNN with the extra variable, combining an LSTM with the extra input, which I pass through a tiny embedding (as it’s categorical; I’m not sure whether this is needed, or whether it does what I hope it does). The code is here.
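In outline, the architecture looks something like the sketch below. It is a simplified reconstruction rather than the linked code: the layer sizes, the name `build_model`, and the choice to concatenate the embedding with the signal at every time step before the LSTM are assumptions.

```python
import numpy as np
from tensorflow import keras
from tensorflow.keras import layers

def build_model(n_lags, n_units=16, emb_dim=2):
    """LSTM over the signal plus an embedded on/off state (hypothetical sizes)."""
    signal_in = keras.Input(shape=(n_lags, 1), name="signal")
    state_in = keras.Input(shape=(n_lags,), dtype="int32", name="state")
    # Two categories (off=0, on=1), each mapped to a small learned vector.
    state_emb = layers.Embedding(input_dim=2, output_dim=emb_dim)(state_in)
    # Join signal and embedded state per time step: (batch, n_lags, 1 + emb_dim).
    x = layers.Concatenate(axis=-1)([signal_in, state_emb])
    x = layers.LSTM(n_units)(x)
    out = layers.Dense(1)(x)  # one-step-ahead prediction
    model = keras.Model([signal_in, state_in], out)
    model.compile(optimizer="adam", loss="mse")
    return model

model = build_model(n_lags=20)
```

For a binary flag, the embedding is probably optional: feeding the state in as a plain 0/1 numeric feature next to the signal should carry the same information.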

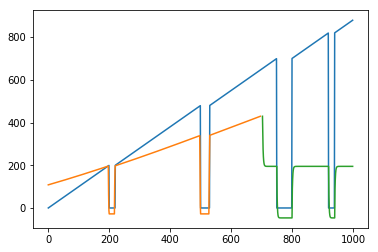

And this is what I end up with:

It appears that the extra variable is indeed being used, but it also seems that the RNN is still trying to capture all of the signal, therefore failing in much the same way as in the previous example.

Does anyone have any ideas on what could help solve this? I have a nagging suspicion that I’m making a silly mistake somewhere, but I just can’t find it…

Many thanks in advance,

Dmitrij