Hi all,

This is about training an RNN for Seq2Seq. If anyone has any ideas it would be a huge help!

Context

I’m attempting to adapt the language translation model from lesson 11 to create a question-generating model. The idea comes from a couple of recent papers (for example: https://arxiv.org/pdf/1705.00106.pdf) which show that you can train an RNN to take in a sentence and output a question about that sentence. With this setup, the training data is sentence-question pairs, where the input is the sentence and the target is the question.

This is a seq2seq transformation, very similar to the French-to-English translation done in lesson 11, so I’m trying to adapt that code. I used the SQuAD dataset (https://rajpurkar.github.io/SQuAD-explorer/) and reformatted it into a list of (sentence, question) tuples. This gives me ~85,000 data points.
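
For anyone who wants to picture the data prep, here is a minimal sketch of how such a reformatting could look. It assumes the SQuAD v1.1 JSON layout (`data` → `paragraphs` → `context` / `qas` → `answers` with `answer_start`) and uses a naive regex sentence splitter. The function name `sentence_question_pairs` and the splitting rule are hypothetical illustrations, not necessarily what the linked repo does.

```python
import re


def sentence_question_pairs(squad):
    """Yield (sentence, question) pairs from a SQuAD v1.1-style dict.

    Hypothetical helper: the sentence is the one that contains the
    first answer's start offset.
    """
    for article in squad["data"]:
        for para in article["paragraphs"]:
            context = para["context"]

            # Naive sentence spans (an assumption): split on whitespace
            # that follows '.', '!' or '?'. A real tokenizer would do better.
            spans, start = [], 0
            for m in re.finditer(r"(?<=[.!?])\s+", context):
                spans.append((start, m.start()))
                start = m.end()
            spans.append((start, len(context)))

            for qa in para["qas"]:
                if not qa["answers"]:
                    continue  # skip questions with no answer span
                pos = qa["answers"][0]["answer_start"]
                for s, e in spans:
                    if s <= pos < e:
                        yield context[s:e], qa["question"]
                        break
```

Usage would be something like `pairs = list(sentence_question_pairs(json.load(open(path))))`, where `path` points at a downloaded SQuAD training file.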

Progress

-I modified Jeremy’s model and uploaded this with my data here: https://github.com/drewserles/QuestionGen

-Everything runs OK, and I can make the model overfit the data and exactly reproduce the target, so I believe it’s expressive enough. I tested this by using a subset of the training data.

Where I’m stuck

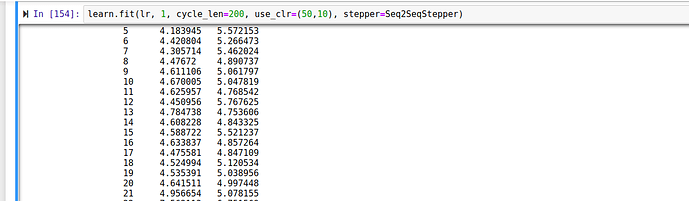

-I can’t get good results when trying to train on all my data. The training and validation errors get down to around 4 and then bounce up and down - I can’t seem to get them to continue to decrease.

-I have tried adjusting my dropout values and haven’t had any success. The image above shows an example of how my training looks.

-I tried running this overnight and didn’t get anything different.
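
To put the plateau mentioned above in perspective, here is a small worked calculation. It assumes the reported error is a per-token cross-entropy loss in nats, which is an assumption about the setup rather than something confirmed here. Under that assumption, the loss converts to perplexity by exponentiation.

```python
import math


def perplexity(loss_nats):
    """Convert a per-token cross-entropy loss (in nats) to perplexity."""
    return math.exp(loss_nats)


# Assumption: "around 4" is a per-token cross-entropy in nats.
print(round(perplexity(4.0), 1))  # → 54.6
```

Read this way, a loss of about 4 would mean the model is roughly as uncertain as if it were choosing uniformly among ~55 tokens at each step.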

Has anyone faced a similar issue? If you have any recommendations, it would be fantastic. Thanks so much.