Hello,

I am working on training RetinaFace model in tensorflow and facing interesting issue with landmarks. This model has two outputs: standard one - boxes for detected faces and their improvement - 5 landmarks for eyes, nose and mouth.

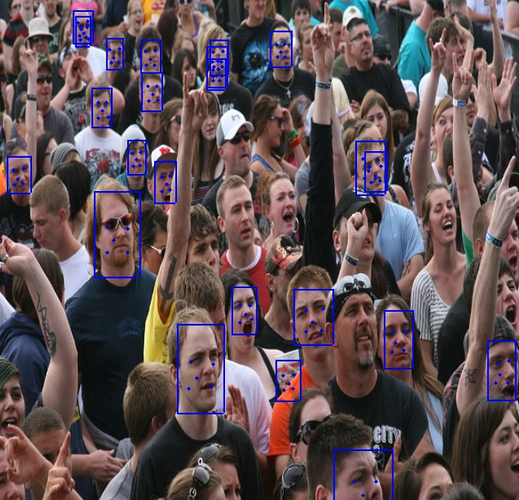

How it should look:

Landmarks regression is part of total loss. Some data has landmarks ground truth, some has only boxes for faces and no landmarks. When ground truth has no data I set the loss for landmarks to 0. While training the issue looks as landmarks overfit on bad predictions.

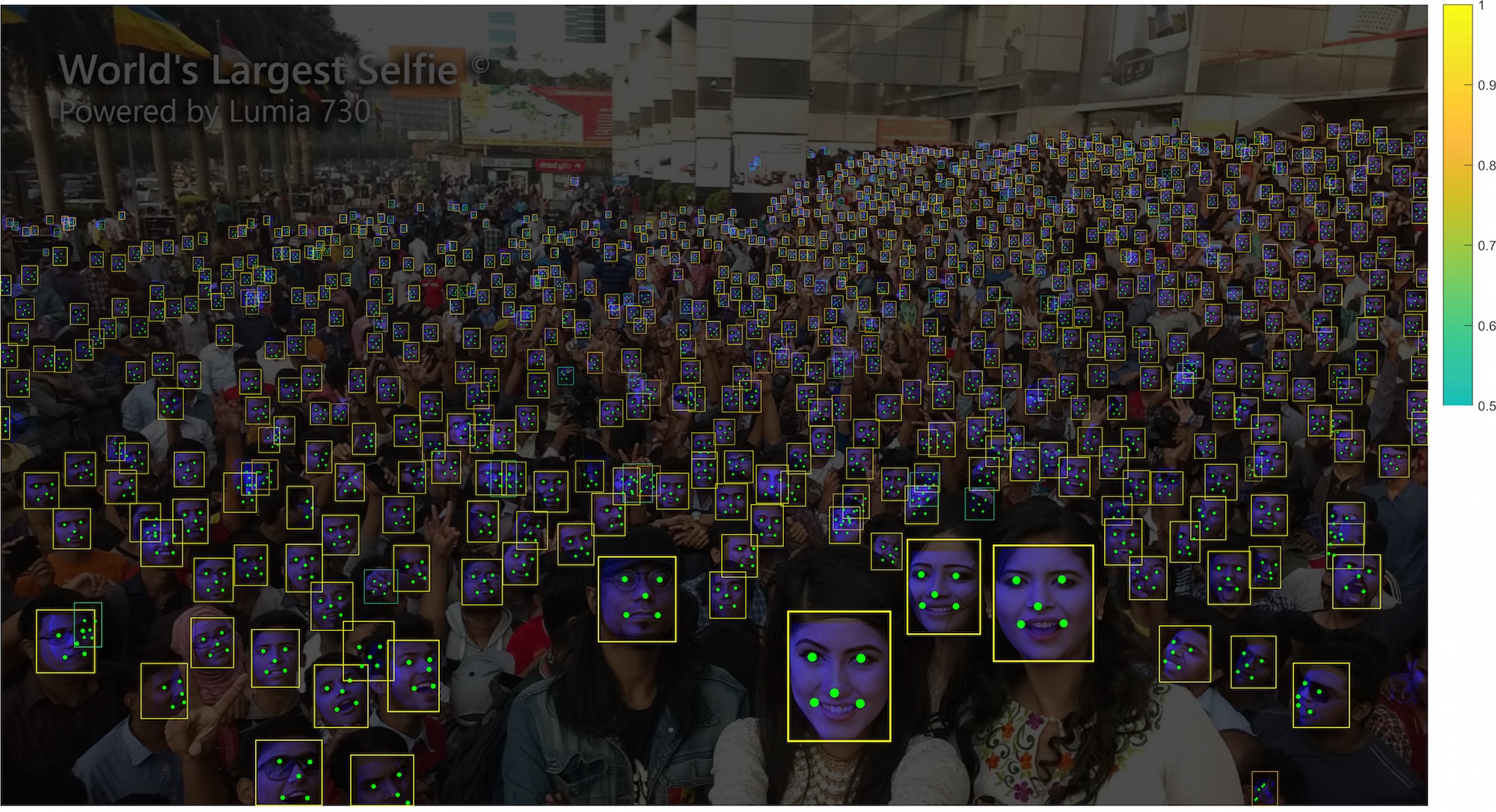

What I get:

Most of landmarks don’t learn and stay along the y=x line. The question is are there any standard ways to overcome big amounts of unlabeled data?

I thought about adding weight to landmarks, but now, when I wrote down the question, I have second thought and idea that loss = 0 is bad and I should try setting it high, so model won’t think that learning y=x is good result.

Any ideas and suggestions are welcome.