Hi,

I am working on a multi-label image classification problem on a Fast.ai Paperspace instance.

Because of data-quality problems, not all images are fully loaded onto the machine (a separate issue that I am working on).
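One way to list partially downloaded files before training is to check that each JPEG starts with the start-of-image marker (`FF D8`) and ends with the end-of-image marker (`FF D9`). This is a minimal sketch that assumes the incomplete images are simply truncated files. It is only a heuristic, since a file can contain both markers and still be corrupt. `looks_complete_jpeg` and `find_incomplete_jpegs` are hypothetical helper names, not fastai functions.

```python
import os
from pathlib import Path

JPEG_SOI = b"\xff\xd8"  # JPEG start-of-image marker
JPEG_EOI = b"\xff\xd9"  # JPEG end-of-image marker

def looks_complete_jpeg(path, tail_len=64):
    """Heuristic check: a fully written JPEG begins with SOI and ends with EOI."""
    with open(path, "rb") as f:
        head = f.read(2)
        f.seek(0, os.SEEK_END)
        size = f.tell()
        f.seek(max(0, size - tail_len))  # read only the last few bytes
        tail = f.read()
    # Tolerate padding bytes that some tools append after the EOI marker.
    return head == JPEG_SOI and tail.rstrip(b"\x00\r\n ").endswith(JPEG_EOI)

def find_incomplete_jpegs(folder, suffix=".jpeg"):
    """Return the sorted file names in `folder` that fail the heuristic check."""
    return sorted(p.name for p in Path(folder).glob("*" + suffix)
                  if not looks_complete_jpeg(p))
```

For example, `find_incomplete_jpegs('/home/paperspace/images/train_images')` would list the suspect files in the training folder used below, without decoding any image.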

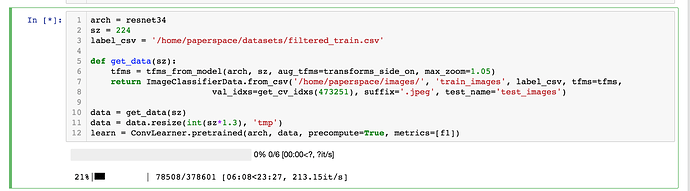

I am running this snippet:

```python
from fastai.conv_learner import *  # fastai 0.7 API (ImageClassifierData, ConvLearner, ...)

arch = resnet34
sz = 224
label_csv = '/home/paperspace/datasets/filtered_train.csv'

def get_data(sz):
    tfms = tfms_from_model(arch, sz, aug_tfms=transforms_side_on, max_zoom=1.05)
    return ImageClassifierData.from_csv('/home/paperspace/images/', 'train_images', label_csv, tfms=tfms,
                                        val_idxs=get_cv_idxs(473249), suffix='.jpeg', test_name='test_images')

data = get_data(sz)
data = data.resize(int(sz*1.3), 'tmp')  # caches resized copies of the images in the 'tmp' folder
learn = ConvLearner.pretrained(arch, data, precompute=True, metrics=[f1])
```

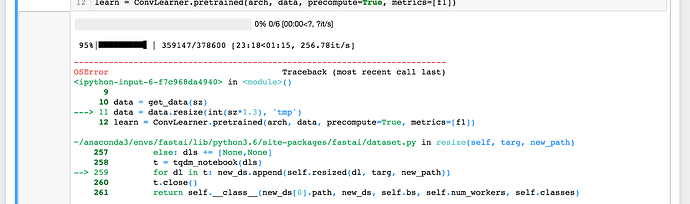

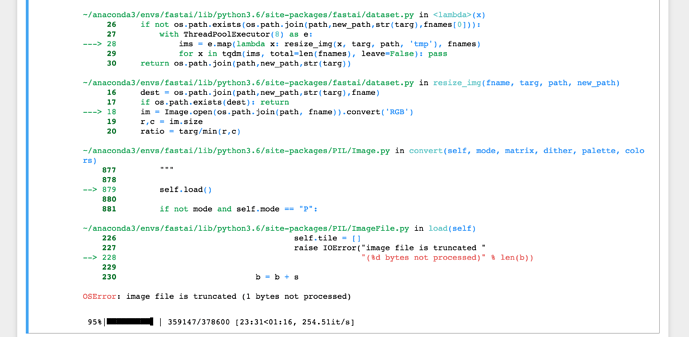

Whenever the code reaches an image that is not fully loaded, it crashes with these errors.

I run `%debug` to identify the image and reprocess it, but the bigger question is what to do after that. How do I recover safely from this?

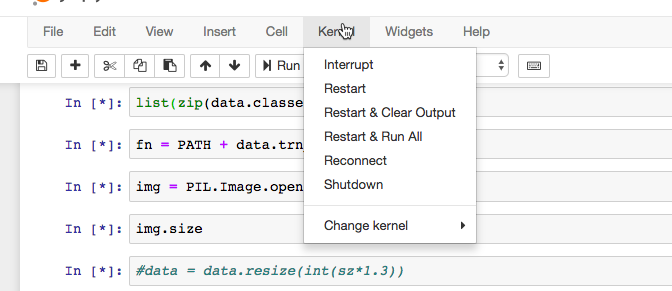

- Run the above snippet again?

- Delete the `tmp` folder or not?

- Restart the kernel or not?
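If an image cannot be reprocessed, one alternative is to drop its row from the label CSV so that `from_csv` never tries to open it. This sketch assumes the CSV has a header row and that its first column holds the image id without the `.jpeg` suffix (which is what `suffix='.jpeg'` in `from_csv` implies). `drop_images_from_csv` is a hypothetical helper. It returns the number of remaining rows, because a hard-coded `get_cv_idxs(473249)` would no longer match the file once rows are removed.

```python
import csv

def drop_images_from_csv(label_csv, out_csv, bad_ids):
    """Copy label_csv to out_csv, skipping rows whose id (first column) is in bad_ids.

    Returns the number of data rows written (header excluded).
    """
    bad = set(bad_ids)
    n_rows = 0
    with open(label_csv, newline="") as src, open(out_csv, "w", newline="") as dst:
        reader = csv.reader(src)
        writer = csv.writer(dst)
        writer.writerow(next(reader))  # keep the header row unchanged
        for row in reader:
            if row and row[0] not in bad:  # skip blank lines and bad images
                writer.writerow(row)
                n_rows += 1
    return n_rows
```

The returned count could then be passed as `get_cv_idxs(n)` together with the new CSV path, so that the validation indices stay within the filtered file.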

Thanks!