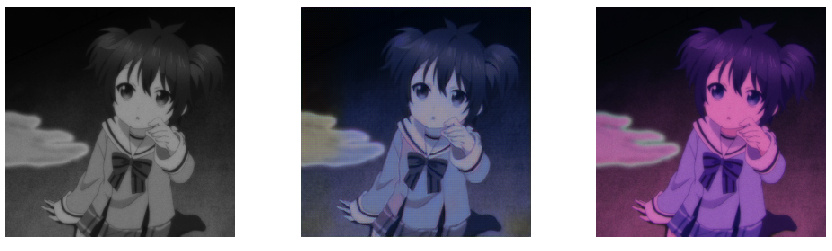

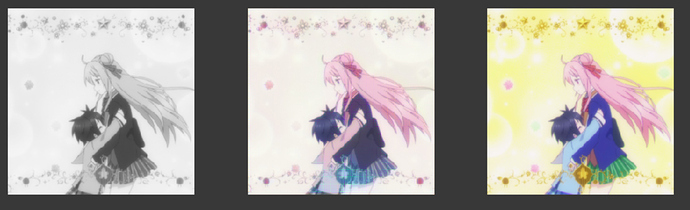

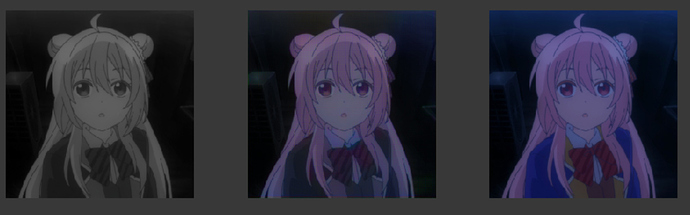

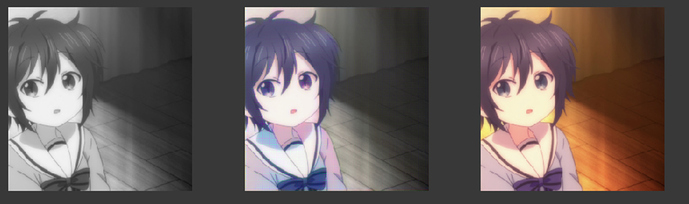

Left to right: Black and white, Predict, Target

I don’t know what to say about the prediction. I like the predicted picture more than the target for this one.


I don’t know why. It is so weird. I have been experimenting with this for many days.

First: I cannot use the ImageNet VGG16_bn or Fastai’s R34, because they just do not work on my dataset, or maybe they do not work on Japanese animation at all. When it does not work, it only paints the picture in pink and lighter pink. I see the same problem with Fastai’s learned Resnet model when I use learn.module.eval() and require_grad(r34, False).

To be honest, I love FastAI because it handles the training folder more easily and quickly than plain Pytorch. However, I think the reason the pre-trained Resnet34 does not work is that I do not know how to use the with_hooks function in fastai. Once I know how to use it with Fastai’s Resnet model, I think the results could be better.

I found out that I do not really know how to use hook_outputs on Fastai’s Resnet.
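For anyone stuck on the same step, here is a minimal sketch of pulling intermediate features out of a frozen network with plain PyTorch forward hooks. As far as I understand, fastai's hook_outputs is built on the same mechanism, but this sketch does not use fastai. The tiny `backbone` below is a hypothetical stand-in for a pre-trained Resnet so the example runs without downloading weights, and the layer indices and names (`relu1`, `relu2`) are only illustrative.

```python
import torch
import torch.nn as nn

# Hypothetical stand-in for a pre-trained backbone (assumption: in practice
# this would be a Resnet/VGG body with loaded weights).
backbone = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1), nn.ReLU(),
    nn.MaxPool2d(2),
    nn.Conv2d(8, 16, kernel_size=3, padding=1), nn.ReLU(),
    nn.MaxPool2d(2),
)

# Freeze it: eval mode plus no gradients for its parameters.
backbone.eval()
for p in backbone.parameters():
    p.requires_grad_(False)

features = {}  # filled by the hooks on every forward pass

def save_output(name):
    # Returns a hook that stores the layer's output under `name`.
    def hook(module, inputs, output):
        features[name] = output
    return hook

# Hook the activations just before each pooling layer.
handles = [
    backbone[1].register_forward_hook(save_output("relu1")),
    backbone[4].register_forward_hook(save_output("relu2")),
]

x = torch.randn(1, 3, 32, 32)  # fake image batch
backbone(x)                    # the hooks capture the features here

shapes = {name: tuple(f.shape) for name, f in features.items()}

# Remove the hooks when done so they do not leak into later passes.
for h in handles:
    h.remove()
```

The stored tensors in `features` are what a feature loss would compare between the predicted and the target image.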

I know the current result is still not really great, but it gets better with the R50 model.

When it fails:

It fails when using the ImageNet pre-trained model or fastai’s R34 (I think that is because I don’t know how to extract features from it).

I tried these with 10 epochs, and I am not sure whether I should train more.

Even though the style is very different, it can still recolor it:

One question I have about this recolorization work is whether working in the hue-saturation-value space (instead of RGB) would bring improvements?

As you already have the value (the black and white picture), it is one less channel to predict. Plus, the colors often seem to be very saturated in Japanese anime, which would make the saturation channel easy to predict. Meanwhile, errors in the last channel, hue, might not matter that much for most targets.
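A minimal sketch of that idea, using only Python's standard `colorsys` module: split each pixel into the value channel (the model input) and the hue/saturation pair (what the model would have to predict), then merge them back. The function names are hypothetical. One assumption to flag: HSV value is max(R, G, B), which is not the same thing as a usual grayscale conversion, so treating the black and white picture as the value channel is only an approximation.

```python
import colorsys

def split_value_and_color(rgb_pixels):
    """Split RGB pixels (floats in [0, 1]) into values and (hue, saturation) pairs."""
    values, hue_sat = [], []
    for r, g, b in rgb_pixels:
        h, s, v = colorsys.rgb_to_hsv(r, g, b)
        values.append(v)         # known input: the "black and white" part
        hue_sat.append((h, s))   # the two channels left to predict
    return values, hue_sat

def merge_value_and_color(values, hue_sat):
    """Rebuild RGB pixels from values and (possibly predicted) hue/saturation."""
    return [colorsys.hsv_to_rgb(h, s, v) for v, (h, s) in zip(values, hue_sat)]

# Tiny fake "image": a saturated red, a muted blue, and a pure gray.
pixels = [(1.0, 0.0, 0.0), (0.2, 0.4, 0.6), (0.5, 0.5, 0.5)]
values, hue_sat = split_value_and_color(pixels)
rebuilt = merge_value_and_color(values, hue_sat)
```

With a real model, `hue_sat` would be replaced by the network's prediction before merging.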

I just started this project a few days ago. I am not a professional. Could you explain to me what hue-saturation-value space (instead of RGB) means, since you are using those terms? Four days ago, I had no idea what recoloring or channel really meant.

But I can tell you as you keep doing it, you will learn a lot from it.

See! With another new approach, it gets better. Before this, it could not even color the red bow.

Take a good look at the picture. If it does not understand the clothing, it will not recognize it.

This is just my guess. I remember Jason on YouTube said it was because of some kind of Gaussian problem, which I don’t really understand.

There are many easy ways to improve this.

The hue-saturation-value encoding is an alternative to RGB: https://en.wikipedia.org/wiki/HSL_and_HSV

You still have three channels, but instead of encoding red, green and blue (RGB), they encode the hue (the color: blue or red), the saturation (how strong the color is) and the value or lightness (how dark/light the color is: the black and white information).

It’s a space that has many good properties for color manipulation (being more natural than RGB). You should easily find Python code to convert between RGB and HSV (HSL is a closely related variant).
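For example, Python's standard library already ships such a conversion in the `colorsys` module. Here is a short sketch; the two 8-bit helper functions are hypothetical wrappers added for illustration, since `colorsys` itself works on floats in [0, 1], one pixel at a time.

```python
import colorsys

# Pure red: hue 0, fully saturated, full value.
red_hsv = colorsys.rgb_to_hsv(1.0, 0.0, 0.0)

# Mid gray: no saturation at all, only the value carries information.
gray_hsv = colorsys.rgb_to_hsv(0.5, 0.5, 0.5)

def rgb8_to_hsv(r, g, b):
    """Convert 8-bit RGB (0-255) to HSV floats in [0, 1]."""
    return colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)

def hsv_to_rgb8(h, s, v):
    """Convert HSV floats in [0, 1] back to 8-bit RGB (0-255)."""
    r, g, b = colorsys.hsv_to_rgb(h, s, v)
    return tuple(round(c * 255) for c in (r, g, b))

# Round trip on an arbitrary color.
round_trip = hsv_to_rgb8(*rgb8_to_hsv(200, 30, 60))
```

For whole images, a vectorized conversion from an image library would be much faster than looping over pixels like this.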

I can not answer. I think you are right because the image-net pre-trained model just does not work at all.

Did you see improvement from that?

Can I take a look at your recolorization work? I think it is great!

Let’s discuss the details by message to avoid cluttering this thread.