Is this a correct way to find the iou of semantic segmentation?

def test_images_accuracy(model, raw_img_folder, label_folder, label_colors):

#get all of the camvid images from test set

raw_imgs_loc = list(glob.glob(raw_img_folder + "/*.png"))

label_len = len(label_colors)

iou = np.zeros((label_len))

for i, img_loc in enumerate(raw_imgs_loc):

print(i, ":caculate accuracy of image:", img_loc)

#convert the image to colorful segmentation map

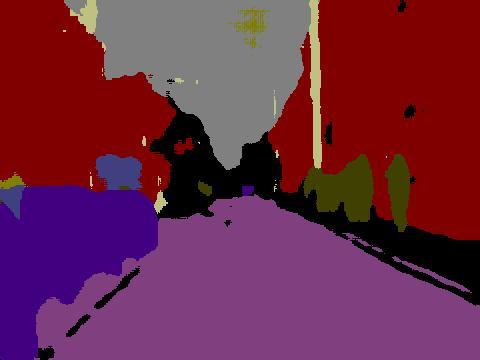

segmap = to_segmap(model, img_loc, label_colors)

#this is the true label

label = pil.Image.open(label_folder + "/" + img_loc.split("/")[-1][0:-4] + "_L.png")

label = np.array(label)

for i, color in enumerate(label_colors):

real_mask = label[:,:,] == color

predict_mask = segmap[:,:,] == color

true_intersect_mask = (real_mask & predict_mask)

TP = true_intersect_mask.sum() #true positive

FP = predict_mask.sum() - TP #false positive

FN = real_mask.sum() - TP #false negative

#print("TP, FP, FN", TP, FP, FN)

iou[i] += TP/float(TP + FP + FN)

return iou / len(raw_imgs_loc) * 100, mean_acc / len(raw_imgs_loc)

The results are very weird, it perform too well compare with the LinkNet paper.Left side is the results of my model, right side is the result of paper

iou of Sky is 84.4839562309 vs 92.8

iou of Building is 81.0601742644 vs 88.8

iou of Sidewalk is 60.76727697 vs 88.4

iou of Column_Pole is 75.3379892824 vs 37.8

iou of Road is 85.3882216306 vs 96.8

iou of Tree is 82.8543640992 vs 85.3

iou of SignSymbol is 78.8773600477 vs 41.7

iou of Fence is 79.7187205135 vs 57.8

iou of Car is 76.1308416568 vs 77.6

iou of Pedestrian is 76.3787193096 vs 57.0

iou of Bicyclist is 61.4157209066 vs 27.2

average iou: 76.579 vs 68.3

Probability

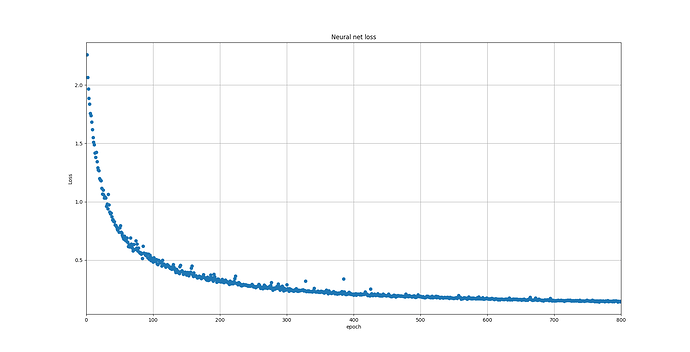

1 : overfit, since camvid dataset are very similar and small + I train with 800 epoch, this is not a surprise. I test it on video of youtube, it do not perform as good as camvid

2 : my network architectures is wrong

3 : the paper do not utilize(?) data augmentation(I use random_crop and horizontal flip)

4 : My hyper parameters are different with the paper, the paper train with 768 * 512, I train with 128*128

By the way, how to find iIoU?

Edit : Do anyone have the dataset of cityscapes?I do not have permission to download the dataset.