You may want to take a look at this repo. There are a couple of notebooks and code that may be useful.

Hi, I was trying to use the blended augmentations repo with my pretrained ResNeXt101 model (from the pytorchcv model zoo). I want to use `cnn_learner(data, base_arch, custom_head).ricap()` or `.blend(**kwargs)` to add augmentations, as my image dataset (100k images, 42 classes) is quite varied and I want my model to be more robust. However, fastai keeps raising this error: `AttributeError: 'Learner' object has no attribute 'blend'`

I read that there is a difference between using `cnn_learner()` and `Learner()`. Is this the reason? Jeremy said `cnn_learner()` is superior, but is it worth using `Learner()` if I want the power of custom augmentations?
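A possible explanation, sketched under assumptions: in fastai v1, `cnn_learner()` builds and returns an ordinary `Learner`, so switching to `Learner()` would not by itself add `.blend`. Helper methods like this are usually attached to the `Learner` class at import time by assignment (fastai v1 exposes `learn.mixup()` through the same kind of assignment). Assuming the blended augmentations repo follows that pattern, the error simply means the module that does the assignment has not been imported. The classes and functions below are minimal stand-ins written for illustration, not fastai's or the repo's actual code; `BlendCallback` is a hypothetical name.

```python
# Minimal stand-in for fastai's Learner; the real class is not needed to show the mechanism.
class Learner:
    def __init__(self, data, model):
        self.data, self.model = data, model
        self.callback_fns = []

def cnn_learner(data, base_arch):
    # Simplification: like fastai v1, the factory just returns a plain Learner.
    return Learner(data, base_arch)

learn = cnn_learner("data", "resnext101")
print(hasattr(learn, "blend"))  # False: nothing has attached `blend` yet

# What an extension module typically does when it is imported (hypothetical `blend`).
def blend(learn, **kwargs):
    learn.callback_fns.append(("BlendCallback", kwargs))
    return learn  # returning the learner is what allows chaining after cnn_learner(...)

Learner.blend = blend  # monkey-patch: every Learner, existing or new, now has .blend

print(hasattr(learn, "blend"))  # True, even for the learner created before the patch
learn = cnn_learner("data", "resnext101").blend(alpha=0.4)
```

In practice this would mean importing the repo's module (after `from fastai.vision import *`) before calling `.blend()` or `.ricap()`, whichever factory created the learner.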

Thanks and cheers.

I realise this was a long time ago, but in case you are still interested: I have just pushed a version that is compatible with `get_transforms(xtra_tfms=...)` to GitHub here. I have tested it on my end, but not extensively, so there may be some issues I am not yet aware of.

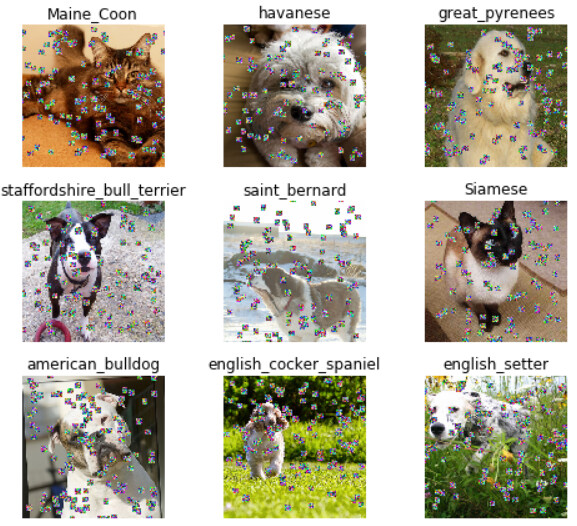

Secondly, it is currently very slow when sprinkles are added to each batch. I wanted to get it working first, but I will try to optimise it next if I can.
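One common way to speed up a per-batch augmentation like this is to build the mask for the whole batch at once with broadcasting, rather than looping over images and squares in Python. The sketch below is a minimal, hypothetical illustration of cutout-style "sprinkles" (random square patches set to a fill value) on a `(B, C, H, W)` array; `sprinkle_batch`, `n_squares`, `size` and `fill` are names made up for this example, it is not the repo's implementation, and it does not include the "progressive" schedule.

```python
import numpy as np

def sprinkle_batch(x, n_squares=10, size=8, fill=0.0, rng=None):
    """Set `n_squares` random size x size squares to `fill` in every image of a batch.

    x: array of shape (B, C, H, W). Returns a new array; x is not modified.
    """
    rng = np.random.default_rng() if rng is None else rng
    b, _, h, w = x.shape
    # Random top-left corners, one independent set per image: shape (B, n_squares).
    ys = rng.integers(0, h - size + 1, size=(b, n_squares))
    xs = rng.integers(0, w - size + 1, size=(b, n_squares))
    rows, cols = np.arange(h), np.arange(w)
    # (B, n, H) and (B, n, W): which rows / columns each square covers.
    in_rows = (rows >= ys[..., None]) & (rows < ys[..., None] + size)
    in_cols = (cols >= xs[..., None]) & (cols < xs[..., None] + size)
    # Outer product gives (B, n, H, W); .any merges all squares of one image into (B, H, W).
    mask = (in_rows[..., :, None] & in_cols[..., None, :]).any(axis=1)
    # mask[:, None] is (B, 1, H, W), so the same squares are applied to every channel.
    return np.where(mask[:, None], fill, x)

batch = np.ones((4, 3, 64, 64), dtype=np.float32)
out = sprinkle_batch(batch, n_squares=5, size=8, rng=np.random.default_rng(0))
print(out.shape)  # (4, 3, 64, 64)
```

The same broadcasting pattern carries over to PyTorch tensors, which would let the masking run on the GPU alongside the rest of the batch. The intermediate `(B, n, H, W)` boolean array grows with the number of squares, so very large `n_squares` would trade memory for speed.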

Thanks for sharing, and for letting me know even though this was a while ago. I will keep it in mind for future computer vision experiments!

@rwightman Do you use this data augmentation technique in your experiments? And in your experience, what works well in terms of data augmentation?

@LessW2020 Thank you so much for sharing such valuable information about the Progressive Sprinkles data augmentation. Your observations are interesting, but they relate to image data. For time-series data, which technique (Progressive Sprinkles, mixup, CutMix, or another newer method) would work best? I'm also looking for the underlying reasons why one of them might perform best.
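This does not answer which technique works best, which would depend on the dataset and would need to be measured. It may help to see how mixup and CutMix translate to series, though. The sketch below is a minimal illustration assuming batches of shape `(B, C, L)` with one-hot labels; `mixup_1d` and `cutmix_1d` are hypothetical helpers, and the 1D CutMix variant (pasting one contiguous time window instead of an image patch) is an adaptation, not the original image formulation.

```python
import numpy as np

def mixup_1d(x, y_onehot, alpha=0.4, rng=None):
    """Mixup for series x of shape (B, C, L) with one-hot labels of shape (B, K)."""
    rng = np.random.default_rng() if rng is None else rng
    lam = rng.beta(alpha, alpha)        # mixing weight, one per batch
    perm = rng.permutation(len(x))      # partner sample for each item
    # Every time step becomes a weighted average of two series.
    x_mix = lam * x + (1 - lam) * x[perm]
    y_mix = lam * y_onehot + (1 - lam) * y_onehot[perm]
    return x_mix, y_mix

def cutmix_1d(x, y_onehot, alpha=1.0, rng=None):
    """CutMix adapted to series: replace one contiguous time window with a partner's."""
    rng = np.random.default_rng() if rng is None else rng
    lam = rng.beta(alpha, alpha)
    perm = rng.permutation(len(x))
    seq_len = x.shape[-1]
    cut = int(round(seq_len * (1 - lam)))          # window length taken from the partner
    start = rng.integers(0, seq_len - cut + 1)     # same window position for the whole batch
    x_mix = x.copy()
    x_mix[..., start:start + cut] = x[perm][..., start:start + cut]
    kept = 1 - cut / seq_len                       # label weight = fraction of own series kept
    y_mix = kept * y_onehot + (1 - kept) * y_onehot[perm]
    return x_mix, y_mix

rng = np.random.default_rng(0)
x = rng.normal(size=(8, 2, 100))
y = np.eye(3)[rng.integers(0, 3, size=8)]
xm, ym = mixup_1d(x, y, rng=rng)
xc, yc = cutmix_1d(x, y, rng=rng)
```

One difference that may matter when comparing them: mixup changes the amplitude at every time step, while the CutMix variant leaves each segment's local temporal pattern intact and only introduces a boundary where the windows meet. Sprinkles would correspond to zeroing short random windows, closer to cutout than to either of these.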