Hello All,

I am trying to recreate the IMDB example from lesson 3.

I am using the IMDB_SAMPLE dataset.

When trying to load the csv file using:

data_lm = (TextList.from_csv(path, 'texts.csv', cols='text')
           .split_from_df(col=2)
           .label_from_df(cols=0)
           .databunch(bs=bs))

…

after executing:

learn.lr_find()

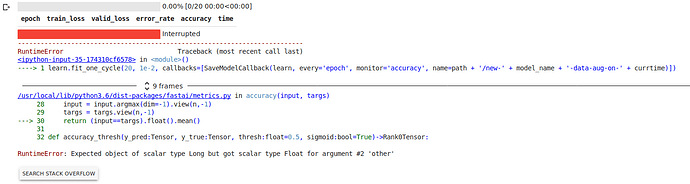

I get the following error:

Expected input batch_size (81792) to match target batch_size (48).
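
As a quick sanity check on those two numbers (plain Python arithmetic, nothing from fastai), the input batch size turns out to be an exact multiple of the target batch size. My assumption, and it is only a guess, is that this means the model emits many predictions for each labelled row, but I have not confirmed that.

```python
# Numbers copied from the error message above.
input_batch_size = 81792
target_batch_size = 48

# divmod returns (quotient, remainder).
ratio, remainder = divmod(input_batch_size, target_batch_size)
print(ratio, remainder)  # 1704 0
```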

In addition, all GPU memory gets used up and the server gives a warning.

When using:

data = TextDataBunch.from_csv(path, 'texts.csv', bs=bs, num_workers=0)

After:

learn.lr_find()

I get the following error:

CUDA out of memory. Tried to allocate 2.68 GiB (GPU 0; 11.17 GiB total capacity; 8.94 GiB already allocated; 1.80 GiB free; 123.06 MiB cached)
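
Reading the figures in that message as plain numbers (a small sketch; the variable names are mine, the values come from the error text), the requested allocation is larger than the memory reported as free:

```python
# Values in GiB, copied from the CUDA error message above.
requested_gib = 2.68
free_gib = 1.80

# How much more memory the allocation needed than was free.
shortfall_gib = requested_gib - free_gib
print(f"{shortfall_gib:.2f}")  # 0.88
```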

I think this error is related to the error above.

When using the full IMDB dataset, which consists of folders instead of a CSV file, and loading it using:

data_lm = (TextList.from_folder(path)
           .filter_by_folder(include=['train', 'test', 'unsup'])
           .random_split_by_pct(0.1)
           .label_for_lm()
           .databunch(bs=bs))

and then calling learn.lr_find(), everything works fine.

I’ve included a link to a notebook that demonstrates the problem:

https://colab.research.google.com/gist/jj321/c71bade2e999c1806a085be965096f9a/imdb.ipynb

The sections “Option 1” and “Option 2” give an error, while the section “This is ok” runs without problems.

I’ve also tried other CSV datasets, to no avail.

I don’t know if it is relevant, but I am using Google Colab.

Am I doing something wrong, or is this a bug in the from_csv function?

Kind regards,

Jaap