Hi everyone,

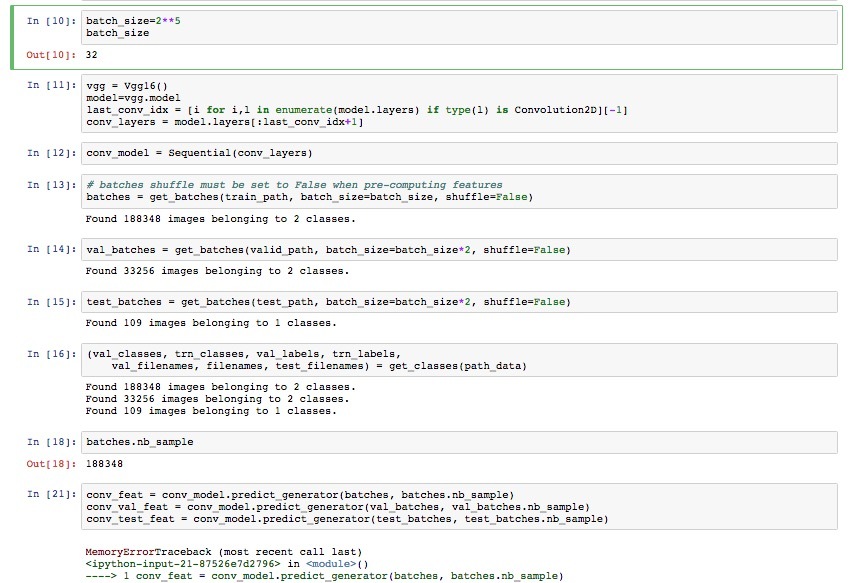

I’m trying to solve a problem similar to statefarm using the same approach of fine-tuning VGG. I have a lot of data (binary prediction with 100k images in each class), and I’m trying to precompute the outputs of the convolutional layers like Jeremy does in the statefarm notebook.

This works fine when I use a 10% sample of my dataset, but on the full dataset I get an immediate MemoryError when I try to run this line:

    conv_feat = conv_model.predict_generator(batches, batches.nb_sample)

My code is copied pretty much verbatim from statefarm.ipynb, but I can’t figure out how to fix this darn MemoryError. My only guess is that I have too much data, but I was under the impression that the generator processes the data in batches?!
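
For what it’s worth, here is a rough back-of-the-envelope check of the "too much data" suspicion. It is only a sketch under assumptions I haven’t verified against my own model: that `conv_model` ends at VGG16’s last convolutional layer, so each image yields a 512×14×14 float32 feature map, and that `predict_generator` returns all predictions as one NumPy array (so the generator batches only the input side, not the result). The helper name `feature_array_gib` is made up for illustration.

```python
import numpy as np


def feature_array_gib(n_images, feature_shape=(512, 14, 14), dtype=np.float32):
    """Estimate the size in GiB of one array holding a feature map per image.

    feature_shape is an assumption (VGG16 last conv layer on 224x224 input);
    replace it with conv_model.output_shape[1:] for the real model.
    """
    bytes_per_image = int(np.prod(feature_shape)) * np.dtype(dtype).itemsize
    return n_images * bytes_per_image / float(2 ** 30)


# 100k images per class, two classes
full_gib = feature_array_gib(200000)
# the 10% sample that ran without problems
sample_gib = feature_array_gib(20000)

print("full dataset: %.1f GiB" % full_gib)
print("10%% sample:   %.1f GiB" % sample_gib)
```

If those assumptions hold, the full result would be about ten times larger than the sample’s and would not fit in RAM on most machines, which would explain why the error shows up immediately rather than partway through.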

Please help