OK, now please copy and paste the output of losses[:3] and losses2[:3]. Thanks!

print(losses[:3])

tensor([6.5066, 6.1514, 5.9205])

print(losses2[:3])

tensor([6.5066, 6.1514, 5.9205])

OK, your losses have the same shape as the planet dataset’s (btw, you don’t need to provide data.c).

plot_multi_top_losses() should work out of the box. Are you sure you are using fastai 1.0.42 (or newer)?
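A quick way to answer the version question is to print the installed version and compare it with 1.0.42. This is only a minimal sketch: `version_tuple` and `is_at_least` are hypothetical helper names, not part of fastai, and the only fastai attribute used is `fastai.__version__`.

```python
def version_tuple(v):
    """Turn a dotted version string such as '1.0.42' into (1, 0, 42)."""
    parts = []
    for piece in v.split("."):
        # Keep only the digits so suffixes like 'dev0' do not break parsing.
        digits = "".join(ch for ch in piece if ch.isdigit())
        parts.append(int(digits) if digits else 0)
    return tuple(parts)


def is_at_least(installed, minimum="1.0.42"):
    """True if the installed version is the minimum version or newer."""
    return version_tuple(installed) >= version_tuple(minimum)


try:
    import fastai
    print(fastai.__version__, "recent enough:", is_at_least(fastai.__version__))
except ImportError:
    print("fastai is not installed in this environment")
```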

If you do have 1.0.42 or newer, please tell me where to download the dataset. I’ll run some more extensive experiments and keep you posted.

Dear Andrea,

Thank you so much for your contribution!

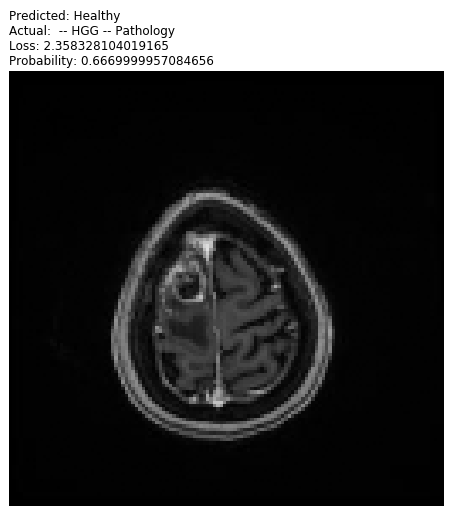

If I may, I need your help. I’ve tried your function and followed your instructions, but I was still unable to plot my top losses. The error I received said that RAM was out of memory. Any ideas?

Take care,

Sapir

You are welcome. Can you post a screenshot of the error? Apart from this, can you check the memory usage at the moment the error occurs? Do you have a swap partition? More generally, what is your hardware setup?

Thanks.

Thank you, @SapirGershov.

At line 152 the method tries to gather all the information it collected by using the ClassificationInterpretation API, which in turn calls PyTorch internals. I have never encountered such an error, but it doesn’t seem to be related to a GPU memory shortage. More probably, it’s a RAM problem.

How much RAM is installed in your PC? Can you try to reproduce the error on a machine with more RAM?
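For anyone who wants to report RAM and swap figures, here is a rough sketch that reads them from `/proc/meminfo`. It assumes a Linux machine (the file does not exist elsewhere), and `parse_meminfo` and `memory_summary` are hypothetical helper names introduced only for this example.

```python
import os


def parse_meminfo(text):
    """Parse the contents of /proc/meminfo into a dict of integers (most are in kB)."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields and fields[0].isdigit():
            info[key.strip()] = int(fields[0])
    return info


def memory_summary(info):
    """Return (available RAM, total RAM, free swap, total swap) in GiB."""
    kb_per_gib = 1024 ** 2
    keys = ("MemAvailable", "MemTotal", "SwapFree", "SwapTotal")
    return tuple(info.get(k, 0) / kb_per_gib for k in keys)


if os.path.exists("/proc/meminfo"):
    with open("/proc/meminfo") as f:
        avail, total, swap_free, swap_total = memory_summary(parse_meminfo(f.read()))
    print(f"RAM:  {avail:.1f} of {total:.1f} GiB available")
    print(f"Swap: {swap_free:.1f} of {swap_total:.1f} GiB free")
else:
    print("/proc/meminfo not found; this sketch only works on Linux")
```

Running it right before the failing call, and again from a second terminal while the call is running, should show whether available RAM really drops to zero.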

Dear Andrea

Following my previous emails, I’m trying to run plot_multi_top_losses, now with fastai v1.0.48.

Running:

data = (src.transform(tfms, size=256)
        .databunch().normalize(imagenet_stats))

learn.data = data

data.train_ds[0][0].shape

.

.

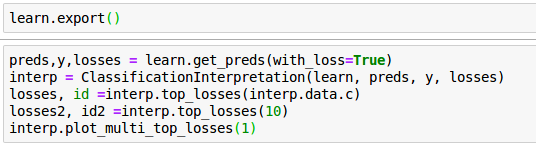

learn.export()

preds,y,losses = learn.get_preds(with_loss=True)

interp = ClassificationInterpretation(learn, preds, y, losses)

losses, id = interp.top_losses(interp.data.c)

losses2, id2 = interp.top_losses(10)

interp.plot_multi_top_losses(3)

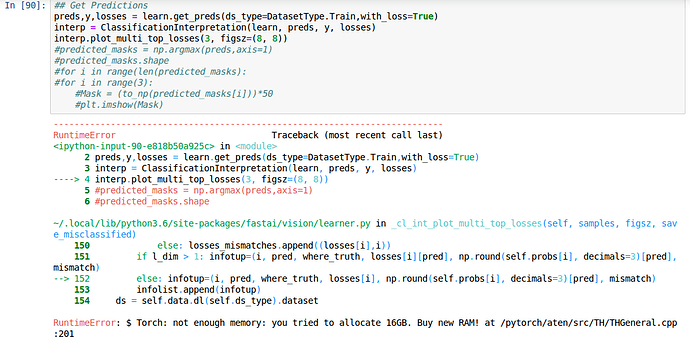

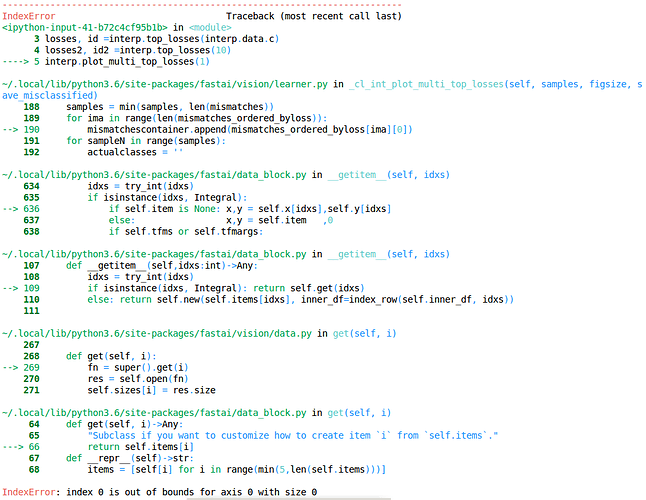

I get the following error message

Is there a problem with my input, or is it related to the software update? And by the way, is there now any option to plot a multi-label confusion matrix?

Many thanks

Moran

My losses have the same shape as the planet dataset’s.

The preds and y variables are the same size.

Do you have any idea why it went wrong or how to debug the problem?

Thanks

Moran

Sorry for the delayed response, I’m having a bad flu.

Now, as you may see, the problem starts at line 189 as it constructs the list of mismatched examples.

The next instruction refers to the fastai data block API, so my hypothesis right now is that they changed it a bit, and that broke plot_multi_top_losses().

But I could very well be wrong. As soon as I recover just a bit, hopefully within a couple of days, I’ll try and address the problem.

Thanks!

Sorry I bothered you

Thanks a lot, and get well soon!

Not at all, thanks for helping me detect bugs!

Hi

Hope you are feeling better!

And I think it works now.

The problem was that I was testing on data from a very simple task.

This resulted in only 1 misclassified sample,

whereas I tried to plot the 3 top losses:

interp.plot_multi_top_losses(3)

Passing 1 instead of 3 solved the problem.
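To avoid asking for more samples than there are misclassified ones, the count can be checked beforehand. The sketch below is plain Python, not fastai code. It assumes that a sample counts as misclassified when its top-scoring class is not among its true labels, which may not match exactly what plot_multi_top_losses does internally. `count_mismatches` and `safe_sample_count` are hypothetical names.

```python
def count_mismatches(preds, targets):
    """Count samples whose top-scoring class is not one of the true labels.

    preds:   list of per-class score lists, one per sample
    targets: list of multi-hot label lists (1 = class present), same shape
    """
    count = 0
    for scores, labels in zip(preds, targets):
        top_class = max(range(len(scores)), key=scores.__getitem__)
        if labels[top_class] == 0:
            count += 1
    return count


def safe_sample_count(requested, preds, targets):
    """Never request more samples than there are misclassified ones."""
    return min(requested, count_mismatches(preds, targets))


# Toy data: only the second sample has its top class outside the true labels.
preds = [[0.9, 0.1, 0.2], [0.2, 0.7, 0.1], [0.1, 0.2, 0.8]]
targets = [[1, 0, 0], [1, 0, 0], [0, 0, 1]]
print(count_mismatches(preds, targets))      # 1
print(safe_sample_count(3, preds, targets))  # 1
```

With real data, preds and y from learn.get_preds could be converted with .tolist() and the result passed as the argument to interp.plot_multi_top_losses.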

Regarding the confusion matrix:

Is there any way to print or plot it as a multi-label confusion matrix?

Many thanks again for the help, and I apologize for the many questions.

I’m working on medical image analysis and I’m really excited that things work so well!

Ah, perfect!

It has yet to be implemented. It wouldn’t be too difficult to implement, having a bit of time at hand, though…
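In the meantime, one possible shape for such a feature is a 2x2 matrix per class. The following is only a sketch in plain Python, not a fastai API: `multilabel_confusion` is a hypothetical name, the 0.5 threshold is an assumption, and the [[tn, fp], [fn, tp]] layout is borrowed from scikit-learn’s multilabel_confusion_matrix.

```python
def multilabel_confusion(preds, targets, thresh=0.5):
    """Return one [[tn, fp], [fn, tp]] matrix per class.

    preds:   list of per-class probability lists, one per sample
    targets: list of multi-hot label lists (1 = class present)
    thresh:  assumed cut-off; a class counts as predicted at or above it
    """
    n_classes = len(targets[0])
    matrices = [[[0, 0], [0, 0]] for _ in range(n_classes)]
    for probs, labels in zip(preds, targets):
        for c in range(n_classes):
            predicted = int(probs[c] >= thresh)
            actual = int(labels[c] > 0)
            # Row index = actual label, column index = predicted label.
            matrices[c][actual][predicted] += 1
    return matrices


# Toy data: 3 samples, 2 classes.
preds = [[0.9, 0.2], [0.6, 0.7], [0.1, 0.8]]
targets = [[1, 0], [0, 1], [0, 1]]
for c, m in enumerate(multilabel_confusion(preds, targets)):
    print(f"class {c}: {m}")
```

Each per-class matrix could then be plotted with matplotlib in a grid, one small heatmap per label.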

Hi!

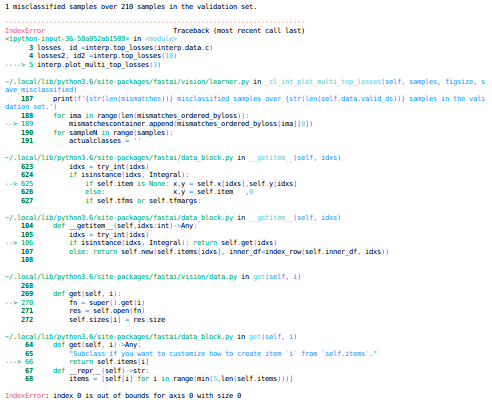

I upgraded fastai to 1.0.52, and now I’m having problems again with interp.plot_multi_top_losses(1).

Any chance it’s not compatible with v1.0.52?

Thanks!

Moran

Hi! I’ll check as soon as possible. Meanwhile, you could try and run the planet notebook with 1.0.52 as a rapid check.

Hi Andrea,

Is there any way I can delete images from interp.plot_multi_top_losses?

Thanks in advance.

Hi! What do you mean exactly by deleting?

Hi Andrea,

Thank you for implementing plot_multi_top_losses in vision. I have a question regarding the output of this method. It returns prediction/actual/loss/prob. What is this prob?

Is it the probability of the predicted class, or the probability of the actual class in the prediction?

According to the documentation here https://docs.fast.ai/vision.learner.html#_cl_int_plot_multi_top_losses it seems to be the probability of the predicted class, but from the results I get, it looks like the probability of the actual class in the prediction (as in the plot_top_losses method). Could you please clarify?
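To make the two candidates concrete, here is a small sketch that computes both numbers for a single sample. It does not settle which one fastai displays. `predicted_vs_actual_prob` is a hypothetical helper, and taking the highest probability among the true classes is an assumption made for the multi-label case, where a sample can have several true classes.

```python
def predicted_vs_actual_prob(probs, labels):
    """Return (probability of the top predicted class,
               highest probability among the true classes, or None)."""
    pred_class = max(range(len(probs)), key=probs.__getitem__)
    true_probs = [p for p, y in zip(probs, labels) if y > 0]
    actual_prob = max(true_probs) if true_probs else None
    return probs[pred_class], actual_prob


# Toy sample: the model favours class 0, but the true label is class 1.
probs = [0.70, 0.25, 0.05]
labels = [0, 1, 0]
print(predicted_vs_actual_prob(probs, labels))  # (0.7, 0.25)
```

Comparing both values with the prob shown in the plot title for one or two samples should reveal which one is being reported.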