In lesson 2 of the Intro to ML course, the OOB (out-of-bag) score is introduced.

Initially, to get a prediction for one sample in the validation set, we took the predictions from all the trees and averaged them. This validation set was held out and not used for training.
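A small sketch of that averaging, assuming scikit-learn's `RandomForestRegressor` and synthetic data from `make_regression` in place of the course dataset. The fitted trees are available in `m.estimators_`, and averaging their predictions on the held-out rows should reproduce `m.predict`.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import train_test_split

# Synthetic data stands in for the course dataset (assumption for illustration)
X, y = make_regression(n_samples=1000, n_features=10, noise=10.0, random_state=0)
X_train, X_valid, y_train, y_valid = train_test_split(X, y, test_size=0.2, random_state=0)

m = RandomForestRegressor(n_estimators=40, random_state=0)
m.fit(X_train, y_train)

# One row per tree, one column per held-out validation sample
tree_preds = np.stack([t.predict(X_valid) for t in m.estimators_])

# Averaging across the trees gives the forest's prediction for each sample
avg_preds = tree_preds.mean(axis=0)
print(np.allclose(avg_preds, m.predict(X_valid)))
```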

When we have a smaller dataset, it may not be feasible to keep a separate validation set.

So, for each tree, we take all the rows that were not used to train that tree and put them into a separate set. Doing this for every tree gives each tree its own validation set.
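A minimal sketch of this per-tree split, assuming plain bootstrap sampling (rows drawn with replacement, as many draws as there are rows) and made-up sizes `n_rows` and `n_trees`. Each tree's out-of-bag rows are simply the indices that were never drawn for it. Under bootstrapping, a given row is missed with probability (1 - 1/n)^n, which approaches 1/e ≈ 36.8% for large n, so each tree's own validation set holds roughly a third of the data.

```python
import numpy as np

rng = np.random.default_rng(42)
n_rows, n_trees = 1000, 5  # hypothetical sizes for illustration

oob_sets = []
for _ in range(n_trees):
    # Bootstrap sample: n_rows indices drawn with replacement
    in_bag = rng.integers(0, n_rows, size=n_rows)
    # Rows never drawn form this tree's out-of-bag (validation) set
    oob_mask = np.ones(n_rows, dtype=bool)
    oob_mask[in_bag] = False
    oob_sets.append(np.flatnonzero(oob_mask))

for i, oob in enumerate(oob_sets):
    print(f"tree {i}: {len(oob)} OOB rows ({len(oob) / n_rows:.1%})")
```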

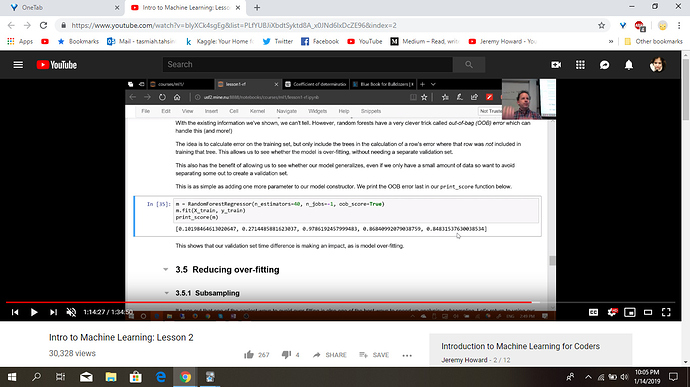

What we could do is recognize that in our first tree, some of the rows did not get used for training. We could pass those unused rows through the first tree and treat them as its validation set. For the second tree, we could pass through the rows that were not used for the second tree, and so on. Effectively, we would have a different validation set for each tree. To calculate our prediction for a row, we would average the predictions of all the trees for which that row was not used for training. If you have hundreds of trees, it is very likely that every row will appear many times in these out-of-bag samples. You can then calculate RMSE, R², etc. on these out-of-bag predictions.
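A minimal sketch of the out-of-bag procedure just described, assuming bagged scikit-learn `DecisionTreeRegressor`s on synthetic data. There is no per-split feature subsampling here, so it is a simplification of a full random forest. Each tree predicts only the rows it never saw, and each row's OOB prediction is the average over those trees. The names `pred_sum`, `pred_count` and `covered` are hypothetical.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.tree import DecisionTreeRegressor

# Synthetic data (assumption for illustration)
X, y = make_regression(n_samples=500, n_features=10, noise=10.0, random_state=0)
n_rows, n_trees = len(X), 100
rng = np.random.default_rng(0)

pred_sum = np.zeros(n_rows)    # running sum of OOB predictions per row
pred_count = np.zeros(n_rows)  # number of trees for which the row was OOB

for _ in range(n_trees):
    in_bag = rng.integers(0, n_rows, size=n_rows)  # bootstrap sample
    oob_mask = np.ones(n_rows, dtype=bool)
    oob_mask[in_bag] = False
    tree = DecisionTreeRegressor(random_state=0).fit(X[in_bag], y[in_bag])
    if oob_mask.any():
        # Pass only this tree's unused rows through it
        pred_sum[oob_mask] += tree.predict(X[oob_mask])
        pred_count[oob_mask] += 1

# Average, per row, only the trees that did not train on that row
covered = pred_count > 0
oob_pred = pred_sum[covered] / pred_count[covered]
rmse = np.sqrt(mean_squared_error(y[covered], oob_pred))
r2 = r2_score(y[covered], oob_pred)
print(f"rows covered: {covered.sum()}/{n_rows}, OOB RMSE: {rmse:.2f}, OOB R^2: {r2:.3f}")
```

In practice scikit-learn can do this bookkeeping for you: `RandomForestRegressor(oob_score=True)` exposes the OOB R² as `oob_score_` and the per-row averaged predictions as `oob_prediction_`.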

So are we combining these validation sets into one larger validation set, and then, for each sample in that set, using only the trees that didn't train on that sample and averaging their predictions?

Or are we going over all the samples in the training set and, for each sample, taking the predictions of the trees that did not use it for training and averaging them?

Which dataset is being used for prediction?

@jeremy Does it even sound right?