Well, I did test that. On WSL 2, I set num_workers=-1 on a Ryzen 7 3700X, and the linear regression from lesson 2 or 3 of the fastai 0.7.0 ML course took about a minute and a half.

Maybe I am missing something that accounts for my relatively slow GPU performance. In the instructions up top, under "Setup Ubuntu – fastai2", item 1 links to a forum post, but the link seems to be broken (it gives an "Oops! That page doesn't exist or is private." message). Is there an alternative?

On Friday this should be unlocked for everyone. Until then, perhaps you might want to reiterate the information, @FourMoBro?

@muellerzr Rather than reiterate, maybe you can move the thread from “Part1 2020” to “Users”?

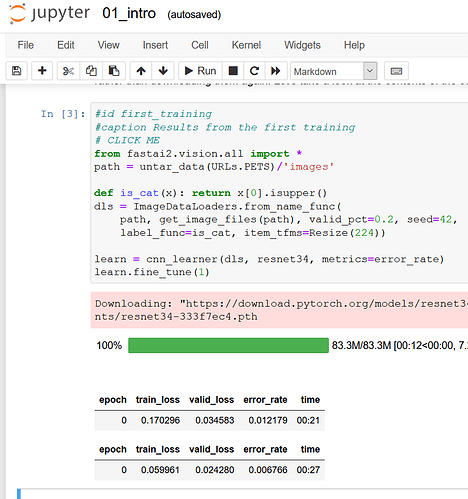

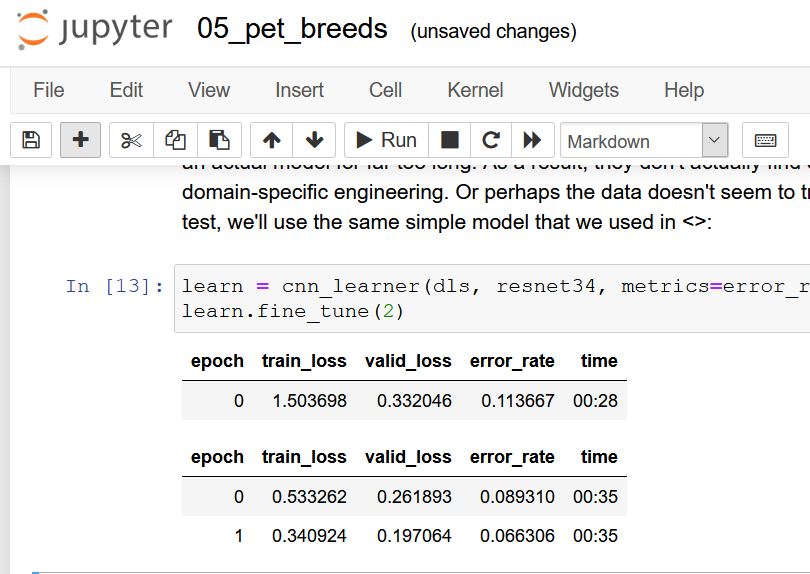

With the formal release of fastai version 2 and version 4 of the course today, I decided to add a new environment with the updated libraries. I followed my general steps above to create an Ubuntu 20.04 setup within WSL2, then installed fastai using the instructions on GitHub. The resulting GPU performance is more than acceptable!

I don't know if other people have had this experience, but on Windows I can only set num_workers to 0; otherwise I get exceptions. As a result, I usually have difficulty saturating my GPU: the CPU seems to be the bottleneck because not all CPU cores are used.

In WSL2 with the GPU enabled, I get worse GPU performance, but since I can use num_workers=8, overall performance ends up better in Linux than in Windows…

Fitting a VAE model:

- Windows + num_workers=0: 1 min 40 s per epoch
- Linux + num_workers=0: 2 min per epoch
- Linux + num_workers=8: 58 s per epoch

Because Linux can parallelize data loading with num_workers, it ends up much faster even though the GPU is 15% slower.
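To put the trade-off in numbers, the sketch below converts the three reported per-epoch times to seconds and compares each one with the Windows baseline. The values are the ones listed above; the dictionary keys are just labels.

```python
# Per-epoch times for fitting the VAE, as reported above, in seconds.
epoch_seconds = {
    "Windows, num_workers=0": 100,  # 1 min 40 s
    "Linux, num_workers=0": 120,    # 2 min
    "Linux, num_workers=8": 58,     # 58 s
}

baseline = epoch_seconds["Windows, num_workers=0"]

for setup, seconds in epoch_seconds.items():
    # A ratio above 1 means this setup finishes an epoch faster than Windows.
    speedup = baseline / seconds
    print(f"{setup}: {seconds} s/epoch, {speedup:.2f}x vs Windows")
```

With num_workers=0 on both, the Linux epoch takes 20 s longer (120 s vs 100 s), while going to num_workers=8 more than halves the Linux epoch time (120 s to 58 s), which is consistent with data loading rather than the GPU being the bottleneck.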

Has this bug with num_workers been fixed in Windows? When I try to increase it, I still get an exception.

Thanks,

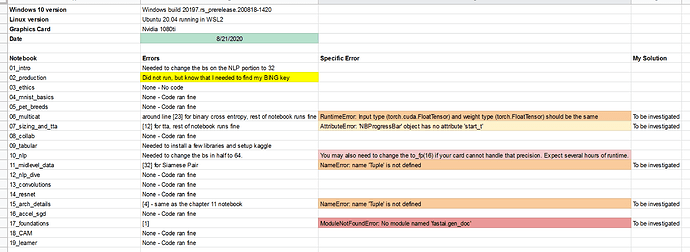

Using the notebooks released on 8/21, I am happy to say that the code in the notebooks ran fine, as is, with this WSL2 setup. There were a couple of exceptions where a library was missing from my conda environment or I needed to set up Kaggle again, but the point is that WSL2 is more than capable of running the course notebooks. In the image above, I noted whether I changed any of the provided notebook code (so far just the batch size for the NLP work) or encountered any errors. The errors may have been resolved in the forums, but I have yet to investigate or implement those fixes. I just like to run the notebooks to get any downloaded data out of the way and see if everything works before tackling the subject of the day.

Are you able to use JIT-compiled functions in WSL2?

It seems that if I use Swish from fastai, I get the following exception in WSL2 but not in Windows:

RuntimeError: The following operation failed in the TorchScript interpreter.

Traceback of TorchScript (most recent call last):

RuntimeError: CUDA driver error: PTX JIT compiler library not found

Just saw this:

- PTX JIT is not supported (so PTX code will not be loaded from CUDA binaries for runtime compilation).

from here: https://docs.nvidia.com/cuda/wsl-user-guide/index.html#known-limitations
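For context, Swish itself is only element-wise math, swish(x) = x · sigmoid(x); the error above is raised by the TorchScript interpreter when the CUDA driver is asked to JIT-compile PTX. The scalar sketch below is plain Python, not fastai's implementation, and only illustrates the formula. A possible workaround, which is an assumption not tested in this thread, would be an ordinary non-scripted PyTorch module whose forward returns x * torch.sigmoid(x).

```python
import math

def sigmoid(x: float) -> float:
    # Logistic function, written to avoid overflow for large negative x.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def swish(x: float) -> float:
    # Swish(x) = x * sigmoid(x): nothing here needs a JIT compiler.
    return x * sigmoid(x)

for value in (-2.0, 0.0, 2.0):
    print(value, round(swish(value), 4))
```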

So today I loaded the latest updates to the OS. I am now at:

- Windows 10 Build 20211.rs_prerelease.200904-1619
- WSL kernel 4.19.18
- NVIDIA driver 460.15, released on 9/2

From the NVIDIA site, this driver is supposed to add "Support for PTX JIT. This will allow CUDA developers to run PTX code on WSL2. Note that unlike Native Linux, in WSL we load PTX JIT from the Driver Store directly, so you will not find the library in your lib folders." So, @etremblay, you may want to upgrade and give it a shot.
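If you are unsure whether your driver is new enough, a simple version comparison can tell you. The sketch below assumes, based only on the release note quoted above, that 460.15 is the first WSL2 driver with PTX JIT support; supports_wsl_ptx_jit is a hypothetical helper, and the version string would come from wherever you read your driver version (for example, nvidia-smi on the Windows side).

```python
from typing import Tuple

# Assumption: per the NVIDIA note quoted above, PTX JIT on WSL2 arrives with driver 460.15.
MIN_PTX_JIT_DRIVER = (460, 15)

def parse_driver_version(version: str) -> Tuple[int, ...]:
    """Turn a string such as '460.20' into a comparable tuple like (460, 20)."""
    return tuple(int(part) for part in version.strip().split("."))

def supports_wsl_ptx_jit(version: str) -> bool:
    """Hypothetical helper: True if the driver is at least MIN_PTX_JIT_DRIVER."""
    return parse_driver_version(version) >= MIN_PTX_JIT_DRIVER

# An older version string, the driver from this post, and the later 460.20.
for v in ("455.41", "460.15", "460.20"):
    print(v, supports_wsl_ptx_jit(v))
```

Comparing tuples of integers rather than the raw strings avoids surprises such as "460.9" sorting after "460.15".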

Re-running the same notebooks from 3 weeks ago, I notice a gain of a few seconds (20 s down to 18 s) on the first image classifier, which is 10%. 10% seems like a big boost considering it's just a few seconds. So I continued on to the text classifier. My time for the first epoch dropped from over 10 min to under 8 min, which is roughly a 20% gain. But running the NLP notebook, my first epoch was 13 s slower using this code. That is 0.7% slower. A more controlled experiment could yield better times, but the notebooks run, as is, without complaints.
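The percentages above are plain relative changes in run time. A minimal helper for checking them, using the rounded times reported in this post (10 min and 8 min are treated as exactly 600 s and 480 s, so the second figure is approximate):

```python
def percent_change(before: float, after: float) -> float:
    """Relative change in run time, in percent; negative means faster."""
    return (after - before) * 100.0 / before

# First image classifier: 20 s down to 18 s.
print(percent_change(20, 18))
# Text classifier, first epoch: roughly 10 min down to 8 min.
print(percent_change(600, 480))
```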

In summary, I couldn't be happier with WSL2 and this setup, although I will benchmark a 30-series card in the coming weeks, provided they don't sell out!!!

Thanks for keeping us posted. It’s valuable information.

Have you tried a multi-gpu setup with WSL2?

Warning!

Please be aware that Windows Insider builds, including 20226 and the more recent 20231, break WSL2 and CUDA! Do not use them! See the GitHub issue "WSL2 & CUDA does not work [v20226]".

You should not update to Windows 10 builds after 20221!

Updates:

- Build 20221 is no longer available from the Windows Insider website. If you have already downloaded an ISO, it might be helpful if you could let people know and share it. If you know where to get an earlier version, please let people know!

- As of 11-October, the current build, 20231, does not fix the problem.

- NVIDIA has not updated its guidance yet. As of 11-October, it says "CUDA on WSL2 is not to be used with the latest Microsoft Windows 10 Insider Preview Build 20226 due to known issues. Please revert to an older build 20221 to use CUDA on WSL2".

If you are going to try to use WSL2 and CUDA in early October 2020, you should check whether the issue has been resolved. If it hasn't, use Windows 10 Build 20221.

I have successfully installed Windows 10 Build 20221 after a frustrating experience trying to diagnose the problem with Build 20226.

Having moved to the older build, I’m happy to say that Docker images on WSL2 can see and use the GPU.

NOT fixed in 20231 (I just tried it), so stay on 20221 for now.

EDIT: CUDA drivers are up to version 460.20 if you want to download them.

Supposedly fixed in 20236 per https://blogs.windows.com/windows-insider/2020/10/14/announcing-windows-10-insider-preview-build-20236/

Downloading now and will let the forums know when complete.

EDIT: It seems to be working. Upgrade away if you want to!

Hi, have you encountered any problems with the network connection on WSL2? My current speed in WSL2 (Windows build 20251) is 30 kb/s, while the real speed on Windows 10 is 12 MB/s. Before, when I was running WSL2 on Windows build 19042, I was able to solve this by turning off 'Large Send Offload' in the vEthernet adapter configuration. However, the vEthernet adapter (which is created automatically for WSL2) is not present when running WSL2 on build 20251, so I have not found any viable way to solve this issue. I think I could turn off Large Send Offload when using an Ethernet connection (I was using Wi-Fi the whole time).

P.S. thank you for the guide.

It seems WSL2 can connect to the internet only via Ethernet (not Wi-Fi).

@FourMoBro As of today, how is multi-GPU training under WSL2? Can you train using PyTorch DDP? It seems that the issue in Windows is the lack of NCCL support. Does WSL2 address this or work around it?

I have not tried to do multi-GPU within WSL2. I would be more than happy to test it if someone provides the code in notebook form using a fastai dataset.

How can one minimize the amount of GPU compute and memory that other tasks on Windows are taking? For example, tasks like Desktop Window Manager and Windows Driver Foundation are taking 6-7% of GPU utilization along with roughly 1,000 MB of memory.

Thank you very much for sharing. fastai2 finally runs on my laptop, but I needed a few additional steps to make it work:

1. pip install fastai --upgrade
2. pip install nbdev
3. Change fastai2 to fastai in the code
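Step 3 can be automated. The sketch below assumes your notebooks are ordinary .ipynb JSON files and that every whole-word occurrence of fastai2 in code cells should become fastai; rename_fastai2 and rename_in_notebook are hypothetical helpers, not part of fastai or nbdev. Keep a backup, since the notebook is rewritten in place.

```python
import json
import re

# Matches the old package name as a whole word, e.g. "from fastai2.vision.all import *".
FASTAI2 = re.compile(r"\bfastai2\b")

def rename_fastai2(source: str) -> str:
    """Replace every whole-word 'fastai2' with 'fastai' in a piece of code."""
    return FASTAI2.sub("fastai", source)

def rename_in_notebook(path: str) -> int:
    """Hypothetical helper: rewrite the code cells of an .ipynb in place.
    Returns the number of cells that changed."""
    with open(path, encoding="utf-8") as f:
        nb = json.load(f)
    changed = 0
    for cell in nb.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        src = cell.get("source", [])
        # The .ipynb format stores source as a list of strings or a single string.
        lines = src if isinstance(src, list) else [src]
        new_lines = [rename_fastai2(line) for line in lines]
        if new_lines != lines:
            cell["source"] = new_lines if isinstance(src, list) else new_lines[0]
            changed += 1
    with open(path, "w", encoding="utf-8") as f:
        json.dump(nb, f, indent=1, ensure_ascii=False)
    return changed
```

For example, rename_in_notebook("my_notebook.ipynb") would return how many code cells it changed; names like fastai20 are left alone because of the word boundaries.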