Mount your gdrive with

from google.colab import drive

drive.mount('/content/gdrive', force_remount=True)

Then use !cp to copy.
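If you would rather do the copy from Python than with !cp, here is a minimal sketch. The helper names mount_drive and copy_to_dir are my own and not part of Colab or fastai, and the Drive folder in the usage comment is only an example. The import is guarded so the cell does not crash outside Colab.

```python
import shutil
from pathlib import Path


def mount_drive(mount_point="/content/gdrive"):
    """Mount Google Drive when running inside Colab; return True on success."""
    try:
        from google.colab import drive  # only importable inside Colab
    except ImportError:
        return False
    drive.mount(mount_point, force_remount=True)
    return True


def copy_to_dir(src, dest_dir):
    """Copy one file into dest_dir (created if missing) and return the new path."""
    dest_dir = Path(dest_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    return Path(shutil.copy2(src, dest_dir))


# Example usage (paths are assumptions, adjust to your own layout):
# if mount_drive():
#     copy_to_dir("/content/data/bears/models/stage-1.pth",
#                 "/content/gdrive/My Drive/fastai_models")
```

This does the same job as !cp, it just creates the destination folder for you first.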

I think there is an easier way: download the data to gdrive from the beginning and work with it there, without any need to copy it. After training, file_paths=top_loss_paths shows the paths of the images that cause the top losses, which are again easy to handle directly from gdrive.

Hi,

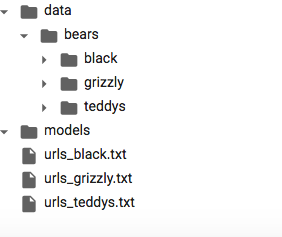

I clicked on each of the black, grizzly and teddys folders and pressed the upload button, but my urls_black.txt, urls_grizzly.txt and urls_teddys.txt files are not showing up under any of those folders. They only show up under models, so I can’t download the images per the instructions provided by Jeremy.

Thanks,

Nasim

You can move the url files with the shutil module. My colab NB defaults to the directory /content, which is where the url files appear to be. import shutil and then move the files with

shutil.move('/content/urls_black.txt', '/content/data/bears/black/urls_black.txt')

Then they will be in the right place. Or, change the directories as needed.
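To move all three files in one go, something like this should work. It is only a sketch: move_url_files is a made-up helper name, and it assumes the files are named urls_<class>.txt and should end up in one folder per class.

```python
import shutil
from pathlib import Path


def move_url_files(src_dir, dest_root, classes):
    """Move urls_<class>.txt from src_dir into dest_root/<class>/ for each class."""
    moved = []
    for name in classes:
        src = Path(src_dir) / f"urls_{name}.txt"
        if not src.exists():
            continue  # skip classes whose url file was not uploaded
        dest_dir = Path(dest_root) / name
        dest_dir.mkdir(parents=True, exist_ok=True)
        moved.append(Path(shutil.move(str(src), str(dest_dir / src.name))))
    return moved


# Example usage (paths taken from the posts above):
# move_url_files("/content", "/content/data/bears", ["black", "grizzly", "teddys"])
```

The return value lists the new locations, so you can see which files were actually found and moved.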

Error related to lesson3. I ran

! conda install -y -c haasad eidl7zip

and got the error below:

/bin/bash: conda: command not found

Any help is appreciated.

You can also use bash mv (or any shell command) in the notebook by prefixing with a !.

So !mv <source> <destination> will do the trick.

Colab does not use conda so that command does not work.

To install 7zip use !apt-get install p7zip-full, but you should not need to since the colab environment comes with it preinstalled.

You can test it in the notebook with !7za to see the usage output.
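You can also check from Python whether a 7zip binary is on the PATH before trying to install anything. This is a small sketch; find_7zip is a name I made up, and the list of binary names is an assumption about what the p7zip packages usually install.

```python
import shutil


def find_7zip(candidates=("7za", "7z", "7zr")):
    """Return the full path of the first 7zip binary found on PATH, else None."""
    for name in candidates:
        path = shutil.which(name)
        if path:
            return path
    return None


# Example usage:
# print(find_7zip() or "no 7zip binary found, try !apt-get install p7zip-full")
```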

Right-click on the data folder and you will see an upload option. Upload the files there. I hope this helps.

It's supposed to be https instead of http:

!curl https://course-v3.fast.ai/setup/colab | bash

Has anybody managed to run FileDeleter() or the newer ImageDeleter()? I got disconnected with the first and a runtime error with the latter.

Does anyone know if there is a way to save the model offline and load it later? If I do

learn.save("stage-1")

How can I save that outside of colab so I can load the weights if I start a new kernel?

You can find your saved models in data/[project]/models/ in the sidebar file viewer, then just right click on the file and choose download.

If you have mounted your google drive then just !cp the files there.

One issue I’m running into is that when I run

path = untar_data(URLs.IMDB_SAMPLE)

path.ls()

The directory it’s saved in isn’t in data/[project]/models/ but rather

[PosixPath('/root/.fastai/data/imdb_sample/texts.csv'),

PosixPath('/root/.fastai/data/imdb_sample/tmp')]

This doesn’t show up in the sidebar in Colab. What would be the best workaround if I want to download the data to data/[project]/models/?

If you have run the colab fastai install script then /content/data/ should be symlinked to /root/.fastai/data/ and show up in the sidebar.
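If you want to confirm the symlink is actually there, a quick check like the one below may help. It is a sketch only: describe_link is a hypothetical helper, and /content/data is the path mentioned above.

```python
from pathlib import Path


def describe_link(path):
    """Report whether path is a symlink, a regular entry, or missing."""
    p = Path(path)
    if p.is_symlink():
        return f"{p} -> {p.resolve()}"
    if p.exists():
        return f"{p} exists but is not a symlink"
    return f"{p} does not exist"


# Example usage:
# print(describe_link("/content/data"))
```

If it reports that the path does not exist, the setup script probably has not been run in the current session.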

The /[project] directory is created from the notebook when running

path = Config.data_path()/'project'

path.mkdir(exist_ok=True)

or automatically when running

path = untar_data()

The /models directory is automatically created when you run learn.save()
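If you are not sure where a models directory ended up, you can search for the saved weights. A minimal sketch, assuming learn.save() wrote .pth files somewhere under the data directory (find_saved_models is just a name I picked):

```python
from pathlib import Path


def find_saved_models(root):
    """Return every .pth file below root, sorted by path."""
    return sorted(Path(root).rglob("*.pth"))


# Example usage:
# for f in find_saved_models("/content/data"):
#     print(f)
```

This also turns up models directories that sit inside another folder, for example next to the images.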

I think I see what the problem is, I wasn’t running

!curl https://course-v3.fast.ai/setup/colab | bash

In my notebook. Thanks!

Following up on this question: the learner doesn’t seem to be saved under models in the files tab, even though I’ve refreshed and resaved the model multiple times.

In this case the /models dir is “hidden” in the /images dir.

Found it, thanks!

Hello,

When I use the ImageDeleter, I keep getting a runtime error. I'm not sure if it only happens in colab, or if there is a different way to use it there. Any help is appreciated. Thank you