This is off-topic and a crazy idea. I was avoiding posting this, but sometimes the ideas swirling in my mind don’t quiet down until I let them out.

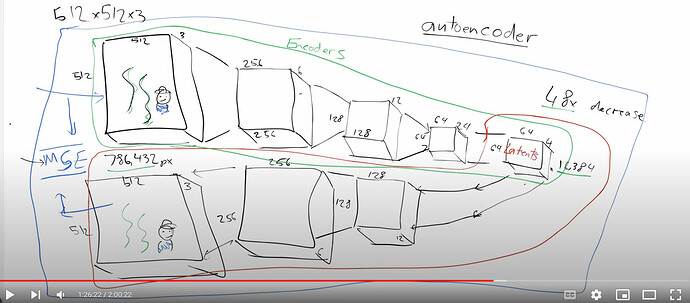

With the understanding that my brain is randomly pattern-matching on limited data, I was pondering this diagram from Lesson 9:

- Neural networks are probabilistic.

- Quantum mechanics is probabilistic.

- Nature tends to evolve toward the “most efficient” solution, so information compression may form part of the universe’s underlying representation. “What if” fundamental particles (or larger objects) exist as a compressed “latent”?

- The universe “runs” in latent space. In a way similar to Stable Diffusion, vacuum energy forms a noisy background on which these latents are decoded, in a way that is consistent for all viewers at each time-step (see recommended reading).

- In experiments like the double-slit, observation affects the results because it provides an extra factor in the denoising of the latents.

- Mass slows down time due to a concentration of latent denoising.

That is all. I feel better now.

p.s. recommended reading: Fall; or, Dodge in Hell, by Neal Stephenson