Hey @atul.

Short answer: it was renamed to config_from_instance.sh

Long answer: I’m in the process of refactoring this, writing a blog post about it, and putting it in the wiki. Until then, you can refer to this Medium post: https://medium.com/slavv/learning-machine-learning-on-the-cheap-persistent-aws-spot-instances-668e7294b6d8

@slavivanov - Thx. I’m going to give it a try. Looks a bit intimidating to begin with. :-/

I think the reason it looks intimidating is that I might have overdone it with the fine details (wanted it to be as clear as possible).

I’m getting errors as below:

FYI – I created the VPC in Step 1.2 of your Medium post.

. ec2-spotter/fast_ai/start_spot_no_swap.sh --ami ami-bc508adc --subnetId subnet-XXXX --securityGroupId sg-YYYYY

Waiting for spot request to be fulfilled...

usage: aws [options] <command> <subcommand> [parameters]

aws: error: argument subcommand: Invalid choice, valid choices are:

bundle-task-complete | conversion-task-cancelled

conversion-task-completed | conversion-task-deleted

customer-gateway-available | export-task-cancelled

export-task-completed | instance-running

instance-stopped | instance-terminated

snapshot-completed | subnet-available

volume-available | volume-deleted

volume-in-use | vpc-available

vpn-connection-available | vpn-connection-deleted

Waiting for spot instance to start up...

list index out of range

Spot instance ID:

list index out of range

list index out of range

Spot Instance IP:

@atul it seems like your aws cli installation doesn’t support aws ec2 wait spot-instance-request-fulfilled, which is very weird. Can you try to upgrade it?

Also, even though it displayed an error, your Spot instance should have been launched.
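One way to confirm the diagnosis is to look for the waiter name in the "valid choices" list that the CLI prints, as in the error output above. The sketch below is only an illustration: the helper names `waiter_listed` and `upgrade_awscli_with_pip` are made up for this example, and the upgrade step assumes the CLI was installed with pip.

```shell
# Reads AWS CLI output on stdin and succeeds if the named waiter appears in it.
# (Hypothetical helper, not part of ec2-spotter.)
waiter_listed() {
    grep -qFw -- "$1"
}

# Assumption: the AWS CLI was installed with pip. Other install methods
# (OS packages, bundled installer) need their own upgrade step.
upgrade_awscli_with_pip() {
    pip install --upgrade awscli
    aws --version
}

# Usage (needs the AWS CLI installed). An invalid waiter name makes the CLI
# print its list of valid choices, which is then searched:
#   aws ec2 wait no-such-waiter 2>&1 | waiter_listed spot-instance-request-fulfilled \
#       || echo "waiter missing, try upgrading the AWS CLI"
```

If the waiter is missing from the list, upgrading the CLI is the first thing to try.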

@slavivanov – Hmmm I updated the awscli, and tried again.

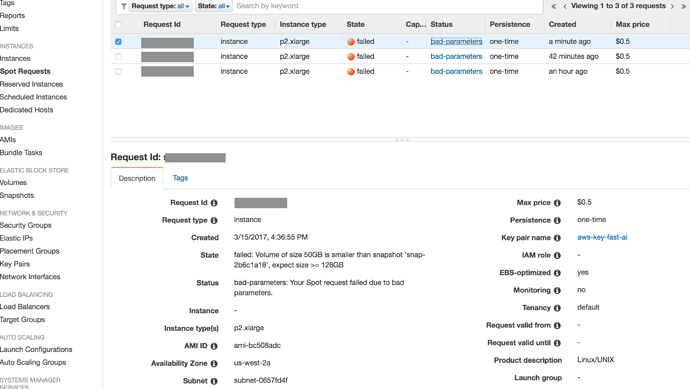

See the attached image. When I logged into the AWS console, I saw an error for the Spot Instance request that was being created but failed. The error (see attached image):

**failed: Volume of size 50GB is smaller than snapshot 'snap-2b6c1a18', expect size >= 128GB**

**Status**

**bad-parameters: Your Spot request failed due to bad parameters.**

Your script failed again, but with this new error:

. ec2-spotter/fast_ai/start_spot_no_swap.sh --ami ami-bc508adc --subnetId subnet-0657fd4f --securityGroupId sg-6e6e6e16

Waiting for spot request to be fulfilled...

Waiter SpotInstanceRequestFulfilled failed: Waiter encountered a terminal failure state

Waiting for spot instance to start up...

^C

Spot instance ID:

I pressed Ctrl-C here

An error occurred (MissingParameter) when calling the CreateTags operation: The request must contain the parameter resourceIdSet
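The CreateTags error above is consistent with the script carrying on with an empty instance ID after the waiter failed. A minimal sketch of failing early instead follows. The function names `spot_instance_id` and `tag_instance` are hypothetical and this is not the actual ec2-spotter code; the `aws ec2` subcommands and options are standard AWS CLI calls.

```shell
# Prints the instance ID behind a spot request, or fails with a message.
# (Hypothetical helper; $1 is a spot request ID such as sir-xxxxxxxx.)
spot_instance_id() {
    request_id="$1"
    # Stop here if the request ends in a terminal failure state.
    aws ec2 wait spot-instance-request-fulfilled \
        --spot-instance-request-ids "$request_id" || return 1
    instance_id=$(aws ec2 describe-spot-instance-requests \
        --spot-instance-request-ids "$request_id" \
        --query 'SpotInstanceRequests[0].InstanceId' --output text)
    # With --output text, a missing value is printed as "None".
    if [ -z "$instance_id" ] || [ "$instance_id" = "None" ]; then
        echo "No instance ID for spot request $request_id" >&2
        return 1
    fi
    echo "$instance_id"
}

# Tags an instance with a Name, refusing to call the API with an empty ID.
tag_instance() {
    if [ -z "$1" ]; then
        echo "Refusing to tag: empty instance ID" >&2
        return 1
    fi
    aws ec2 create-tags --resources "$1" --tags "Key=Name,Value=$2"
}

# Usage (needs configured AWS credentials):
#   id=$(spot_instance_id "$request_id") && tag_instance "$id" fast-ai-gpu-machine
```

With a guard like this, a failed spot request stops the script at the first error rather than producing a second, more confusing one.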

@slavivanov – OK, made some progress… though I don’t know how much.

- Changed the default Volume in your script from 50 to 128 (as AWS was complaining… see above).

- Ran start_spot_no_swap.sh. It worked. Logged into it using ssh.

**BUT: Did not find any fast.ai courses source code there.**

Then, following Step 3.1 of your Medium post, I ran sh ec2-spotter/fast_ai/config_from_instance.sh. That gave the error below:

sh ec2-spotter/fast_ai/config_from_instance.sh

usage: aws [options] <command> <subcommand> [<subcommand> ...] [parameters]

To see help text, you can run:

aws help

aws <command> help

aws <command> <subcommand> help

Unknown options: i-0fd47cabf6cd8d534

TERMINATINGINSTANCES i-0fd47cabf6cd8d534

CURRENTSTATE 48 terminated

PREVIOUSSTATE 80 stopped

TERMINATINGINSTANCES i-09e3045dc39c67773

CURRENTSTATE 32 shutting-down

PREVIOUSSTATE 16 running

Waiting for volume to become available.

Waiter VolumeAvailable failed: The volume 'vol-0d097dd15fe013a7d' does not exist.

All done, you can start your spot instance with: sh start_spot.sh

So - it managed to stop/terminate the currently running spot instance, but failed at something else?

So net-net - I’m not sure how to proceed from here.

Thanks again for your help so far.

Hey @atul, thanks for letting me know about the 128GB limit. I’ve updated the code to reflect this.
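The rule behind that error is simply that the requested root volume must be at least as large as the AMI's snapshot. A minimal sketch of checking this before launching follows. The helper names are made up for illustration; the `describe-images` query assumes the root device is the AMI's first block device mapping.

```shell
# Succeeds when the requested volume size (GB) is at least the snapshot size (GB).
# (Hypothetical helper, not part of ec2-spotter.)
volume_fits_snapshot() {
    volume_gb="$1"
    snapshot_gb="$2"
    if [ "$volume_gb" -lt "$snapshot_gb" ]; then
        echo "Volume of ${volume_gb}GB is too small, need >= ${snapshot_gb}GB" >&2
        return 1
    fi
}

# Prints the size in GB of the first block device of an AMI.
# Assumption: the first mapping is the root volume.
ami_volume_gb() {
    aws ec2 describe-images --image-ids "$1" \
        --query 'Images[0].BlockDeviceMappings[0].Ebs.VolumeSize' --output text
}

# Usage (needs configured AWS credentials):
#   volume_fits_snapshot 50 "$(ami_volume_gb ami-bc508adc)" || echo "increase the volume size"
```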

For your second question: The fast.ai ami does not include the notebooks code. I believe this is because they change frequently. Just get them by running: git clone https://github.com/fastai/courses

As for the third problem, the instance was probably not named properly. This might have been caused by the initial script having issues. Can you verify in the AWS console that you have an instance named fast-ai-gpu-machine? An alternative approach would be to pass an instance ID to config_from_instance.sh like so:

sh config_from_instance.sh --instance_id i-0fd47cabf6cd8d534

Make sure you download the latest code off github first.
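The name check can also be done from the command line instead of the console. The sketch below is an illustration only: `find_instance_by_name` is a hypothetical helper, while the `describe-instances` filter and query are standard AWS CLI usage.

```shell
# Prints the ID and state of every instance whose Name tag matches $1.
# (Hypothetical helper, not part of ec2-spotter.)
find_instance_by_name() {
    aws ec2 describe-instances \
        --filters "Name=tag:Name,Values=$1" \
        --query 'Reservations[].Instances[].[InstanceId,State.Name]' \
        --output text
}

# Usage (needs configured AWS credentials):
#   find_instance_by_name fast-ai-gpu-machine
```

Empty output means no instance carries that name, in which case passing the instance ID explicitly is the way to go.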

@slavivanov Thx - I got the new ec2_spotter scripts from git. The instance started… BUT looks like it had old info.

(FYI - I previously used Jeremy’s script to create a non-spot p2.xlarge instance, due to all the frustrations with this ec2_spotter. So ssh was not connecting to the newly created Spot instance, complaining about “Remote Host Identification change”.)

Looks like I have to re-do the whole thing (from Step 1) now

@atul If you connect to the instance before the swap is complete, and then after the swap, ssh will give that error. This will fix it:
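The command that followed is not preserved here. A common remedy for this ssh warning, offered as an assumption rather than as the original suggestion, is to remove the stale host key with `ssh-keygen -R`. The helper name `forget_host_key` is made up for this sketch.

```shell
# Removes any saved host key for the given host name or IP address.
# $2 is optional and defaults to the usual known_hosts location.
# (Hypothetical helper; ssh-keygen keeps a backup with a .old suffix.)
forget_host_key() {
    host="$1"
    known_hosts="${2:-$HOME/.ssh/known_hosts}"
    ssh-keygen -R "$host" -f "$known_hosts"
}

# Usage, with the instance's public IP or DNS name:
#   forget_host_key 203.0.113.10
```

The next ssh connection then asks to accept the new host key as if connecting for the first time.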

@slavivanov - At this juncture, I honestly don’t know if my setup at AWS is OK to run ec2_spotter.

The instance I created with Jeremy’s setup_p2.sh script was terminated (by ec2_spotter’s config_from_instance.sh). I also terminated the spot instances that start_spot.sh had started, because of the SSH problem. (Yes, I can remove that offending entry from the .ssh_hosts…)

…But I’m wondering if I should re-do the whole thing. If I were to re-do, where would one start? From Step 1.3?

I’m assuming I can reuse the security group/subnet I had created earlier.

@atul Sorry this is taking so long, it gets easier down the line

You should have a good-looking my.conf, so you can just try sh fast_ai/start_spot.sh and see what happens. If it doesn’t work, start with part 1.3 and then jump to 3.1.

Hi @slazien –

I am getting the same error that you got with nvidia-smi.

How did you fix it? Thanks

Hey! Did you see the message “dpkg was interrupted, you must manually run ‘sudo dpkg --configure -a’ to correct the problem.”? You could try doing what it says; that’s how I fixed it. I think the problem was that dpkg was still configuring some packages when I terminated a spot instance, and the corrupted package info carried over to the new spot instance. If you start a new spot instance, let it run for a couple of minutes, just to make sure.

Hi,

Just came across your post and looking forward to trying it. I have been developing something for the same purpose using docker containers on a non-boot volume. I created a separate post for this:

How did you find working with AWS? I found it rather painful!

@slazien - Thx for your note.

Sorry, I didn’t understand.

Did you run Jeremy’s install_gpu.sh script to update all the packages for Nvidia?

Or did you run something else?

Thx

No, I didn’t run that or anything else. Just sudo dpkg --configure -a and it worked.
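Put together, the fix described here amounts to finishing the interrupted package configuration and then checking the GPU again. A minimal sketch, to be run on the spot instance; the function name is hypothetical and both commands are the ones quoted in this thread.

```shell
# Finishes any interrupted dpkg configuration, then checks that the GPU
# driver responds. Stops if dpkg reports a failure.
# (Hypothetical helper wrapping the two commands from the thread.)
repair_dpkg_then_check_gpu() {
    sudo dpkg --configure -a || return 1
    nvidia-smi
}

# Usage, on the spot instance:
#   repair_dpkg_then_check_gpu
```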

Just noticed the wiki page and the blog post, great job and thanks again!

As a side note, I suspect the spot price bidding in my region went from the boring $0.2 to $0.6-0.9 because so many people are suddenly using your script. No complaints though, I think it’s great that anyone can take advantage of these technologies. For me, I just switch the instance type to g2.2xlarge whenever I need to for smaller jobs. I really like the flexibility in your approach. So thank you again!
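For anyone who wants to compare prices before switching instance types, the current spot price per availability zone can be queried from the CLI. The sketch below is an illustration: `current_spot_prices` is a made-up helper and the "Linux/UNIX" product description is an assumption about the AMI in use.

```shell
# Prints availability zone and current spot price for one instance type.
# Passing the current time as --start-time returns the prices in effect now.
# (Hypothetical helper, not part of ec2-spotter.)
current_spot_prices() {
    aws ec2 describe-spot-price-history \
        --instance-types "$1" \
        --product-descriptions "Linux/UNIX" \
        --start-time "$(date -u +%Y-%m-%dT%H:%M:%SZ)" \
        --query 'SpotPriceHistory[].[AvailabilityZone,SpotPrice]' \
        --output text
}

# Usage (needs configured AWS credentials):
#   current_spot_prices p2.xlarge
#   current_spot_prices g2.2xlarge
```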


Hey @slazien - I’m still running into the same issue - could you detail a little bit more how you solved it? I went through the spot instance setup, and everything seems to work, except when I boot into the spot instance, I get the same error from nvidia-smi.

Hi:

Setting up aws on Cygwin, I keep getting this error:

$ aws

C:\Users\bella\Anaconda2\python.exe: can’t open file ‘/cygdrive/c/Users/bella/Anaconda2/Scripts/aws’: [Errno 2] No such file or directory

I couldn’t find the threads to resolve this issue. What shall I do?

Thanks,

Rhonda