It’s a long time since I was in this code and I cannot remember precisely what I found out in the end, sorry… Can you point me to the precise point where you are having difficulties and I will see if I have made any notes in my version of the notebook.

For Lesson 12, in the CIFAR10-Darknet notebook, every line runs fine until I go to fit the custom built architecture which then yields the following error:

RuntimeError: one of the variables needed for gradient computation has been modified by an inplace operation

Thanks @airborneinf82 . That helped me refresh my memory, and now I remember that I did not get a resolution to it, apart from not using the inplace operation, as discussed in Kens post

Ahh thank you! I totally over looked that post! I will give that a try here in a bit.

Hello all and @jeremy

I’m kind of stuck on my project, I’m reaching 80% accuracy but I think I can do better, my data is unbalanced, I would really like to try a GAN to augment my data, I’m almost there. I have followed Lesson 12 but I have 3 blocking points in each of these posts of mine:

If someone could guide me by replying into the specific thread, thanks a lot for your help.

That was the trick! Fantastic, thanks. Can’t believe I over looked that post!

Great - glad to have been helpful!

To save memory; he answers this in the video.

I am unable to run the cifar10-darknet .ipnb fully on my local eGpu ( TitanXP-- Macos 10.13.6)

After 2-3 rounds of training data my GPU heated up and shut down.

I have reduced the batch size(64) as well but that helped also.

Since the image size is 32*32, So i thought it should work, but not able to build fully.

Can someone help me on this.

Looks like only option left is that i need to go to the AWS or Google.

Any suggestion or help please.

@Even Well said. Your words are practical and motivating. Thank you.

I was tinkering with the wgan notbook and decided to try not training the discriminator more times (5X and occasionally 100X) than the generator. So I changed the following in train(train(niter, first=True))

def train(niter, first=True):

#d_iters = 100 if (first and (gen_iterations < 25) or (gen_iterations % 500 == 0)) else 5

d_iters = 1 # training ratio of discriminator: generator is 1:1

print(f'Loss_D {to_np(lossD)}; Loss_G {to_np(lossG)}; '

f'D_real {to_np(real_loss)}; Loss_D_fake {to_np(fake_loss)}')

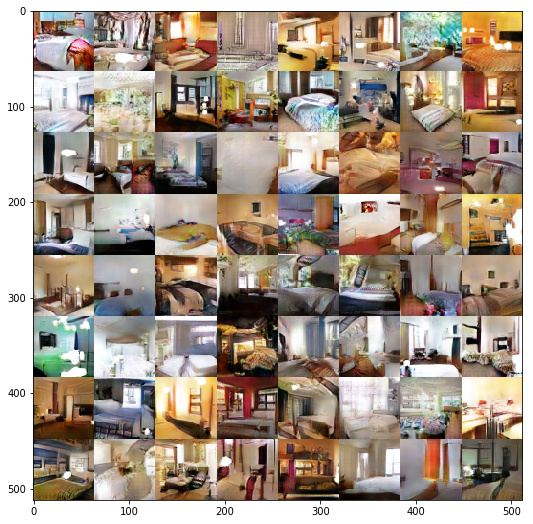

It seems to train the WGAN faster. In the first 5 iterations one can get quite respectable fake images.

train(5, False)

Anyone knows if doing this will lead to worse mode collapse or memorization or whatever GAN problems that Ian Goodfellow admonishes about?

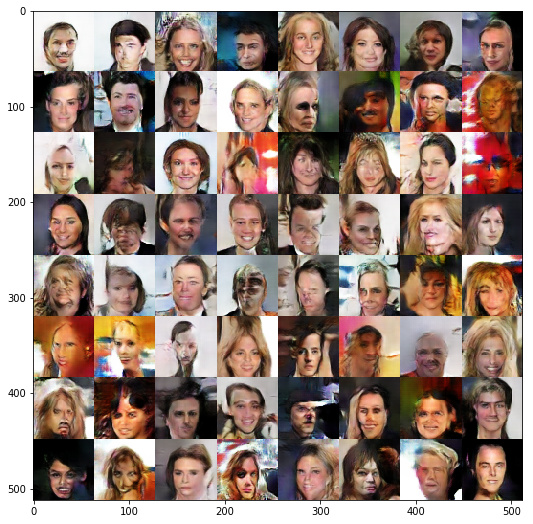

Edit: unfortunately on celebA dataset the glaring deficiency shows up quite starkly at 10 iterations.

train(5, False)

set_trainable(netD, True)

set_trainable(netG, True)

optimizerD = optim.RMSprop(netD.parameters(), lr = 1e-5)

optimizerG = optim.RMSprop(netG.parameters(), lr = 1e-5)

train(5, False)

Thanks for that but I am having a new error.

TypeError: No loop matching the specified signature and casting was found for ufunc add

Then I set learn.metrics = []

and everything works fine.

I also saw someone using pytorch 0.4 will work too

In cyclegan notebook, I got stuck at optimization process saying following error:

RuntimeError: cuda runtime error (2) : out of memory at c:\anaconda2\conda-bld\pytorch_1519501749874\work\torch\lib\thc\generic/THCStorage.cu:58

When I met this error, I followed the theory of decreasing batch-size and it worked.

However, bs is already 1 in this case, and I met same error.

Is there anything to make it work?

after setting learn.metrics=[] the issue solved for me too, but can you explain what was the reason for solving that particular issue when defined metrics?

cant find the reason

This could solve the error but what if we want to see the accuracy. which is not possible if we define the metrics as null list. if we cant find the accuracy how could we say that we have reached at the best for our model.