Hello,

I am working through the exercises in Lesson 1, and for practice I picked the task of classifying music genres. I wanted to try two approaches:

- Can the model look at sheet music notation and identify what genre the song belongs to?

- Can the model look at the audio spectrogram and identify what genre the song belongs to?

For approach 1)

Training set size: 600 images/genre

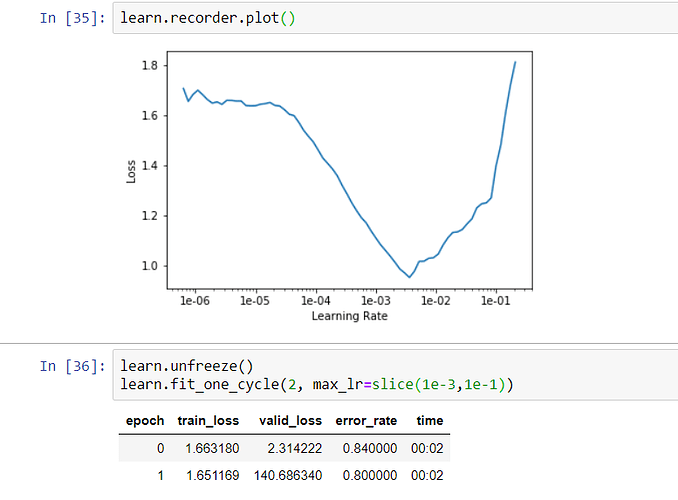

I downloaded some well-labeled guitar sheet music belonging to genres such as ‘rock’, ‘pop’, ‘classical’, ‘blues’, and ‘jazz’. This approach gave me an accuracy of 35% using resnet34, after adjusting the learning rate.

I used about 600 sheet music examples per genre
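
To put the 35% in context, here is a quick sanity check against random guessing. It is only a sketch: it assumes the five genres named above are the full label set and that the classes are balanced (600 images each).

```python
# Assumption: exactly these five labels, with equal numbers of images per label.
genres = ["rock", "pop", "classical", "blues", "jazz"]

# With balanced classes, guessing uniformly at random is right 1/N of the time.
chance_accuracy = 1 / len(genres)

# Accuracy reported for the sheet-music approach.
reported_accuracy = 0.35

# How many times better than chance the model is.
lift_over_chance = reported_accuracy / chance_accuracy

print(f"chance baseline: {chance_accuracy:.0%}")
print(f"reported:        {reported_accuracy:.0%}")
print(f"lift over chance: {lift_over_chance:.2f}x")
```

With five balanced classes the baseline is 20%, so 35% is better than guessing but not by a wide margin.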

For approach 2)

Training set size: 25 images/genre

I created spectrograms of the songs using a tool called Spek. After creating and labelling the spectrograms, I passed them through the model and got a dismal 20% accuracy.
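
For anyone unfamiliar with what the spectrogram images encode: each column is the frequency content of a short slice of audio. Below is a rough standard-library sketch of that idea. It is not how Spek works internally, and the frame size, hop, sample rate, and test tone are made-up values for illustration.

```python
import cmath
import math

FRAME_SIZE = 64   # samples per analysis frame (illustrative value)
HOP = 32          # samples between frame starts (illustrative value)


def spectrogram(samples, frame_size=FRAME_SIZE, hop=HOP):
    """Return one list of magnitudes (bins 0..frame_size//2) per frame."""
    # A Hann window tapers each frame to reduce spectral leakage at its edges.
    window = [
        0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
        for n in range(frame_size)
    ]
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = [samples[start + n] * window[n] for n in range(frame_size)]
        magnitudes = []
        # Naive DFT: slow, but fine for a small demonstration.
        for k in range(frame_size // 2 + 1):
            acc = sum(
                frame[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n in range(frame_size)
            )
            magnitudes.append(abs(acc))
        frames.append(magnitudes)
    return frames


# Hypothetical test signal: a 1 kHz sine tone sampled at 8 kHz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(512)]

spec = spectrogram(tone)

# Find the strongest frequency bin in the first frame and convert it to Hz.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
peak_hz = peak_bin * sample_rate / FRAME_SIZE
print(f"{len(spec)} frames, {len(spec[0])} bins each, peak near {peak_hz:.0f} Hz")
```

In practice a library such as librosa or SciPy would compute this far faster; the point here is only to show what each pixel column of the image represents.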

I am curious to know if there is a better approach to solving this classification problem.