Hello everyone,

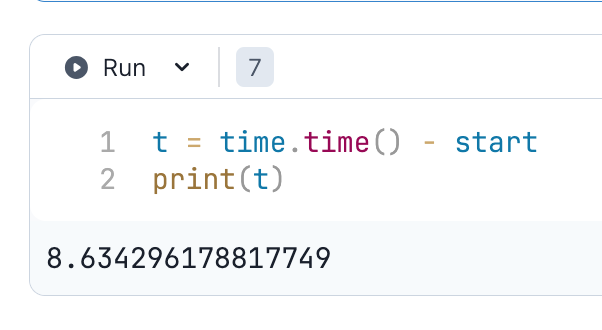

Is this normal, or is Paperspace just slow? As you can see, it takes 426 seconds (about 7 minutes).

I have an A6000 instance, and I have moved Hugging Face's .cache folder to /storage.

Thank you all.
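Instead of moving the cache folder by hand, one option is to set the `HF_HOME` environment variable (it defaults to `~/.cache/huggingface`) before importing any Hugging Face library, since those libraries read it when they are first imported. This is a minimal sketch: the target path `/storage/huggingface` and the helper name `use_persistent_hf_cache` are hypothetical, so adjust them to your own mount.

```python
import os
from pathlib import Path


def use_persistent_hf_cache(root: str = "/storage/huggingface") -> Path:
    """Point the Hugging Face cache at a persistent directory.

    Call this before importing transformers, diffusers or huggingface_hub.
    The default path is an assumption; change it to match your setup.
    """
    path = Path(root)
    # Create the directory (and parents) if it does not exist yet
    path.mkdir(parents=True, exist_ok=True)
    # Hugging Face libraries read HF_HOME to locate their cache root
    os.environ["HF_HOME"] = str(path)
    return path
```

In a notebook, run `use_persistent_hf_cache()` in the very first cell, then import `diffusers` in a later cell.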

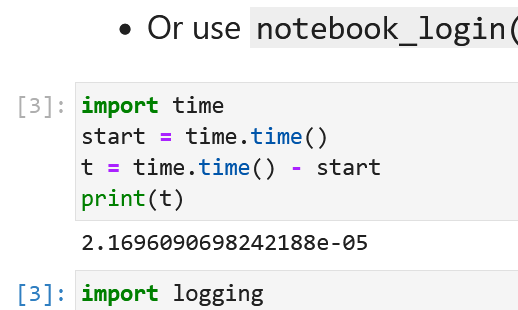

I'm not at this point in the course yet, so I don't have experience with this notebook, but I just tried it on a Free-A4000 (45 GB RAM, 8 CPUs, 16 GB GPU) in Paperspace with `notebook_login`, and it took about 9 seconds.

OK, that's not an apples-to-apples comparison. However, I tried it for fun: on this A6000 machine, it takes microseconds.

OK, interesting. This new A6000 instance is much faster, at just under 2 minutes. I have no clue what's happening. Maybe we should check which CPU model is running. Or maybe it's a vCPU on a time-sliced host.
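Both ideas can be checked from inside the notebook on Linux. The sketch below reads the CPU model from `/proc/cpuinfo` and the cumulative "steal" counter from the first line of `/proc/stat`; steal time is CPU time the hypervisor gave to other guests, so if it grows quickly while the slow cell runs, time-slicing is a plausible cause. The helper names `cpu_model` and `steal_ticks` are hypothetical, and on non-Linux systems the code falls back to `platform` or returns `None`.

```python
import os
import platform
from pathlib import Path
from typing import Optional


def cpu_model() -> str:
    """Return the CPU model name, preferring /proc/cpuinfo on Linux."""
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        for line in cpuinfo.read_text().splitlines():
            # x86 Linux reports the marketing name under "model name"
            if line.lower().startswith("model name"):
                return line.split(":", 1)[1].strip()
    # Fallback: may be generic (e.g. just the architecture) on some systems
    return platform.processor() or platform.machine()


def steal_ticks() -> Optional[int]:
    """Return cumulative steal time (USER_HZ ticks) from /proc/stat, or None."""
    stat = Path("/proc/stat")
    if not stat.exists():
        return None
    fields = stat.read_text().splitlines()[0].split()
    # Aggregate line: cpu user nice system idle iowait irq softirq steal ...
    if fields[0] != "cpu" or len(fields) < 9:
        return None
    return int(fields[8])


if __name__ == "__main__":
    print("CPU model:", cpu_model())
    print("Logical CPUs:", os.cpu_count())
    print("Steal ticks so far:", steal_ticks())
```

Calling `steal_ticks()` before and after the slow load and comparing the two values gives a rough sense of how much CPU time was taken away during that window.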

Hi @TheSaladMonster,

In the last few days, we’ve been undergoing planned maintenance which would affect networking speeds across the platform. We hope to get this finished and resolved very soon so there won’t be any more interruptions to service.

In the future, you can track our active status across our products here: https://status.paperspace.com/

Thanks for the update, @joshua-paperspace!

Hi,

Try the safetensors format, and time the load:

https://huggingface.co/docs/diffusers/using-diffusers/using_safetensors
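To time loading and generation separately, a small context manager around `time.perf_counter` (better suited to measuring intervals than `time.time`) keeps the bookkeeping in one place. This is a sketch; `timed` and the `results` dict are hypothetical names, not part of diffusers. When timing GPU work, call `torch.cuda.synchronize()` as the last line inside the `with` block so queued CUDA kernels are counted.

```python
import time
from contextlib import contextmanager


@contextmanager
def timed(label: str, results: dict):
    """Measure the wall-clock time of the enclosed block and store it in results."""
    start = time.perf_counter()
    try:
        yield
    finally:
        # Runs even if the block raises, so partial timings are still recorded
        elapsed = time.perf_counter() - start
        results[label] = elapsed
        print(f"{label}: {elapsed:.2f} s")
```

For example, wrap the `from_single_file(...)` call in `with timed("load", results):` and the pipeline call in `with timed("generate", results):` to get both numbers side by side.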

Paperspace provides the most popular datasets and model weights as ‘images’ that you can mount in the /datasets directory. When you start a VM in their Jupyter interface, open the ‘Datasets’ section → Public → click on diffusers or SDXL → Mount.

Then, in a terminal, run `ls /datasets` to see the full paths of the safetensors files, or write them to a text file:

```shell
find / -name "*.safetensors" 2>/dev/null > safetensors_paths.txt
vim ./safetensors_paths.txt
```
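The same search can be done from Python inside the notebook. This sketch uses `pathlib.Path.rglob` and limits the search to the mount point instead of walking the whole filesystem as `find /` does; the helper names and the default root `/datasets` are assumptions.

```python
from __future__ import annotations

from pathlib import Path


def find_safetensors(root: str = "/datasets") -> list[str]:
    """Return the sorted paths of every *.safetensors file under root."""
    return sorted(str(p) for p in Path(root).rglob("*.safetensors") if p.is_file())


def save_paths(paths: list[str], out: str = "safetensors_paths.txt") -> None:
    """Write one path per line, like the `find ... > file` redirect."""
    Path(out).write_text("".join(f"{p}\n" for p in paths))
```

Calling `save_paths(find_safetensors())` produces a `safetensors_paths.txt` you can open in the notebook's file browser.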

Load it into the pipeline using:

```python
from diffusers import StableDiffusionXLPipeline, StableDiffusionXLImg2ImgPipeline
import torch
import datetime

g = torch.Generator(device="cuda")

# Time loading the SDXL base weights from the mounted dataset
start_st = datetime.datetime.now()
pipeline_text2image = StableDiffusionXLPipeline.from_single_file(
    "/datasets/stable-diffusion-xl/sd_xl_base_1.0.safetensors",
    torch_dtype=torch.float16,
    variant="fp16",
    use_safetensors=True,
).to("cuda")
torch.cuda.synchronize()  # wait until the weights are actually on the GPU
load_time_st = datetime.datetime.now() - start_st

# Time generating a single image
gen_start_st = datetime.datetime.now()
prompt = "A brazilian young female model on the backseat of the car, 8k"
image = pipeline_text2image(prompt=prompt, num_inference_steps=50, generator=g).images[0]
gen_time_st = datetime.datetime.now() - gen_start_st

print(f"Loaded safetensors {load_time_st}")
print(f"The time taken to gen image at A4000 {gen_time_st}")

# Loaded safetensors 0:00:03.743833
# The time taken to gen image at A4000 0:00:20.074452
```

I've never made such a mess on the forum, but it's 5 a.m. in my time zone. I'm sorry.

```python
image.show()
```

There was an output! On a second run, after restarting the kernel, it took about 4 seconds.

Have a nice day / good night!