I saw your code and found it to be very helpful. I was having problems training the model on Colab, but after seeing your notebook I was inspired to give it another try.

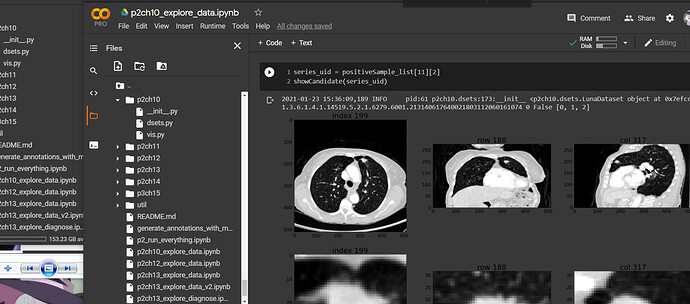

I don’t think there’s any way to train on the entire dataset on a Colab instance. As I mentioned earlier, I’m storing the entire dataset in a GCP bucket, which can be mounted to a Colab instance. I was able to get the model to start training without crashing the Colab instance, but the only way to do this was to reduce the number of workers from 4 to 1 and decrease the batch size from 32 to 8. Of course, this slowed down training, and with the latency of reading data from a bucket on the other side of the world, the estimate for finishing one training epoch was 72 days (no joke). Transferring data out of a GCP bucket also costs $0.12 USD per GB, so moving the entire dataset would cost about $10.
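
The batch-size change has a simple arithmetic consequence: at a quarter of the batch size, an epoch needs four times as many batches, and each batch pays its own data-loading and per-step overhead. A small sketch of that arithmetic and of the flat per-GB transfer cost; the sample count is hypothetical, not the real size of the dataset.

```python
def steps_per_epoch(num_samples: int, batch_size: int) -> int:
    """Batches needed to see every sample once; a final partial batch still counts
    (matching PyTorch's DataLoader default, drop_last=False)."""
    return (num_samples + batch_size - 1) // batch_size  # integer ceiling division


def transfer_cost_usd(size_gb: float, usd_per_gb: float = 0.12) -> float:
    """Egress cost at a flat per-GB rate ($0.12/GB is the rate quoted above)."""
    return size_gb * usd_per_gb


num_samples = 500_000  # hypothetical sample count, for illustration only
print(steps_per_epoch(num_samples, 32))  # 15625
print(steps_per_epoch(num_samples, 8))   # 62500
```

Four times as many steps per epoch only explains part of the slowdown; the per-batch latency of reading from a remote bucket is multiplied along with it.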

I am able to run training on a virtual machine in GCP, where one epoch on the full dataset takes about 20 minutes. To reduce latency, I copy the dataset from my GCP bucket to the VM’s data disk, but that takes 3 to 4 hours. As far as I know, the data has to be available locally, so I think this is a required step. I was only able to do this with a VM with 8 vCPUs, 30 GB of RAM, and a Tesla T4. I had tried earlier with a less powerful VM, but the estimate for training one epoch on it was about 2 days. Unfortunately, I don’t think you can change the specs of a GCP VM after it’s been created, so every time I want to change something I have to start from the beginning.
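
The copy-to-local-disk step can be sketched in Python. This assumes the bucket is mounted as a local directory (for example with Cloud Storage FUSE, as on Colab). Running many file copies at once can hide per-file latency, and skipping files that are already complete lets an interrupted copy resume. It is a minimal sketch; the paths in the usage line are hypothetical, and `gsutil -m cp -r` does a similar parallel copy from the command line.

```python
import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def copy_tree_parallel(src: Path, dst: Path, max_workers: int = 16) -> int:
    """Copy every file under src to the same relative path under dst.

    Returns the number of files actually copied (already-complete files are skipped).
    """
    files = [p for p in src.rglob("*") if p.is_file()]

    def copy_one(p: Path) -> bool:
        target = dst / p.relative_to(src)
        # Treat a same-size file as already copied, so a rerun resumes cheaply.
        if target.exists() and target.stat().st_size == p.stat().st_size:
            return False
        target.parent.mkdir(parents=True, exist_ok=True)
        shutil.copyfile(p, target)
        return True

    # Threads suit this I/O-bound work: each one mostly waits on network or disk.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return sum(pool.map(copy_one, files))


# Hypothetical paths: a mounted bucket and the VM's data disk.
# copied = copy_tree_parallel(Path("/mnt/gcs/luna"), Path("/mnt/disks/data/luna"))
```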

For what it’s worth, I was able to train one epoch on the entire dataset on my local machine (Core i7, 16 GB RAM, GeForce GTX 1060 with 6 GB) in a reasonable amount of time (I think it was around an hour), but the impression I get from the book is that this should take about ten minutes. Considering that my local machine is fairly powerful and that I’m not making any changes to the code, I don’t understand why training takes so long.
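
One way to check whether a slow epoch is limited by data loading rather than by the GPU is to time the two separately. A minimal, standard-library sketch: `batches` would be the training DataLoader and `step` a hypothetical function doing the forward pass, backward pass and optimizer step. Because CUDA work runs asynchronously, `step` would need to call `torch.cuda.synchronize()` before returning for the compute time to be meaningful.

```python
import time
from typing import Any, Callable, Iterable, Tuple


def time_epoch(batches: Iterable[Any], step: Callable[[Any], None]) -> Tuple[float, float]:
    """Run one pass over `batches`; return (seconds waiting for data, seconds in step)."""
    data_wait = 0.0
    compute = 0.0
    it = iter(batches)
    while True:
        t0 = time.perf_counter()
        try:
            # With a synchronizing step, the GPU is idle while this call waits.
            batch = next(it)
        except StopIteration:
            break
        t1 = time.perf_counter()
        step(batch)  # hypothetical training step
        t2 = time.perf_counter()
        data_wait += t1 - t0
        compute += t2 - t1
    return data_wait, compute
```

If `data_wait` dominates, more DataLoader workers (`num_workers`) or faster storage is likely to help more than a faster GPU.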

I also have an issue with the amount of disk space this project requires. Running the program “training.py” in chapter 11 calls “prepcache.py”, which creates a cache that is even bigger than the dataset. Between the original dataset and this cache, nearly 300 GB of my hard drive is taken up, which is more than I can spare. As far as I can tell, the book doesn’t really cover what’s happening in prepcache.py, and I found its code difficult to follow. I’ve never seen anything like this in any of the models I’ve built before, so I’d like to know why this step is necessary and how it makes training more efficient.
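
The book’s prepcache.py is not reproduced here, but the general pattern behind a preprocessing cache can be sketched. Assuming each training sample comes from an expensive function (hypothetically, reading a whole CT scan from disk and cropping a small region around one candidate), memoizing that function to disk means the expensive work happens once and every later epoch only reads back the stored result. This standard-library sketch stores pickle files keyed by a hash of the arguments; it illustrates the idea, it is not the book’s implementation, and `crop_candidate` is a hypothetical name. The trade-off is the extra disk space the cached entries occupy.

```python
import functools
import hashlib
import os
import pickle
from pathlib import Path
from typing import Any, Callable


def disk_memoize(cache_dir: Path) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Cache a function's return values as pickle files under cache_dir."""
    cache_dir.mkdir(parents=True, exist_ok=True)

    def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(fn)
        def wrapper(*args: Any) -> Any:
            # Key on the function name plus its positional arguments (must be picklable).
            key = hashlib.sha256(pickle.dumps((fn.__qualname__, args))).hexdigest()
            path = cache_dir / f"{key}.pkl"
            if path.exists():
                # Cache hit: load the stored result instead of recomputing it.
                with path.open("rb") as f:
                    return pickle.load(f)
            result = fn(*args)
            # Write to a per-process temp file, then rename it into place, so a
            # reader never sees a half-written cache entry.
            tmp = path.with_name(f"{key}.{os.getpid()}.tmp")
            with tmp.open("wb") as f:
                pickle.dump(result, f)
            os.replace(tmp, path)
            return result

        return wrapper

    return decorator


# Hypothetical usage on the expensive per-sample step:
# @disk_memoize(Path("/mnt/disks/data/cache"))
# def crop_candidate(series_uid, center_xyz): ...
```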

I do realize that it’s possible to train on just a single subset of the data, but I’m going to keep trying with the full dataset, partly because I want the experience of working with a massive dataset, and partly because I’m convinced it shouldn’t be that difficult (it’s in a beginner’s book, after all).

That said, I find the style of Part 2 very different from Part 1. Part 1 was a lot like the instruction in FastAI: you’re encouraged to go through the code line by line and understand exactly what’s happening at every step. In Part 2, though, the code feels opaque and difficult to follow. Most of it calls helper functions tucked away in other files, which in turn call helper functions tucked away in yet other files. I appreciate that this is more efficient from a developer’s perspective, but from a student’s perspective I don’t feel it’s optimal for teaching.