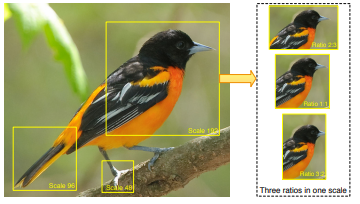

Hey everyone, for the past few months I’ve been working on implementing NTS-Net in fastai. The paper is here: https://arxiv.org/abs/1809.00287

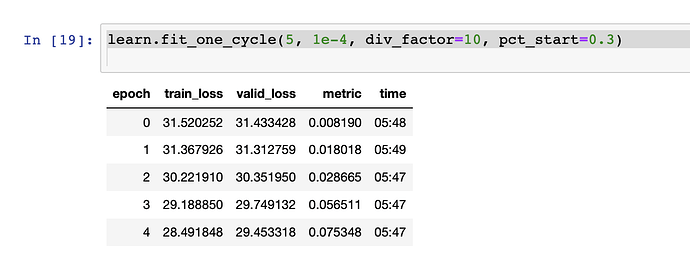

Essentially, it is a method that uses bounding boxes for image classification without needing any box labels, which helps with fine-grained subject matter. In this (very) narrow demonstration of what it can do, I compared a standard ResNet-50 against NTS-Net. Both were pretrained, but I did not do any freezing/unfreezing, as I am still working on that. The ResNet reached 78% accuracy in 4 epochs, while NTS-Net reached 82%. On a snake classification dataset I have been working on, it performed even better, showing a 10% improvement overall. The next step is to enable split() (which should be done in the next day or two); beyond that, I invite everyone who wants to experiment to try out a few ideas!
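For anyone curious how the model can pick regions without box labels: as I understand the paper, a navigator network scores many candidate anchor boxes for "informativeness", and non-maximum suppression (NMS) keeps the top few that don't overlap each other too much. Below is a minimal pure-Python sketch of just that selection step. It is not the code from my repo; the (x1, y1, x2, y2) box format, the function names, and the 0.25 IoU threshold default are illustrative assumptions.

```python
from typing import List, Tuple

# Assumed box format: (x1, y1, x2, y2) in pixel coordinates.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def select_top_regions(boxes: List[Box], scores: List[float],
                       top_k: int, iou_thresh: float = 0.25) -> List[int]:
    """Greedy NMS: walk boxes from highest to lowest informativeness score,
    keeping a box only if it overlaps every already-kept box by at most
    iou_thresh. Stops once top_k boxes are kept. Returns kept indices."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_thresh for j in keep):
            keep.append(i)
            if len(keep) == top_k:
                break
    return keep


# Usage: box 1 heavily overlaps box 0 (IoU = 81/119, about 0.68), so it is suppressed.
boxes = [(0, 0, 10, 10), (1, 1, 11, 11), (20, 20, 30, 30), (0, 20, 10, 30)]
scores = [0.9, 0.8, 0.7, 0.6]
print(select_top_regions(boxes, scores, top_k=2))  # -> [0, 2]
```

The kept regions are then cropped, resized, and classified alongside the full image; the number of regions kept (top_k here) is the one knob the paper's ablation varied.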

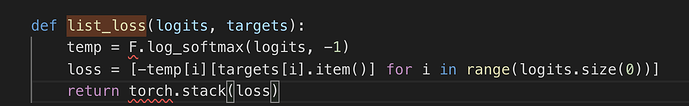

In the paper, the only ablation study they performed was on the number of boxes (regions) proposed. There was nothing on region size, on Jeremy’s size-up technique (progressive resizing), etc. That’s something I’d like to look into myself, and I plan to, but I would also like to hear other ideas for fine-tuning this powerful model (as of the paper’s publication, it held the highest accuracy on the Caltech-UCSD Birds (CUB-200-2011) dataset). I already saw an improvement when I included LabelSmoothingCrossEntropy, but there are a few other things I’m curious about and would like opinions on.

I feel the loss function is too harsh in how it judges the way the regions are laid out. Anyone have ideas? I’d like to weight a poor choice of focus region less heavily than a wrong prediction, since the two should be fairly well correlated. In any case, let me know if you have any questions; the GitHub repo will be updated constantly with improvements toward the fastai implementation.
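Here is a rough pure-Python sketch (single example, no PyTorch) of the two loss ideas above. The label-smoothed cross-entropy follows the formula fastai’s LabelSmoothingCrossEntropy uses, as far as I know: eps/c times the summed negative log-probabilities, plus (1 - eps) times the usual NLL. The ranking term is a pairwise hinge in the spirit of the paper’s navigator loss as I read it: a region the classifier is more confident about should get a higher informativeness score, by a margin of 1. The weighted total, its term names (raw, concat, part, rank), and `w_rank` are hypothetical, just one way to make a poor choice of focus cost less than a wrong prediction; they are not from the paper or my repo.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable log-softmax over a list of logits."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]


def label_smoothing_ce(logits: Sequence[float], target: int, eps: float = 0.1) -> float:
    """(1 - eps) * NLL of the target + eps * mean over classes of -log p."""
    logp = log_softmax(logits)
    c = len(logp)
    nll = -logp[target]
    smooth = -sum(logp) / c
    return (1 - eps) * nll + eps * smooth


def ranking_hinge_loss(informativeness: Sequence[float],
                       confidence: Sequence[float], margin: float = 1.0) -> float:
    """For every pair where region j is more confident than region i,
    penalize max(0, margin - (I_j - I_i)) so informativeness order
    follows confidence order."""
    n = len(informativeness)
    loss = 0.0
    for i in range(n):
        for j in range(n):
            if confidence[j] > confidence[i]:
                loss += max(0.0, margin - (informativeness[j] - informativeness[i]))
    return loss


def weighted_total_loss(raw: float, concat: float, part: float,
                        rank: float, w_rank: float = 0.5) -> float:
    """HYPOTHETICAL weighting: classification terms at full weight,
    the region-ranking ("focus") term scaled down by w_rank."""
    return raw + concat + part + w_rank * rank


# Usage with made-up numbers for one image and three candidate regions.
print(label_smoothing_ce([2.0, 0.5, -1.0], target=0))
print(ranking_hinge_loss([0.2, 1.5, 0.9], [0.7, 0.4, 0.9]))
```

Setting `w_rank` below 1 is the simplest version of "weight a poor choice of focus less than a wrong prediction"; a schedule that raises it once the classifier stabilizes would be another option to try.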

Here is the PETS notebook as an example:

The source code I have been using comes from two places: the original implementation and the pytorchcv library.

Please let me know of any issues. Again, split() and gradual unfreezing should be sorted out in the next day or two. Thank you very much, guys, and thank you to those who’ve helped me get to this point. This was my first pure PyTorch implementation in fastai.

It should be NTSNet now. Give me a moment to double-check that was the only change.