Summary: I have been watching the new fast.ai NLP course, and I thought of using a WhatsApp group chat I am part of as training data. I could not find a pretrained Dutch language model, so I used Jeremy’s notebook to train a Dutch wiki model to use for transfer learning.

I then used this Dutch wiki model as the starting point for training on my WhatsApp data, with two goals: a classifier that predicts who sent a given message, and text generation.

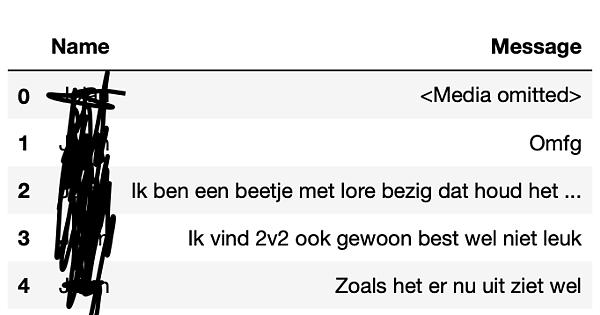

The data is about 35k messages sent over WhatsApp from early 2017 until about two weeks ago. The group contains 3 people. The messages are mostly Dutch, with a decent amount of English text, mostly related to video games. Unfortunately I cannot share this dataset, since it contains a lot of personal data and some passwords that would be very tricky to filter out. The CSV looks like this:

My notebooks are here. I can upload the Dutch wiki language model if people are interested. I only trained it for a single epoch, since one epoch took 2 hours 30 minutes.

I am posting this partly to help anyone who is interested in this, but also to ask some questions:

- I ran my wiki download on a Paperspace P6000 server. Jeremy’s notebook looked like it took about 30 minutes to train on the whole English wiki, so I do not quite understand why mine took 2 hours 30 minutes.

- My classifier was not very good. This is understandable, since the three people in the WhatsApp group use very similar language. However, I trained it in a few different ways and my results always resembled this: https://imgur.com/a/piZzBix where 90% of the validation set was attributed to one person. My dataset is sorted by name, which afaik should not be a problem, but I cannot figure out why this keeps happening.
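One thing worth ruling out for the second question is how the validation set is cut from a name-sorted file. The sketch below is not the real pipeline and only tests one possible explanation. The sender names and messages are made up, and `tail_split`, `stratified_split` and `sender_counts` are hypothetical helpers written for this illustration. It compares holding out the last 20% of the rows as-is with holding out 20% of each sender's messages.

```python
import random
from collections import Counter


def tail_split(rows, valid_frac=0.2):
    """Hold out the last valid_frac of the rows, keeping their existing order."""
    n_valid = round(len(rows) * valid_frac)
    return rows[:len(rows) - n_valid], rows[len(rows) - n_valid:]


def stratified_split(rows, valid_frac=0.2, seed=42):
    """Hold out valid_frac of each sender's messages, chosen at random."""
    rng = random.Random(seed)
    by_sender = {}
    for sender, text in rows:
        by_sender.setdefault(sender, []).append((sender, text))
    train, valid = [], []
    for group in by_sender.values():
        rng.shuffle(group)
        n_valid = round(len(group) * valid_frac)
        valid.extend(group[:n_valid])
        train.extend(group[n_valid:])
    return train, valid


def sender_counts(rows):
    """Count how many rows belong to each sender."""
    return Counter(sender for sender, _ in rows)


# Made-up stand-in for the real CSV: three senders, rows sorted by name.
rows = [(name, f"message {i}")
        for name in ("anna", "bram", "chris")
        for i in range(100)]

_, valid_tail = tail_split(rows)
_, valid_strat = stratified_split(rows)

print("tail split:      ", dict(sender_counts(valid_tail)))
print("stratified split:", dict(sender_counts(valid_strat)))
```

With sorted rows, the tail split puts a single sender in the validation set, while the stratified split keeps all three. If the split is already random, sorting should indeed not matter, and printing the sender counts for the training and validation sets is still a cheap way to see whether one person simply has far more messages than the others.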

This has been a fun project to work on so far and I will keep spending time on it. Hope this post is helpful for people trying to do related work.