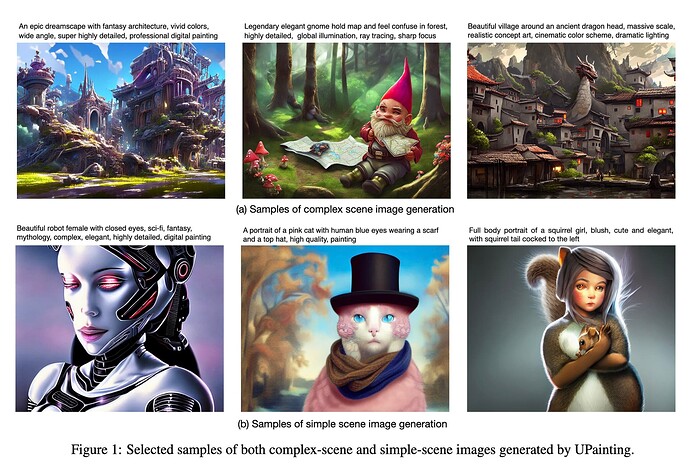

At least based on the results shown in the paper (not that you should be taking that to be the norm …) this seems to give some great looking results ![]() The authors claim that people rated images generated by UPainting to be more visually pleasing than those generated by Stable Diffusion …

The authors claim that people rated images generated by UPainting to be more visually pleasing than those generated by Stable Diffusion …

The difference in the approach (vs. Stable Diffusion) seems to be in using a text-image matching component. I’d be interested to try this approach out to see how well it performs in general scenarios.

The paper is here:

Here’s some sample output as mentioned in the paper itself (no code exists at the moment, so I can’t test it out myself):