Hello,

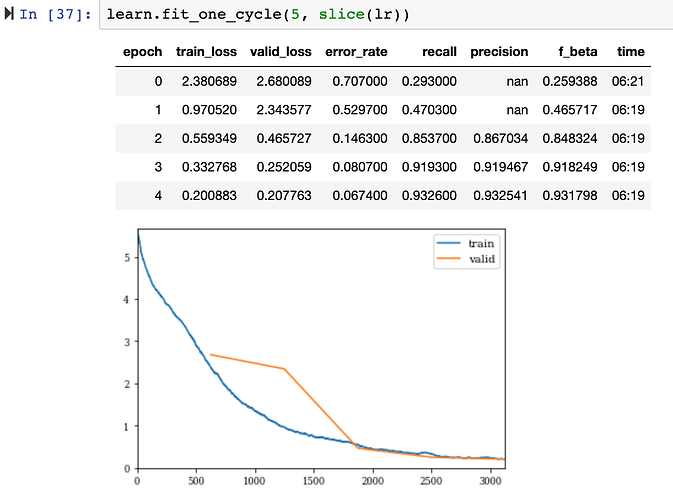

I am seeing NaN values during the early epochs of training when using `prec = Precision(average='macro')` as a metric. I am doing multi-class classification on image data with resnet34.

In the figure above, the y-axis is the loss and the x-axis is the number of batches processed.

Why does NaN still appear after the first epoch?

I assume I am getting NaN because there is a division by zero somewhere. This post mentions that NaN can occur when:

> Whenever there is a class in a batch with no images then you would divide by 0 and therefore get nan.

I am confused by this because, as far as I can tell from the source code, the Precision class calculates the precision at the end of the epoch rather than per batch. Is this correct?
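To make the zero-division case concrete, here is a minimal pure-Python sketch of how I understand macro precision. This is not fastai's actual implementation; `macro_precision` and the toy labels are made up for illustration. Per-class precision is TP / (TP + FP), so if a class is never predicted its denominator is zero. I am assuming the library's tensor division returns NaN for 0/0 rather than raising, so the sketch returns NaN explicitly in that case:

```python
import math

def macro_precision(preds, targets, n_classes):
    """Macro-averaged precision over integer class labels (hypothetical helper)."""
    per_class = []
    for c in range(n_classes):
        # True positives: predicted c and actually c.
        tp = sum(1 for p, t in zip(preds, targets) if p == c and t == c)
        # Everything predicted as c, i.e. TP + FP.
        predicted = sum(1 for p in preds if p == c)
        # Plain Python would raise ZeroDivisionError on 0/0; use NaN instead
        # to mimic float tensor division (an assumption about the library).
        per_class.append(tp / predicted if predicted else math.nan)
    # A single NaN class makes the unweighted mean NaN as well.
    return sum(per_class) / n_classes, per_class

# Labels accumulated over a whole (toy) validation epoch.
targets = [0, 1, 2, 0, 1, 2]
early_preds = [0, 0, 0, 0, 1, 1]  # class 2 is never predicted
later_preds = [0, 1, 2, 0, 1, 1]  # every class is predicted at least once

early_macro, early_per_class = macro_precision(early_preds, targets, 3)
later_macro, later_per_class = macro_precision(later_preds, targets, 3)

print(early_per_class, early_macro)
print(later_per_class, later_macro)
```

If this reading is right, the NaN would not depend on batch composition at all: it would appear in any epoch where the model never predicts some class across the whole validation set, which seems plausible early in training.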

Can someone help me work through where I am going wrong?