I picked the problem of telling cheetahs apart from leopards because my daughter found it difficult to do and suggested it.

The data set was close to 100 black-and-white photos of each animal, plus a validation set of around 20 each. I chose black and white mainly because the colours in the googled images were not genuine and I did not want the system to learn the wrong things. I also wanted to make the challenge tougher.

The results were quite decent given that the data set was neither big nor of great quality. Most of the time I got around 85% accuracy, and sometimes 90%.
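
To put those numbers in perspective, here is a rough sketch of what they mean in terms of images. It assumes the validation set is exactly 20 + 20 = 40 images (the real count was only "around 20 each"), so treat the figures as approximate.

```python
# Assumption: exactly 20 cheetah + 20 leopard validation images.
val_images = 20 + 20

# Each validation image is worth this many percentage points of accuracy.
points_per_image = 100 / val_images

# Convert the two reported accuracies into counts of misclassified images.
errors = {}
for accuracy in (0.85, 0.90):
    correct = round(accuracy * val_images)
    errors[accuracy] = val_images - correct

print(f"one image = {points_per_image} percentage points")
for accuracy, wrong in errors.items():
    print(f"{accuracy:.0%} accuracy -> {wrong} of {val_images} images wrong")
```

With a validation set this small, the gap between an 85% run and a 90% run is only a couple of images, so some run-to-run variation is to be expected.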

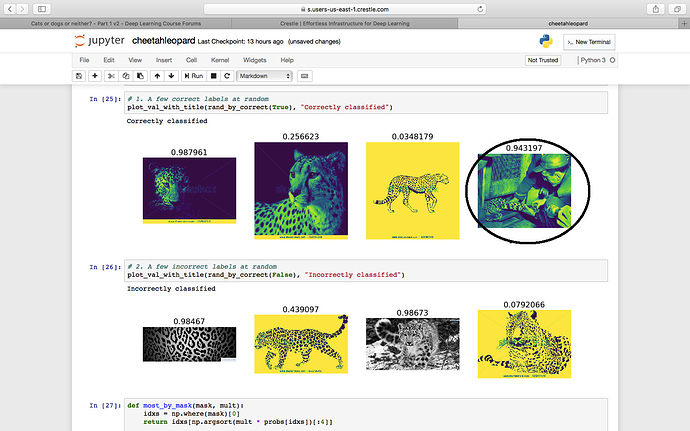

The most astonishing thing was that it classified a very difficult image (the circled image, where only part of the leopard's body is visible) correctly EVERY TIME!!! Even a very experienced wildlife photographer friend of mine was a bit confused, but the machine got it 100% correct. But it made mistakes with some very obvious images where the spots / rosettes were very clearly visible.

I am very intrigued by the behaviour of this…

I don’t know why my B&W images were displayed as single-tone colour images instead of black and white.
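
One possible cause, not verified for this notebook, is the plotting step rather than the images themselves: matplotlib's `imshow` applies its default colormap to any single-channel (2-D) array, which tints a grayscale image unless `cmap="gray"` is passed. The sketch below uses a synthetic array as a hypothetical stand-in for one photo.

```python
import numpy as np
import matplotlib.pyplot as plt

# Hypothetical stand-in for one B&W photo: a 2-D array of pixel intensities.
gray = np.linspace(0.0, 1.0, 64 * 64).reshape(64, 64)

fig, (ax_default, ax_gray) = plt.subplots(1, 2, figsize=(6, 3))

# No cmap given: matplotlib falls back to its default colormap (tinted output).
im_default = ax_default.imshow(gray)
ax_default.set_title("default colormap")

# Explicit grayscale colormap: the image is shown in black and white.
im_gray = ax_gray.imshow(gray, cmap="gray")
ax_gray.set_title('cmap="gray"')

default_cmap_name = im_default.get_cmap().name
gray_cmap_name = im_gray.get_cmap().name
print(default_cmap_name, gray_cmap_name)
```

If the images are loaded as three-channel RGB, this would not apply and the tint would have to come from somewhere else, for example the source files themselves.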

Another problem I faced was that my learning rate finder output could not be plotted. I got a blank plot, however many times I tried.
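
One possible explanation, which is an assumption rather than something confirmed here, is that the finder recorded too few points. Some plotting helpers drop a number of points from the start and end of the curve before drawing it, so a very small training set can leave nothing to plot. The sketch below illustrates the arithmetic; the function name, the trim sizes of 10 and 5, the single pass over the data, and the batch sizes are all hypothetical, so check what your library version actually does.

```python
import math

def points_left_to_plot(n_train, batch_size, skip_start=10, skip_end=5):
    """Count the points that survive trimming after one pass over the data.

    Assumes the finder records one point per mini-batch for a single pass,
    and that the plot drops `skip_start` points from the beginning and
    `skip_end` points from the end (hypothetical defaults).
    """
    iterations = math.ceil(n_train / batch_size)
    return max(0, iterations - skip_start - skip_end)

# Roughly 100 photos per class gives about 200 training images.
for batch_size in (64, 8):
    left = points_left_to_plot(200, batch_size)
    print(f"batch size {batch_size}: {left} points left to plot")
```

Under these assumptions a batch size of 64 leaves nothing to draw, while a smaller batch size (or smaller trim values, if the plotting function lets you set them) leaves a usable curve.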