Hi! I’m trying to train a model on images with multiple coordinates in them and I’m pretty lost on how to do it correctly. I’m creating a digit sequence detector and I have labeled the training images with coordinates of each digit as below:

I tried to replicate the lesson 3 head pose notebook, but I have not managed to create a DataBunch with multi-coordinate labels. The file names and labels are in a DataFrame, df:

    img       tensors
    0000.png  tensor([[63.,58.],[63.,211.],[57.,375.],[74.,501.],[59.,645.],[77.,800.]])
    0001.png  tensor([[64.,112.],[56.,418.],[73.,596.]])
    ...       ...

My attempt below at creating a DataBunch gives a warning when each label is a tensor with multiple coordinates.

    def get_label(x):
        # look up the label row whose file name matches this image
        vals = df.loc[df['img']==x.name]['tensors'].values[0]
        # returns a tensor such as tensor([[64.,112.],[56.,418.],[73.,596.]])
        return vals

    data = (PointsItemList.from_folder(path_images)
            .split_by_rand_pct()
            .label_from_func(get_label)
            .databunch(bs=16)
           )

You can deactivate this warning by passing no_check=True.

/usr/local/lib/python3.6/dist-packages/fastai/basic_data.py:269: UserWarning: It’s not possible to collate samples of your dataset together in a batch.

Shapes of the inputs/targets:

[[torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000]), torch.Size([3, 150, 1000])], [torch.Size([2, 2]), torch.Size([8, 2]), torch.Size([7, 2]), torch.Size([2, 2]), torch.Size([7, 2]), torch.Size([4, 2]), torch.Size([7, 2]), torch.Size([3, 2]), torch.Size([4, 2]), torch.Size([3, 2]), torch.Size([4, 2]), torch.Size([3, 2]), torch.Size([6, 2]), torch.Size([2, 2]), torch.Size([7, 2]), torch.Size([2, 2])]]

warn(message)
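
Reading the shapes in the warning, every image is 3 x 150 x 1000, but the targets have anywhere from 2 to 8 rows, which is presumably why they cannot be stacked into one batch. Below is a minimal sketch of one possible workaround: padding every label to the same number of points. It is plain Python and not a fastai API. `pad_points`, `MAX_POINTS` and `PAD_VALUE` are hypothetical names, the maximum of 8 points is an assumption taken from the batch shown above, and I have not verified how the padding values interact with the coordinate scaling that PointsItemList applies.

```python
# Hypothetical helper, not part of fastai: pad a variable-length list of
# points to a fixed length so that every label ends up with the same shape.
MAX_POINTS = 8     # assumption: the largest number of digits in any image
PAD_VALUE = -1.0   # assumption: a sentinel that never occurs as a real coordinate


def pad_points(points, max_points=MAX_POINTS, pad_value=PAD_VALUE):
    """Return a copy of `points` extended with [pad_value, pad_value] rows."""
    if len(points) > max_points:
        raise ValueError(f"got {len(points)} points, expected at most {max_points}")
    padded = [list(p) for p in points]
    # build each padding row separately so the rows do not alias one list
    padded += [[pad_value, pad_value] for _ in range(max_points - len(points))]
    return padded


# Two labels with different numbers of points, as in the DataFrame above.
labels = [
    [[64., 112.], [56., 418.], [73., 596.]],
    [[63., 58.], [63., 211.]],
]
padded_labels = [pad_points(p) for p in labels]

# After padding, every label has the same (rows, columns) shape.
shapes = {(len(p), len(p[0])) for p in padded_labels}
print(shapes)
```

In get_label, the padded list could then be wrapped in torch.tensor(...) before being returned. The loss function would also need to ignore the padded rows, for example with a mask, otherwise the model would be trained to predict the sentinel values.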

The dataloader works fine when each label is a single coordinate pair rather than the full list, so I assume I'm formatting the labels incorrectly. Below, I have modified the label function to return only the first coordinate pair, and it gives no warning.

    def get_label(x):
        # keep only the first coordinate pair of the matching label
        vals = df.loc[df['img']==x.name]['tensors'].values[0][0]
        # returns a tensor such as tensor([64.,112.])
        return vals

    data = (PointsItemList.from_folder(path_images)
            .split_by_rand_pct()
            .label_from_func(get_label)
            .databunch(bs=16)
           )

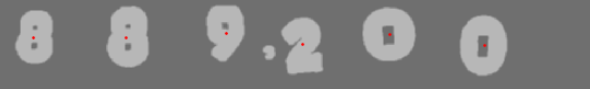

    data.show_batch()


Any ideas what I’m missing? Thanks a bunch!