I am reading this paper: https://arxiv.org/abs/1705.01583.

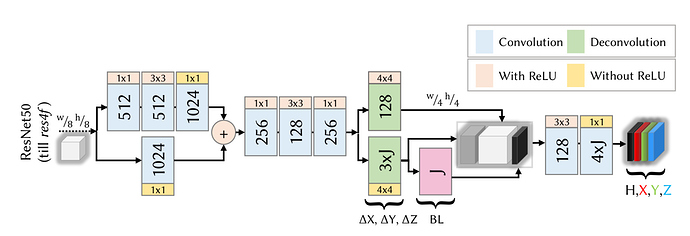

This network performs 2D and 3D pose estimation at the same time. The output layer H gives the 2D pose estimate, and X, Y, Z are the output layers that provide the 3D pose. The problem is that the network is trained on two distinct data sets: one with 2D pose annotations for H, and another with 3D pose annotations for X, Y, Z.

The paper does not go into detail about how the network was trained. Can you give any ideas on how this can be done?
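
To make the question concrete, here is a minimal sketch of one generic approach I can imagine; it is not taken from the paper. The idea is to mix samples from both data sets in one batch and mask each head's loss so it only counts the samples that actually have labels for that head. The function names, the `has_2d`/`has_3d` flags, the choice of MSE, and the weight `w_3d` are all my own assumptions, and NumPy stands in for a real deep learning framework.

```python
import numpy as np

def masked_mse(pred, target, mask):
    """Mean squared error averaged only over samples where mask is True."""
    mask = np.asarray(mask, dtype=bool)
    if not mask.any():
        return 0.0  # no labelled sample for this head in the batch
    # Flatten everything except the batch axis, then take a per-sample MSE.
    per_sample = ((pred - target) ** 2).reshape(len(pred), -1).mean(axis=1)
    # Boolean indexing drops unlabelled samples, so placeholder targets are ignored.
    return float(per_sample[mask].mean())

def multitask_loss(pred_h, gt_h, has_2d, pred_xyz, gt_xyz, has_3d, w_3d=1.0):
    """Combine the 2D (H) and 3D (X, Y, Z) losses for a mixed batch.

    has_2d / has_3d are hypothetical per-sample flags saying which
    annotations exist; w_3d is an assumed weighting hyperparameter.
    """
    loss_2d = masked_mse(pred_h, gt_h, has_2d)
    loss_3d = masked_mse(pred_xyz, gt_xyz, has_3d)
    return loss_2d + w_3d * loss_3d
```

With this, a sample from the 2D data set would contribute nothing to the X, Y, Z loss, and a sample from the 3D data set would contribute nothing to the H loss unless it also carries 2D labels. I don't know whether this is what the authors did, or whether a different scheme is more common.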