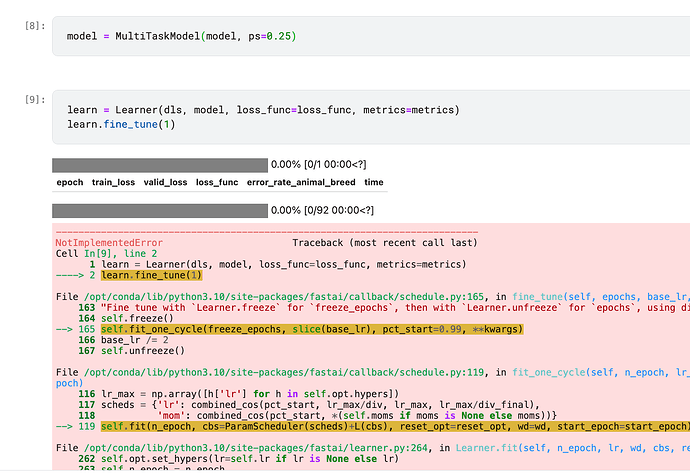

Yes, the part you mentioned works, but when I get to the training part:

```python
learn = Learner(dls, model, loss_func=loss_func, metrics=metrics)
learn.fine_tune(10)
```

I get this error:

```
---------------------------------------------------------------------------
NotImplementedError Traceback (most recent call last)
Input In [10], in <cell line: 3>()
      1 #learn.fit_one_cycle(0,lr=1e-2)
      2 lr = 0.001
----> 3 learn.fine_tune(0, lr)
File ~/.local/lib/python3.8/site-packages/fastai/callback/schedule.py:165, in fine_tune(self, epochs, base_lr, freeze_epochs, lr_mult, pct_start, div, **kwargs)
    163 "Fine tune with `Learner.freeze` for `freeze_epochs`, then with `Learner.unfreeze` for `epochs`, using discriminative LR."
    164 self.freeze()
--> 165 self.fit_one_cycle(freeze_epochs, slice(base_lr), pct_start=0.99, **kwargs)
    166 base_lr /= 2
    167 self.unfreeze()
File ~/.local/lib/python3.8/site-packages/fastai/callback/schedule.py:119, in fit_one_cycle(self, n_epoch, lr_max, div, div_final, pct_start, wd, moms, cbs, reset_opt, start_epoch)
    116 lr_max = np.array([h['lr'] for h in self.opt.hypers])
    117 scheds = {'lr': combined_cos(pct_start, lr_max/div, lr_max, lr_max/div_final),
    118 'mom': combined_cos(pct_start, *(self.moms if moms is None else moms))}
--> 119 self.fit(n_epoch, cbs=ParamScheduler(scheds)+L(cbs), reset_opt=reset_opt, wd=wd, start_epoch=start_epoch)
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:264, in Learner.fit(self, n_epoch, lr, wd, cbs, reset_opt, start_epoch)
    262 self.opt.set_hypers(lr=self.lr if lr is None else lr)
    263 self.n_epoch = n_epoch
--> 264 self._with_events(self._do_fit, 'fit', CancelFitException, self._end_cleanup)
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:199, in Learner._with_events(self, f, event_type, ex, final)
    198 def _with_events(self, f, event_type, ex, final=noop):
--> 199 try: self(f'before_{event_type}'); f()
    200 except ex: self(f'after_cancel_{event_type}')
    201 self(f'after_{event_type}'); final()
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:253, in Learner._do_fit(self)
    251 for epoch in range(self.n_epoch):
    252 self.epoch=epoch
--> 253 self._with_events(self._do_epoch, 'epoch', CancelEpochException)
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:199, in Learner._with_events(self, f, event_type, ex, final)
    198 def _with_events(self, f, event_type, ex, final=noop):
--> 199 try: self(f'before_{event_type}'); f()
    200 except ex: self(f'after_cancel_{event_type}')
    201 self(f'after_{event_type}'); final()
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:247, in Learner._do_epoch(self)
    246 def _do_epoch(self):
--> 247 self._do_epoch_train()
    248 self._do_epoch_validate()
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:239, in Learner._do_epoch_train(self)
    237 def _do_epoch_train(self):
    238 self.dl = self.dls.train
--> 239 self._with_events(self.all_batches, 'train', CancelTrainException)
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:199, in Learner._with_events(self, f, event_type, ex, final)
    198 def _with_events(self, f, event_type, ex, final=noop):
--> 199 try: self(f'before_{event_type}'); f()
    200 except ex: self(f'after_cancel_{event_type}')
    201 self(f'after_{event_type}'); final()
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:205, in Learner.all_batches(self)
    203 def all_batches(self):
    204 self.n_iter = len(self.dl)
--> 205 for o in enumerate(self.dl): self.one_batch(*o)
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:235, in Learner.one_batch(self, i, b)
    233 b = self._set_device(b)
    234 self._split(b)
--> 235 self._with_events(self._do_one_batch, 'batch', CancelBatchException)
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:199, in Learner._with_events(self, f, event_type, ex, final)
    198 def _with_events(self, f, event_type, ex, final=noop):
--> 199 try: self(f'before_{event_type}'); f()
    200 except ex: self(f'after_cancel_{event_type}')
    201 self(f'after_{event_type}'); final()
File ~/.local/lib/python3.8/site-packages/fastai/learner.py:216, in Learner._do_one_batch(self)
    215 def _do_one_batch(self):
--> 216 self.pred = self.model(*self.xb)
    217 self('after_pred')
    218 if len(self.yb):
File ~/.local/lib/python3.8/site-packages/torch/nn/modules/module.py:1130, in Module._call_impl(self, *input, **kwargs)
   1126 # If we don't have any hooks, we want to skip the rest of the logic in
   1127 # this function, and just call forward.
   1128 if not (self._backward_hooks or self._forward_hooks or self._forward_pre_hooks or _global_backward_hooks
   1129 or _global_forward_hooks or _global_forward_pre_hooks):
-> 1130 return forward_call(*input, **kwargs)
   1131 # Do not call functions when jit is used
   1132 full_backward_hooks, non_full_backward_hooks = [], []
Input In [5], in MultiTaskModel.forward(self, x)
     22 def forward(self, x):
---> 23 features = self.encoder(x)
     24 class1 = self.fc1(features[-1]) # Assuming the last layer's output is used
     25 class2 = self.fc2(features[-1]) # Assuming the last layer's output is used
File ~/.local/lib/python3.8/site-packages/torch/nn/modules/module.py:1130, in Module._call_impl(self, *input, **kwargs)
   1126 # If we don't have any hooks, we want to skip the rest of the logic in
   1127 # this function, and just call forward.
   1128 if not (self._backward_hooks or self._forward_hooks or self._forward_pre_hooks or _global_backward_hooks
   1129 or _global_forward_hooks or _global_forward_pre_hooks):
-> 1130 return forward_call(*input, **kwargs)
   1131 # Do not call functions when jit is used
   1132 full_backward_hooks, non_full_backward_hooks = [], []
File ~/.local/lib/python3.8/site-packages/torch/nn/modules/container.py:139, in Sequential.forward(self, input)
    137 def forward(self, input):
    138 for module in self:
--> 139 input = module(input)
    140 return input
File ~/.local/lib/python3.8/site-packages/torch/nn/modules/module.py:1130, in Module._call_impl(self, *input, **kwargs)
   1126 # If we don't have any hooks, we want to skip the rest of the logic in
   1127 # this function, and just call forward.
   1128 if not (self._backward_hooks or self._forward_hooks or self._forward_pre_hooks or _global_backward_hooks
   1129 or _global_forward_hooks or _global_forward_pre_hooks):
-> 1130 return forward_call(*input, **kwargs)
   1131 # Do not call functions when jit is used
   1132 full_backward_hooks, non_full_backward_hooks = [], []
File ~/.local/lib/python3.8/site-packages/torch/nn/modules/module.py:201, in _forward_unimplemented(self, *input)
    190 def _forward_unimplemented(self, *input: Any) -> None:
    191 r"""Defines the computation performed at every call.
    192
    193 Should be overridden by all subclasses.
    (...)
    199 registered hooks while the latter silently ignores them.
    200 """
--> 201 raise NotImplementedError(f"Module [{type(self).__name__}] is missing the required \"forward\" function")
NotImplementedError: Module [ModuleList] is missing the required "forward" function.
```
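The last frame shows an `nn.ModuleList` being called like a layer. `nn.ModuleList` is only a container and has no `forward()` of its own. The `Sequential.forward` frame suggests that `self.encoder` is an `nn.Sequential` wrapping one, although the model definition itself isn't shown. If so, `self.encoder(x)` cannot work. Below is a minimal sketch under that assumption. The two conv layers, the pooling step, and the channel count are hypothetical stand-ins, not the real encoder. The sketch first reproduces the error, then iterates over the `ModuleList` explicitly and collects each stage's output, so that `features[-1]` exists as the `forward` in the traceback expects.

```python
import torch
import torch.nn as nn

# Hypothetical stand-in for the encoder's stages (not the real backbone).
blocks = nn.ModuleList([
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.Conv2d(8, 16, kernel_size=3, padding=1),
])

# Assumed structure: a Sequential whose child is a ModuleList.
# Sequential.forward calls each child, and ModuleList has no forward(),
# so this raises the same NotImplementedError as in the traceback.
broken_encoder = nn.Sequential(blocks)
x = torch.randn(2, 3, 32, 32)
try:
    broken_encoder(x)
except NotImplementedError as err:
    print("reproduced:", err)


class MultiTaskModel(nn.Module):
    """Sketch: run the ModuleList stages by hand and keep every stage's output."""

    def __init__(self, blocks, in_features, n_class1, n_class2):
        super().__init__()
        self.blocks = blocks
        # Assumption: pool the last feature map so a Linear head can consume it.
        self.pool = nn.AdaptiveAvgPool2d(1)
        self.fc1 = nn.Linear(in_features, n_class1)
        self.fc2 = nn.Linear(in_features, n_class2)

    def forward(self, x):
        features = []
        for block in self.blocks:  # iterate instead of calling the ModuleList
            x = block(x)
            features.append(x)
        last = self.pool(features[-1]).flatten(1)  # shape: (batch, in_features)
        return self.fc1(last), self.fc2(last)


model = MultiTaskModel(blocks, in_features=16, n_class1=5, n_class2=3)
out1, out2 = model(x)
print(out1.shape, out2.shape)  # torch.Size([2, 5]) torch.Size([2, 3])
```

If the real encoder is built differently (for example, a feature extractor that already returns a list of feature maps), the same idea applies: call the individual modules, never the `ModuleList` itself.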