Hi all,

After going through lectures 8 and 9, I wanted to create a model for pupil detection. To do that, I annotated approximately 200 images and wrote functions to transform the annotation .json files, which contain two points for every image. I am now trying to figure out why most of the y variables are not rescaled correctly.

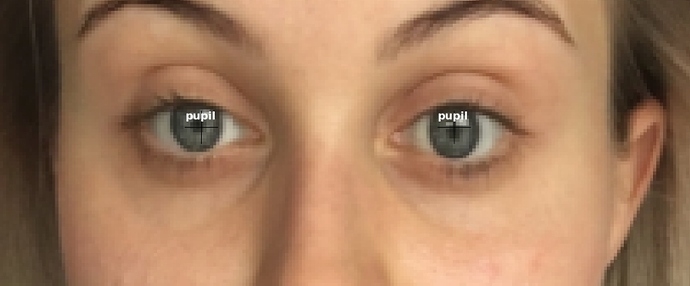

Below is an example of (almost) correctly rescaled output variables (the images have been cropped before uploading them here):

Source:

Rescaled:

Model

The model and augmentations are defined as follows:

    f_model = resnet34
    sz = 224
    bs = 8

    augs = [RandomFlip(tfm_y=TfmType.COORD),
            RandomLighting(0.1, 0.1, tfm_y=TfmType.COORD)]
    tfms = tfms_from_model(f_model, sz, crop_type=CropType.NO, tfm_y=TfmType.COORD, aug_tfms=augs)
    md = ImageClassifierData.from_csv(PATH, IMAGES, COORDS_CSV, tfms=tfms, continuous=True, bs=bs)

Variable checking

In order to check the dataset, I ran the following command:

x,y=next(iter(md.val_dl))

Upon checking the batch, I noticed that most of the outputs are zero:

y output:

    tensor([[  0.,   0.,   0.,   0.],
            [  0.,   0.,   0.,   0.],
            [  0.,   0.,   0.,   0.],
            [  0.,   0.,   0.,   0.],
            [  0.,   0.,   0.,   0.],
            [  0.,   0.,   0.,   0.],
            [ 57.,  69.,  57., 146.],
            [  0.,   0.,   0.,   0.]], device='cuda:0')
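To see how widespread the problem is, a small check like the following could count the all-zero targets in a batch. This is a minimal sketch in plain Python using the values printed above; `zero_rows` is a hypothetical helper, and with a real batch the tensor would first need converting to a nested list (for example with `y.cpu().tolist()`, assuming a PyTorch tensor).

```python
# Target values copied from the batch printed above (one row per image).
batch_y = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [57.0, 69.0, 57.0, 146.0],
    [0.0, 0.0, 0.0, 0.0],
]


def zero_rows(rows):
    """Return the indices of rows whose values are all zero (hypothetical helper)."""
    return [i for i, row in enumerate(rows) if all(v == 0 for v in row)]


bad = zero_rows(batch_y)
print(f"{len(bad)} of {len(batch_y)} targets are all zero: indices {bad}")
```

Running the same check over every batch of the validation loader would show whether the zeros are limited to a few images or affect most of the dataset.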

I checked the image that has non-zero values (7th in the batch) and tried to figure out what the difference might be between this one, which has correct output values, and the rest.

So the source image has the following properties:

size (W x H) = 1086 x 919

annotated pupils (P1(y, x), P2(y, x)) = [(233, 333), (242, 712)]

The rescaled y values should (I guess) be calculated as follows:

pupil 1:

y = original_y / H * 224 = 233 / 919 * 224 = 56.8 (correct)

x = original_x / W * 224 = 333 / 1086 * 224 = 68.7 (correct)

pupil 2:

y = original_y / H * 224 = 242 / 919 * 224 = 59 (don’t know why it is not the same as rescaled output (57))

x = original_x / W * 224 = 712 / 1086 * 224 = 147 (not 146 as the output)

Considering these differences for the second output pair, I am wondering whether this calculation is correct or whether the source values should be rescaled in some different manner.
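The hand calculation above can be reproduced with a few lines of Python. This is only a sketch of the proportional rescaling assumed in this post (a plain resize to 224 x 224 with no cropping or flipping); `rescale_point` is a hypothetical helper and not part of the fastai library.

```python
def rescale_point(y, x, height, width, size=224):
    """Scale a (row, column) point from the original image to a size x size image."""
    return y / height * size, x / width * size


# Properties of the 7th image in the batch, as listed above.
height, width = 919, 1086
points = [(233, 333), (242, 712)]

for y, x in points:
    new_y, new_x = rescale_point(y, x, height, width)
    print(f"({y}, {x}) -> ({new_y:.1f}, {new_x:.1f})")
```

Comparing these values with the actual `y` output makes the mismatch for the second point easy to see: only the first point matches the proportional formula.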

The first image from the batch, which has all zero output values has the following properties:

size (W x H) = 1339 x 1124

annotated pupils = [(251, 449), (247, 877)]

As the equations above don’t give zero for this image, I don’t know what the main cause of the wrong (unexpected) transformations is.
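For comparison, the sketch below applies the same proportional formula to both images and also reports the order of the two annotated points. The helpers `expected_target` and `is_top_left_first` are hypothetical. The only difference visible in the numbers quoted here is that in the all-zero image the first point lies below the second (251 > 247); whether the coordinate transform is sensitive to that ordering is an assumption that would need verifying against the library source.

```python
def expected_target(points, height, width, size=224):
    """Proportionally rescale [(y, x), (y, x)] into a flat 4-value target."""
    return [v for y, x in points for v in (y / height * size, x / width * size)]


def is_top_left_first(points):
    """True if the first point is above and to the left of the second."""
    (y1, x1), (y2, x2) = points
    return y1 < y2 and x1 < x2


# (annotated points, height, width) for the two images described in this post.
images = {
    "7th in batch (non-zero output)": ([(233, 333), (242, 712)], 919, 1086),
    "1st in batch (all-zero output)": ([(251, 449), (247, 877)], 1124, 1339),
}

for name, (points, height, width) in images.items():
    target = [round(v, 1) for v in expected_target(points, height, width)]
    print(name, target, "top-left first:", is_top_left_first(points))
```

If the ordering does turn out to matter, sorting each pair of points before writing the CSV would be one thing to try.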

As I have been struggling with this issue for days, I would really appreciate any help.

Best regards,

Niko