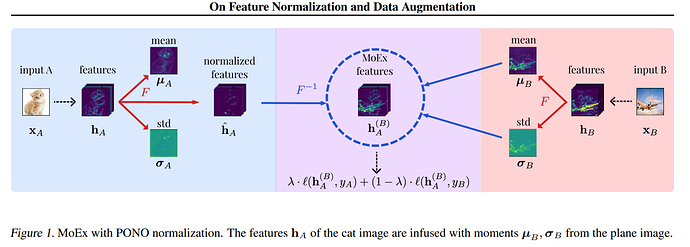

This paper just came out on Moment Exchange (MoEx), which basically takes the mean and std dev of one image's feature map and injects them into another image's features, then interpolates the labels (somewhat like an internal mixup).
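To make the idea concrete, here is a rough NumPy sketch of the moment swap as I understand it. It is not the authors' code. The function names (`moment_exchange`, `mix_labels`), taking the moments across channels at each spatial position, and the `eps` value are all my own assumptions for illustration.

```python
import numpy as np

def moment_exchange(feat_a, feat_b, eps=1e-5):
    """Normalize feat_a, then re-scale it with feat_b's moments.

    feat_a, feat_b: feature maps of shape (C, H, W) from the same layer.
    Assumption: mean/std are computed across channels at each (h, w) position.
    """
    mean_a = feat_a.mean(axis=0, keepdims=True)
    std_a = np.sqrt(feat_a.var(axis=0, keepdims=True) + eps)
    mean_b = feat_b.mean(axis=0, keepdims=True)
    std_b = np.sqrt(feat_b.var(axis=0, keepdims=True) + eps)

    # Strip image A's own moments, then inject image B's.
    normalized = (feat_a - mean_a) / std_a
    return normalized * std_b + mean_b

def mix_labels(label_a, label_b, lam):
    """Interpolate one-hot (or soft) labels; lam weights image A (hypothetical helper)."""
    return lam * label_a + (1.0 - lam) * label_b
```

In a real network this would sit after an early layer, with the rest of the forward pass running on the returned features and the loss computed against the mixed label.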

They show in their testing that MoEx + CutMix (an input augmentation) yields the best results, beating regular mixup, manifold mixup, etc.
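For anyone who hasn't used CutMix: it pastes a random box from one image into another and weights the labels by the area that survives. A minimal NumPy sketch of that, where the function name `cutmix`, the `(C, H, W)` layout, and the box sampling details are my assumptions rather than anything from the paper:

```python
import numpy as np

def cutmix(img_a, img_b, lam, rng):
    """Paste a random box from img_b into img_a.

    img_a, img_b: arrays of shape (C, H, W).
    lam: target fraction of img_a to keep (box area is roughly 1 - lam).
    Returns the mixed image and the actual fraction of img_a kept.
    """
    _, h, w = img_a.shape
    cut_ratio = np.sqrt(1.0 - lam)
    cut_h, cut_w = int(h * cut_ratio), int(w * cut_ratio)

    # Random box center; the box is clipped at the image borders.
    cy, cx = rng.integers(h), rng.integers(w)
    y1, y2 = np.clip([cy - cut_h // 2, cy + cut_h // 2], 0, h)
    x1, x2 = np.clip([cx - cut_w // 2, cx + cut_w // 2], 0, w)

    mixed = img_a.copy()
    mixed[:, y1:y2, x1:x2] = img_b[:, y1:y2, x1:x2]

    # Clipping can shrink the box, so recompute the label weight from the real area.
    lam_adj = 1.0 - (y2 - y1) * (x2 - x1) / (h * w)
    return mixed, lam_adj
```

The returned `lam_adj` is what you would use to weight the two labels in the loss.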

Their GitHub code link isn’t working, but it will be interesting to test this out shortly and see if it replicates on ImageWoof etc.