So, we are trying to model tabular data with 200 numerical features for a binary classification problem. The class imbalance is 1:9, so we are using ROC AUC as the training metric. The approach is to train three predictors independently (LGBM, XGB, and DL, a fastai tabular learner) and take the average of their predicted probabilities as the probability of the positive class. The individual AUC scores are XGB: 0.898, LGB: 0.900, and DL: 0.879, but the averaged model gives an AUC of only 0.7. This seems strange.
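A minimal sketch of the averaging step as described, assuming each model's positive-class probabilities are already available as 1-D arrays aligned row-for-row with the labels. If a model returns a probability matrix with one column per class, the positive-class column has to be selected first. The function name `evaluate_average` is hypothetical, and the synthetic scores only stand in for real model outputs; this shows the mechanics, not the actual data.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def evaluate_average(y_true, preds):
    """Return each model's ROC AUC plus the AUC of the plain probability average.

    `preds` maps a model name to a 1-D array of positive-class probabilities.
    Every array is assumed to be aligned row-for-row with `y_true`.
    """
    y_true = np.asarray(y_true)
    arrays = {name: np.asarray(p, dtype=float).ravel() for name, p in preds.items()}
    for name, p in arrays.items():
        # Cheap sanity check: a length mismatch means the rows cannot line up.
        if p.shape[0] != y_true.shape[0]:
            raise ValueError(f"{name}: {p.shape[0]} predictions for {y_true.shape[0]} labels")
    scores = {name: roc_auc_score(y_true, p) for name, p in arrays.items()}
    # Stack to shape (n_models, n_rows) and take the unweighted mean per row.
    averaged = np.vstack(list(arrays.values())).mean(axis=0)
    scores["average"] = roc_auc_score(y_true, averaged)
    return scores


# Synthetic stand-in data (not the real dataset): roughly 10% positives.
rng = np.random.default_rng(0)
y = (rng.random(2000) < 0.1).astype(int)
signal = 2.0 * y + rng.normal(size=y.size)
preds = {
    name: 1.0 / (1.0 + np.exp(-(signal + rng.normal(scale=0.5, size=y.size))))
    for name in ("xgb", "lgb", "dl")
}
print(evaluate_average(y, preds))
```

The length check only catches gross mismatches; predictions that have the right length but a different row order than the labels would still pass it and silently lower the averaged AUC.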

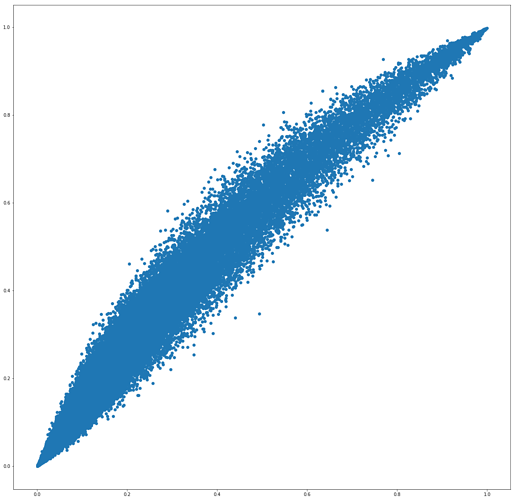

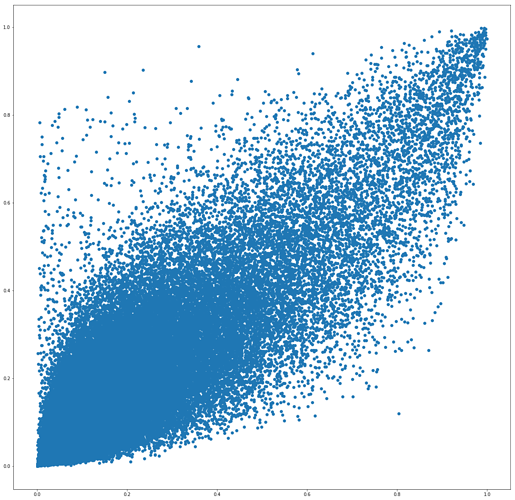

Looking at pairwise scatter plots of the models' predictions, we observe the following.

The predictions of LGB and XGB are highly correlated.

However, the scatter plots of the DL model against the tree-based models show much weaker correlation.
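The scatter plots can also be summarized numerically. A minimal sketch, assuming the predictions are aligned 1-D arrays keyed by model name; `pairwise_correlations` is a hypothetical helper. It reports Pearson correlation (linear agreement of the raw probabilities) and Spearman correlation (agreement of the rankings), the latter computed as the Pearson correlation of the ranks. Spearman is arguably the more relevant number here because ROC AUC depends only on how the scores order the rows.

```python
import itertools

import numpy as np
from scipy.stats import rankdata


def pairwise_correlations(preds):
    """Return {(model_a, model_b): (pearson, spearman)} for every pair of models."""
    out = {}
    for a, b in itertools.combinations(preds, 2):
        pa = np.asarray(preds[a], dtype=float)
        pb = np.asarray(preds[b], dtype=float)
        pearson = np.corrcoef(pa, pb)[0, 1]
        # Spearman correlation = Pearson correlation of the (average-tied) ranks.
        spearman = np.corrcoef(rankdata(pa), rankdata(pb))[0, 1]
        out[(a, b)] = (float(pearson), float(spearman))
    return out


# Synthetic illustration only: two near-duplicate models and one that differs.
rng = np.random.default_rng(1)
base = rng.normal(size=500)
example = {
    "lgb": base + rng.normal(scale=0.1, size=500),
    "xgb": base + rng.normal(scale=0.1, size=500),
    "dl": 0.3 * base + rng.normal(size=500),
}
for pair, (pearson, spearman) in pairwise_correlations(example).items():
    print(pair, f"pearson={pearson:.2f}", f"spearman={spearman:.2f}")
```

A large gap between the two numbers for a pair suggests the models broadly agree on ordering but their probabilities sit on different scales.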

My question is: is this why model averaging performs worse than the individual predictors?

Given predictors that behave like this, what would be a good way to combine their predictions?
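Since ROC AUC depends only on how the combined scores rank the rows, one commonly used alternative to averaging raw probabilities is rank averaging: each model's scores are converted to ranks before averaging, so a model whose probabilities sit on a very different scale neither dominates nor gets drowned out. A minimal sketch, assuming aligned 1-D score arrays; `rank_average` and its `weights` argument are hypothetical names, and whether it helps on this data would have to be checked on a validation set. It will not repair predictions whose rows are misaligned with the labels.

```python
import numpy as np
from scipy.stats import rankdata


def rank_average(preds, weights=None):
    """Combine models by an (optionally weighted) average of normalised ranks.

    `preds` is a sequence of 1-D score arrays, one per model, aligned row-for-row.
    """
    scores = np.vstack([np.asarray(p, dtype=float).ravel() for p in preds])
    n_rows = scores.shape[1]
    # Rank each model separately (ties share their average rank), then scale to (0, 1].
    ranks = np.vstack([rankdata(row) / n_rows for row in scores])
    # np.average treats weights=None as equal weights.
    return np.average(ranks, axis=0, weights=weights)


# Two models on very different scales contribute equally after ranking.
print(rank_average([[0.01, 0.02, 0.03], [100.0, 300.0, 200.0]]))
```

Learning the combination instead (stacking, for example a logistic regression fitted on the three models' out-of-fold predictions) is another common option; it needs out-of-fold predictions so the meta-model is not trained on scores the base models have already fitted.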