I am doing an experiment to see if CycleGAN could learn spatial transform on single/ multiple objects. I got into a mode collapse issue and would like to seek for advice from dear fellows. Meanwhile, I would like to share with you what I have got.

MOTIVATIONS

- a number of literatures is criticizing the weakness of CNN to learn (object-based) spatial transform. I would like to check out if the claim is generally true.

- Many works done on CycleGAN is about transfer on texture / style. I would like to see if CycleGAN would also work for transfer on spatial pattern. And as one step further, to see if the model could learn to apply spatial transform locally on different objects.

DATASETS

I simulate 2 sets of data with MNIST for the experiment:

Dataset 1 – Easier Case

Domain A data is a small digit on bottom left, domain B is a big digit on bottom right.

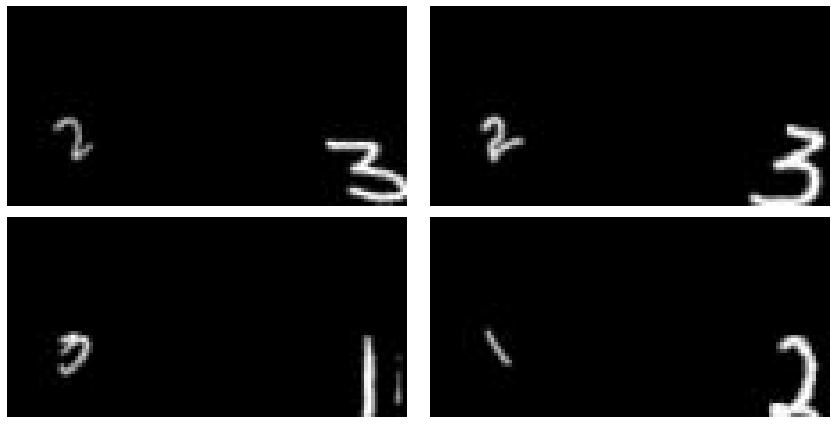

Samples are shown below. 4 blocks are presented. Each block has an image from domain A (on left) and an image from domain B (on right):

Dataset 2 – Harder Case

This is slightly harder than Dataset 1 because the image contains 2 digits. Domain A data are two smaller digits, while domain B data are two bigger digits. Similarly, digits in the same domain share the same spatial patterns. (e.g. digits in domain A are either in bottom left or top right).

Examples are shown below:

CURRENT RESULTS

To my surprise, CycleGAN managed to learn proper spatial transform on the digits! (I originally expect it to fail, and hence offer me an excuse to try other models =P)

As shown in the example below, digit on bottom left (indicated in left-most column) are properly translated to bottom right and it is properly enlarged (indicated in right-most column)!

Results from dataset 2 are shown below:

ISSUES

-

as you may notice from the above results, although the model could learn spatial transform, it fails to generate samples of variety. It is a well-known issue for GAN – mode collapse.

-

in addition, the transformed image failed to preserve the original digits. (e.g. if the image has a “4” and a “5”. Its transformed image should also have a “4” and a “5”)

Could anyone offer me a 2-cent on how I could get away from this mode collapse issue? As a reference, you could find my implementation here.

P.S.

My recent research interests revolve around generative model, image-to-image translation and scene composition / layout composition. If anyone share the same interests/ interested to learn more about this topics, feel free to discuss here!