A few days ago I was searching for some books for my gf and stumbled upon a book [4b] on Amazon with a confusing rating of 3/5: it had 9 5-star reviews, no other reviews, and a single 2-star rating with no review. I found that discrepancy strange, to say the least, so I started investigating and contacted Amazon customer service. The reply was that this is ML’s doing [3]. I kept digging, since it made absolutely no sense that a company would pull a completely fake negative rating out of thin air. I asked for help with this investigation on reddit [1], but it didn’t go too well: instead of investigating the abnormal behavior, most commenters tried to discredit my findings. Still, I got a lot of useful input and learned a few things about Amazon’s inconsistent behavior, which depends on whether you’re logged in or not.

Then last night I thought of checking my own first book [4a], which I published with O’Reilly some 18 years ago. Lo and behold, my book was abused by Amazon ML in exactly the same way.

I hope at least some of you here will try to follow the math and see that it doesn’t make sense.

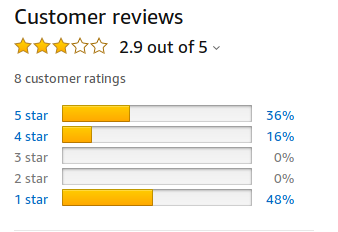

How do you take 3 5-star reviews, 4 4-star reviews, and 1 rating of 1 star with no review, and turn that into a rating of 3/5? The real average rating of the book is ~4.5; yesterday it was pushed down to 3.1, and this morning it was pushed down to 2.9, without any changes to the ratings. I know that this single 1-star rating is fake, because it was given a hugely disproportionate weight. The same was done with the original book I was investigating, where a single fake 2-star rating was added with a disproportionate weight, pulling the book’s otherwise all-5-star rating down to 3 stars! If you are not a published author, you may not be aware that a rating of 3 for a book on Amazon is a death sentence for that book.
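Here is the plain arithmetic as a minimal Python sketch. It assumes the displayed score would be a simple, unweighted mean of the star histogram (which Amazon says it is not, and that is exactly the point); `plain_mean` is just a name I made up for illustration.

```python
def plain_mean(histogram: dict[int, int]) -> float:
    """Unweighted average of a star histogram given as {stars: count}."""
    total_ratings = sum(histogram.values())
    total_stars = sum(stars * count for stars, count in histogram.items())
    return total_stars / total_ratings

# My book [4a]: 3 five-star reviews, 4 four-star reviews, 1 one-star rating.
with_one_star = plain_mean({5: 3, 4: 4, 1: 1})
without_one_star = plain_mean({5: 3, 4: 4})

print(f"{with_one_star:.2f}")     # 4.00
print(f"{without_one_star:.2f}")  # 4.43
```

Even counting the 1-star rating at full weight, the plain average is 4.0, nowhere near the 3.1 or 2.9 shown on the page.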

It baffles me that hundreds of people read that exposé on reddit [1] and everybody thought it was OK, and some thought that I was crying wolf and worked hard at trying to prove it. What is the point of discussing fairness in AI if, when unfair behavior goes into production, we all just sit back and watch complacently?

One commenter correctly pointed out that I had a false expectation that Amazon would treat all the products it lists fairly. It’s just a notice board where products are placed, and of course it doesn’t care which products sell and which are buried, since it profits either way. As long as there are several products to choose from in each category and there is a need to buy, who cares about fairness?

It’s also clear that Amazon is trying to battle the myriad fake reviews, and while I think that’s fantastic, it clearly doesn’t care that along the way its ML model destroys vendors and authors who didn’t lie, but who can’t prove that their book/product was really purchased elsewhere and that the review is real. None of the reviews of my book are fake; I have not solicited any of them. Those people usually bought my book at O’Reilly conferences via Powell’s Books, not Amazon, and yet those reviews are now clearly treated as fake. (So if you ever sell something on Amazon, make sure your reviews are only of Verified Purchase “quality”.)

The main point I’m trying to drive home is this: if you develop an ML engine and you have unverified ratings, how can you possibly come up with an algorithm or model that “balances things out by adding fake negative ratings”? If you don’t trust the reviews/ratings, then remove them altogether and say “this product has no verified reviews”. Can you see how pulling a random number out of thin air and assigning it as a rating to a product you know nothing about is a terrible practice that makes no logical sense whatsoever? Unfortunately, it appears that only a minority of products are affected, so there is no class action suit in the works here.

If you do care to help me investigate the unfair representation of products on Amazon, here is the data I have so far:

- The reddit discussion. Unfortunately it didn’t lead to any constructive discussion, and 40% of the redditors who have read it so far (in 23 hours) downvoted the post.

- Amazon’s customer service reply, from which I learned that it’s ML’s doing and not a fixed algorithm:

  > The overall star rating for a product is determined by a machine-learned model that considers factors such as the age of the review, helpful votes by customers, and whether the reviews are from verified purchasers. Similar machine-learned factors help determine a review’s ranking in the list of reviews. The system continues to learn which reviews are most helpful to customers and improves the experience over time. Any changes that customers may currently experience in the review ranking or star ratings is expected as we continue to fine-tune our algorithms.

- Several books that I discovered were affected by this ML representation (note that you are likely to see different ratings depending on whether you’re logged in or not):

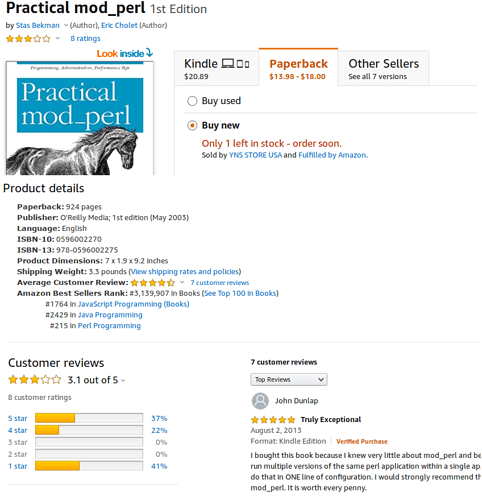

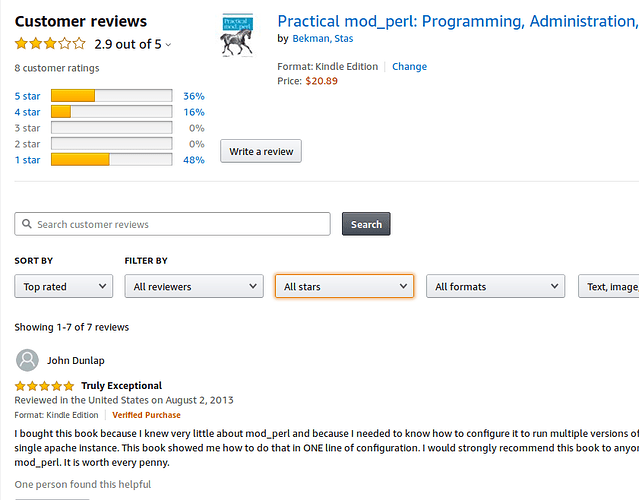

4a. My book. Here we have $(3 \cdot 5 + 4 \cdot 4 + 1 \cdot 1)/8 = 4.0$ somehow adding up to 2.9. Note that the 1-star rating is not real and was added artificially with a 48% weight against all the real reviews.

Here is a snapshot of my book yesterday:

Here is a snapshot of my book this morning (it dropped from 3.1 to 2.9; notice the weight change):

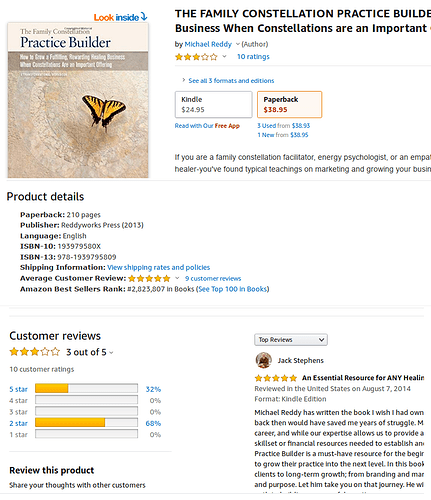

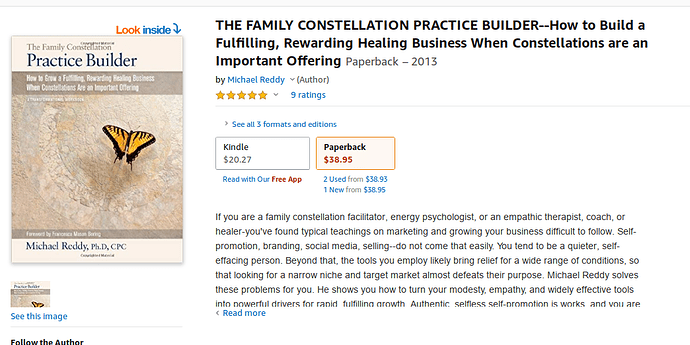

4b. Another book. Here we have $(9 \cdot 5 + 1 \cdot 2)/10 = 4.7$ somehow adding up to 3.0. Again, there is a single fake 2-star rating, this time with a weight of 68%! Are you noticing the pattern?

Here is a snapshot of that other book (I don’t know the author and haven’t read the book):

Clearly, I need to find more examples, since otherwise nobody cares to pay attention, but I have yet to find a quick way to do it. It seems to be an edge case that affects only some older books with few reviews, most or all of them without the Verified Purchase tag. It probably affects some non-book products too.
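One possible screening heuristic, sketched in Python: compare each listing’s displayed score with the plain mean of its star histogram, and flag listings where the displayed score sits well below it. The `Listing` class, the `suspicious` helper and the 0.5-star threshold are my own illustrative assumptions; the two sample listings use the numbers from 4a and 4b above, and gathering histograms for other listings is left to you (keep in mind the numbers differ depending on whether you’re logged in).

```python
from dataclasses import dataclass


@dataclass
class Listing:
    title: str
    histogram: dict[int, int]  # {stars: number of ratings}
    displayed: float           # score shown on the product page

    def plain_mean(self) -> float:
        """Unweighted mean of the histogram."""
        n = sum(self.histogram.values())
        return sum(s * c for s, c in self.histogram.items()) / n

    def gap(self) -> float:
        """How far the displayed score sits below the unweighted mean."""
        return self.plain_mean() - self.displayed


def suspicious(listings: list[Listing], threshold: float = 0.5) -> list[Listing]:
    """Listings whose displayed score is at least `threshold` stars below
    their plain mean, largest gap first."""
    flagged = [x for x in listings if x.gap() >= threshold]
    return sorted(flagged, key=lambda x: x.gap(), reverse=True)


listings = [
    Listing("4a. my book", {5: 3, 4: 4, 1: 1}, 2.9),
    Listing("4b. another book", {5: 9, 2: 1}, 3.0),
]
for x in suspicious(listings):
    print(f"{x.title}: mean {x.plain_mean():.2f}, shown {x.displayed}, gap {x.gap():.2f}")
```

A big gap doesn’t prove a rating is fake, since Amazon says the score is deliberately weighted; it only tells you which listings are worth a closer look.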

Edit: found a bunch of similar examples:

- Lean Python: adds a fake 2-star rating, pulling a single unverified 5-star review down to 3.2 with a 60% weight. It only does that if you’re logged in; it shows 5 stars if you’re not.

- Python Machine Learning: 5/5 => 3/5. This one is odd, as it may include a non-amazon.com review, but that didn’t happen with the other books, so it’s possibly the same story. If you log out, it shows 5/5.

- Python Programming: logged in 3/5 with one fake 1-star rating, logged out 5/5. Clearly international reviews didn’t count here, since there is another 5-star review there.

- Python 3: Pocket Primer: same story, 3/5 logged in, 5/5 logged out.
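The weight percentages I quote come from a back-of-the-envelope model, sketched here in Python. It assumes the displayed score is a weighted mean in which the single suspect rating gets a share w of the total weight and the real reviews share the remaining 1 - w, so solving displayed = (1 - w) * real_avg + w * suspect for w gives the implied weight. This is my assumption about how the numbers combine, not Amazon’s documented formula, and `implied_weight` is a made-up name.

```python
def implied_weight(real_avg: float, displayed: float, suspect_stars: float) -> float:
    """Share of the total weight the single suspect rating would need so that
    (1 - w) * real_avg + w * suspect_stars == displayed."""
    if real_avg == suspect_stars:
        raise ValueError("suspect rating equals the real average; weight is undetermined")
    return (real_avg - displayed) / (real_avg - suspect_stars)


# Lean Python: one 5-star review plus one 2-star rating, shown as 3.2.
w_lean = implied_weight(real_avg=5.0, displayed=3.2, suspect_stars=2)
# Book 4b: nine 5-star reviews plus one 2-star rating, shown as 3.0.
w_4b = implied_weight(real_avg=5.0, displayed=3.0, suspect_stars=2)

print(f"{w_lean:.0%}")  # 60%
print(f"{w_4b:.0%}")    # 67%
```

Displayed scores are rounded to one decimal, so these weights are approximate: 4b’s 3.0 gives about 67%, while an unrounded 2.96 would give the 68% quoted for it.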

Your careful analysis is very welcome. I hope at least someone out there cares.

P.S. My book is 18 years old; it’s a 900-page tome on mod_perl, a once very popular Apache module. That technology has been mostly forgotten nowadays, so I’m not expecting any sales from it, but that doesn’t change anything. Who gave Amazon the right to misrepresent the quality of my book, or anybody else’s, ML or not?

Update:

It took a few days to solve the puzzle. I have made a massive update to the original article, including all the discovered information, and made it easier to read.

The bottom line is that Amazon:

1. Integrates international reviews into the ratings if you’re logged in

2. Gives older reviews a significantly lower weight (it can be 1/10 or even less)

3. Gives reviews of verified purchases higher weights

Point #2 of this new approach can have a tremendous negative impact on authors of outdated tech books. If you don’t want your older book’s rating to keep getting lowered, take it off Amazon.
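To illustrate why point #2 hurts older books, here is a toy model of the weighting I inferred, in Python. Every number in it (an exponential age decay with a 2-year half-life, a 3x boost for verified purchases, the review ages) is a made-up assumption for illustration, not Amazon’s actual parameters; the point is only the direction of the effect: once old ratings are down-weighted, a single newer low rating can dominate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rating:
    stars: int
    age_years: float
    verified: bool


def weighted_score(ratings: list[Rating],
                   half_life_years: float = 2.0,
                   verified_boost: float = 3.0) -> float:
    """Weighted mean star rating under a toy model (assumed, not Amazon's):
    a rating's weight halves every `half_life_years`, and verified
    purchases count `verified_boost` times as much."""
    total = 0.0
    weight_sum = 0.0
    for r in ratings:
        w = 0.5 ** (r.age_years / half_life_years)
        if r.verified:
            w *= verified_boost
        total += w * r.stars
        weight_sum += w
    return total / weight_sum


# Hypothetical: seven old unverified reviews (3 x 5 stars, 4 x 4 stars).
old_reviews = [Rating(5, 15, False)] * 3 + [Rating(4, 15, False)] * 4

# Baseline: the 1-star rating is as old as the rest, so all weights are equal.
same_age = weighted_score(old_reviews + [Rating(1, 15, False)])
# Hypothetical: the same 1-star rating, but 12 years newer than the rest.
newer_low = weighted_score(old_reviews + [Rating(1, 3, False)])

print(f"same age: {same_age:.2f}, newer 1-star: {newer_low:.2f}")
```

With equal ages the model collapses to the plain mean of 4.0; making the lone low rating newer drags the score far down. Changing the made-up parameters changes the magnitude, not the direction.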

I’m in the process of figuring out how to remove my books from Amazon.

In the article you will find the full summary, plus recommendations for tech book authors on Amazon and for Amazon marketplace designers.

I don’t have any sources confirming my findings; they are derived purely from looking at many, many listings and trying to make the math work.

Thank you.