From my understanding of stateful RNN where batch size > 1, the keras documentation states

all batches have the same number of samples

If X1 and X2 are successive batches of samples, then X2[i] is the follow-up sequence to X1[i], for every i.

This essentially means that the data must be “interleaved” in batches as discussed here:

https://www.reddit.com/r/MachineLearning/comments/4k3i2n/keras_stateful_lstm_what_am_i_missing/

and also here: Does the stateful_lstm.py example make sense? · Issue #1820 · keras-team/keras · GitHub

My understanding the training data has to be re-constructed to look like this:

Sequence: a b c d e f g h i j k l m n o p q r s t u v w x y z 1 2 3 4 5 6

BATCH 0

sequence 0 of batch:

a

b

c

d

sequence 1 of batch:

q

r

s

t

BATCH 1

sequence 0 of batch:

e

f

g

h

sequence 1 of batch:

u

v

w

x

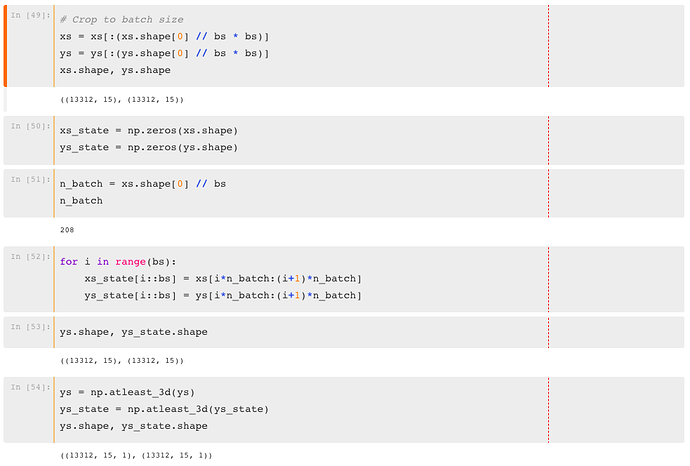

However, it doesn’t look like @jeremy is doing this in Lesson 6 in his notebook for the stateful LSTM (and the batch size = 64). Did Jeremy make a mistake, or do I have a mis-understanding?