Hello fast.ai community,

With all the awesome content taught in our course, I have become increasingly interested in getting a holistic view of DL research and progress.

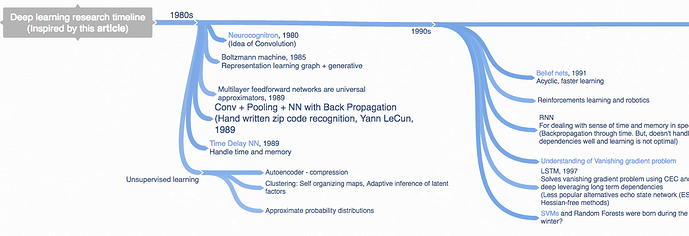

Click here for the complete mind map

In this mind map, I am focusing on three things:

- Algorithms and NN history: a timeline of the important milestones that led to the current DL revolution, plus the pre-DL state of the art and which parts of it are still relevant.

- Hardware: a timeline of hardware and associated software milestones.

- Datasets: releases of datasets that significantly advanced research and the state of the art.

All three pieces are color coded. I created this one a few weeks back and had been waiting to “complete” it (which, in retrospect, it never would be). But I realized it would be best to share the work in progress, get early feedback, and possibly crowd-source the missing links.

Apart from this, I am also making another mind map for application-specific progress: CV, NLP, and speech.

Please let me know if you think I have missed any major milestones. I am sure I have.

Thanks,