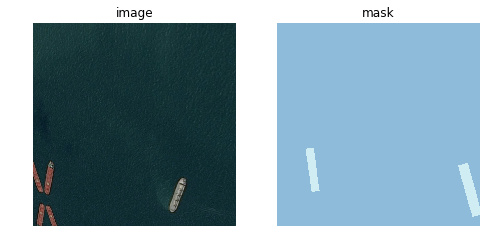

In my implementation, the masks somehow end up transformed differently from their corresponding images. Without transforms, everything works fine.

    data = (src.transform(vision.get_transforms(), size=IMG_SHAPE, tfm_y=True)
            .databunch(bs=BATCH_SIZE)
            .normalize(vision.imagenet_stats))

    def imshow(idx):
        fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(8, 4))
        data.train_ds[idx][0].show(ax=ax1)
        ax1.set_title('image')
        ax1.axis('off')
        data.train_ds[idx][1].show(ax=ax2)
        ax2.set_title('mask')
        ax2.axis('off')
        plt.show()

    imshow(6)
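
One thing that may be worth ruling out in the plotting helper: it indexes the dataset twice, once for the image and once for the mask. If the dataset draws fresh random transform parameters on every access (an assumption about the library's behavior, not something verified here), the two accesses could receive different flips even when each individual (image, mask) pair is consistent. The sketch below reproduces that effect with a toy dataset; `ToyPairedDataset` and `aligned` are hypothetical names and nothing in it uses fastai.

```python
import numpy as np

class ToyPairedDataset:
    """Hypothetical stand-in: applies one random horizontal flip per access."""

    def __init__(self, images, masks, seed=0):
        self.images, self.masks = images, masks
        self.rng = np.random.default_rng(seed)

    def __getitem__(self, idx):
        # A single random draw per access, shared by the image and its mask.
        flip = self.rng.random() < 0.5
        img, mask = self.images[idx], self.masks[idx]
        if flip:
            img, mask = img[:, ::-1], mask[:, ::-1]
        return img, mask

image = np.arange(16).reshape(4, 4)
mask = (image % 4 == 0).astype(np.uint8)  # marks the first column
ds = ToyPairedDataset([image], [mask])

def aligned(img, msk):
    # The mask should mark exactly the pixels whose value is divisible by 4.
    return np.array_equal(msk, (img % 4 == 0).astype(np.uint8))

# One access per pair: image and mask share the same flip.
single_ok = all(aligned(*ds[0]) for _ in range(50))

# Two accesses per pair: image and mask come from independent draws.
double_ok = all(aligned(ds[0][0], ds[0][1]) for _ in range(50))

print(single_ok, double_ok)
```

If this is what happens here, unpacking a single access (`img, mask = data.train_ds[idx]`) before plotting would be a quick way to check.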

Even a basic flip with dihedral_affine results, in most cases, in an image and a mask that no longer match. Is there something I need to consider? The only difference from other examples is the way I load masks: instead of using the default open_mask in SegmentationLabelList, I use a custom function that decodes them from run-length encodings.

    def masks_as_image(masks, shape):
        # Decode ship masks into image segments and overlay them
        mask_img = np.zeros(shape, dtype=np.uint8)
        for mask in masks:
            if isinstance(mask, str):
                mask_img += vision.rle_decode(mask, shape).T.astype(np.uint8)
        mask_tensor = basic_data.FloatTensor(mask_img)
        mask_tensor = mask_tensor.view(shape[1], shape[0], -1)
        return vision.ImageSegment(mask_tensor.permute(2,0,1))

    def open_mask(fn):
        img_id = fn.stem + fn.suffix
        masks = masks_df[masks_df['ImageId'] == img_id]['EncodedPixels']
        mask_img = masks_as_image(masks, IMG_SHAPE)
        return mask_img

    classes = ['no_ship', 'ship']

    src = (SegmentationItemList.from_folder(path/'train_v2')
           .random_split_by_pct(0.2)
           .label_from_func(lambda x: x, classes=classes))

But that shouldn't affect the later transformations, since my mask tensors have a valid shape.
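
To check the decoding step in isolation, here is a minimal NumPy-only sketch of a run-length decoder. It assumes the encodings are 1-indexed and column-major (pixels counted down each column first), which is an assumption about the data rather than something established above. `rle_decode_column_major` is a hypothetical helper, not fastai's `vision.rle_decode`.

```python
import numpy as np

def rle_decode_column_major(rle, shape):
    """Decode a 1-indexed, column-major RLE string into a (height, width) mask."""
    height, width = shape
    tokens = np.asarray(rle.split(), dtype=int)
    starts, lengths = tokens[0::2] - 1, tokens[1::2]  # convert to 0-indexed starts
    flat = np.zeros(height * width, dtype=np.uint8)
    for start, length in zip(starts, lengths):
        flat[start:start + length] = 1
    # order="F" fills columns first, matching the assumed column-major encoding.
    return flat.reshape((height, width), order="F")

mask = rle_decode_column_major("1 3 10 2", (4, 4))
print(mask)
```

Comparing the output of a helper like this against the decoded mask for one known image, before any transforms are applied, would show whether the transpose in masks_as_image gives the intended orientation.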