I successfully built a tabular learner model using the v1 library, but now that I have my model, I can’t figure out how to make predictions on holdout data that isn’t in the train or validation sets. 0.7 had learn.predict(), but I can’t find that method in 1.0, and I’ve looked over all the documentation and source code with no luck. Can someone point me in the right direction for this in v1.0? Thanks!

@twood learn.get_preds(is_test=True) (to get predictions on the test set)

learn.get_preds() will return the predictions and actuals of the validation set by default.
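To make "predictions and actuals" concrete, here is a rough plain-PyTorch sketch of what a get_preds-style call does conceptually: put the model in eval mode, run every batch through it without gradients, and concatenate the outputs and the targets. collect_preds is a hypothetical helper for illustration, not fastai’s actual implementation.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

def collect_preds(model: nn.Module, dl: DataLoader):
    """Hypothetical helper: run `model` over every batch of `dl` and
    return (predictions, targets) concatenated across batches."""
    model.eval()                                  # disable dropout, use running BatchNorm stats
    device = next(model.parameters()).device      # inputs must be on the model's device
    preds, targets = [], []
    with torch.no_grad():                         # no gradients needed for inference
        for xb, yb in dl:
            preds.append(model(xb.to(device)).cpu())
            targets.append(yb)
    return torch.cat(preds), torch.cat(targets)

# Toy usage: a linear "model" on random data, batches of 3 (3 + 3 + 3 + 1 items).
torch.manual_seed(0)
model = nn.Linear(4, 2)
ds = TensorDataset(torch.randn(10, 4), torch.randint(0, 2, (10,)))
preds, targets = collect_preds(model, DataLoader(ds, batch_size=3))
print(preds.shape, targets.shape)  # torch.Size([10, 2]) torch.Size([10])
```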

Not sure about tabular specifically, but for vision you can also use ye olde learn.TTA(is_test=True), which wraps get_preds with augmentation.

BTW, v0 had an n_aug parameter for TTA, which I found useful. It varied case by case: on different datasets I found that none, 4, 8, 16, or even up to 120 augmentations worked best. v1 doesn’t appear to have the parameter.
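For anyone who wants something like the old n_aug behaviour, here is a minimal sketch, assuming a model that returns raw logits for image batches shaped (batch, channels, height, width): average the softmax outputs of the un-augmented batch and n_aug randomly augmented copies. tta_random and random_aug are hypothetical names, and the augmentation here (random horizontal flip plus a small horizontal shift) is only a stand-in for a real transform pipeline.

```python
import torch
from torch import nn

def random_aug(x: torch.Tensor) -> torch.Tensor:
    """Stand-in augmentation: random horizontal flip plus a small horizontal shift."""
    if torch.rand(1).item() < 0.5:
        x = x.flip(-1)                           # flip along the width dimension
    shift = int(torch.randint(-2, 3, (1,)))      # shift by -2..2 pixels
    return torch.roll(x, shifts=shift, dims=-1)

def tta_random(model: nn.Module, x: torch.Tensor, n_aug: int = 8) -> torch.Tensor:
    """Average softmax outputs over the original batch and n_aug augmented copies."""
    model.eval()
    with torch.no_grad():
        probs = [torch.softmax(model(x), dim=1)]             # un-augmented pass
        for _ in range(n_aug):
            probs.append(torch.softmax(model(random_aug(x)), dim=1))
    return torch.stack(probs).mean(0)

torch.manual_seed(0)
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 2))
x = torch.randn(5, 3, 8, 8)
out = tta_random(model, x, n_aug=16)
print(out.shape)   # torch.Size([5, 2])
```

With n_aug=0 the sketch reduces to a plain prediction, so the parameter can be tuned per dataset.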

Oh, and at least in the vision application, the new ClassificationInterpretation.from_learner(learn) is great: being able to easily call plot_top_losses() and plot_confusion_matrix() on the validation set makes understanding the predictions slick.
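Conceptually, a confusion matrix and a top-losses list only need the predictions and actuals. Here is a plain-PyTorch sketch, assuming raw logits and a cross-entropy loss; the function names are hypothetical and this is not fastai’s ClassificationInterpretation code.

```python
import torch
import torch.nn.functional as F

def confusion_matrix(preds: torch.Tensor, targets: torch.Tensor, n_classes: int) -> torch.Tensor:
    """cm[i, j] counts items whose actual class is i and predicted class is j."""
    cm = torch.zeros(n_classes, n_classes, dtype=torch.long)
    for actual, predicted in zip(targets.tolist(), preds.argmax(dim=1).tolist()):
        cm[actual, predicted] += 1
    return cm

def top_losses(preds: torch.Tensor, targets: torch.Tensor, k: int):
    """Return (losses, indices) of the k items with the highest per-item cross-entropy."""
    losses = F.cross_entropy(preds, targets, reduction="none")
    return losses.topk(k)

# Toy validation-set outputs: raw logits for 4 items, 2 classes.
preds = torch.tensor([[2.0, -1.0], [0.5, 0.2], [-1.0, 3.0], [1.5, -0.5]])
targets = torch.tensor([0, 1, 1, 1])
cm = confusion_matrix(preds, targets, n_classes=2)
losses, idx = top_losses(preds, targets, k=2)
print(cm.tolist())    # [[1, 0], [2, 1]]
print(idx.tolist())   # [3, 1]
```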

For TTA we’re using 8 more or less fixed transforms: crops at the 4 corners, each with and without a horizontal flip (plus the other augmentation transforms applied on top of each of those 8). We found this gave the best performance.
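Here is a minimal sketch of that 8-view scheme, assuming image batches shaped (batch, channels, height, width) that are somewhat larger than the crop size, and a model that accepts crop-sized inputs. eight_views and tta_eight are hypothetical names; unlike the setup described above, this sketch applies no other augmentation on top of the 8 views.

```python
import torch
from torch import nn

def eight_views(x: torch.Tensor, crop: int) -> list:
    """4 corner crops of size crop x crop, each with and without a horizontal flip."""
    h, w = x.shape[-2:]
    assert crop <= h and crop <= w, "crop must fit inside the image"
    corners = [(0, 0), (0, w - crop), (h - crop, 0), (h - crop, w - crop)]
    views = []
    for top, left in corners:
        c = x[..., top:top + crop, left:left + crop]
        views += [c, c.flip(-1)]                 # unflipped and flipped left-right
    return views

def tta_eight(model: nn.Module, x: torch.Tensor, crop: int) -> torch.Tensor:
    """Average the softmax outputs over the 8 views."""
    model.eval()
    with torch.no_grad():
        probs = [torch.softmax(model(v), dim=1) for v in eight_views(x, crop)]
    return torch.stack(probs).mean(0)

torch.manual_seed(0)
# Toy model that accepts any spatial size thanks to the adaptive pooling layer.
model = nn.Sequential(nn.Conv2d(3, 4, 3, padding=1), nn.AdaptiveAvgPool2d(1),
                      nn.Flatten(), nn.Linear(4, 2))
x = torch.randn(2, 3, 10, 10)
out = tta_eight(model, x, crop=8)
print(out.shape)   # torch.Size([2, 2])
```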

I guess you could adapt that code to write your own TTA.

Thanks for replying everyone.

@sgugger looks like you made a related change to expose get_preds?

Now I’m having trouble passing a test dataframe to tabular_data_from_df. The test dataframe has exactly the same format as the train and validation dataframes, and tabular_data_from_df works fine when I don’t pass a test_df. I don’t think the issue is with my test_df, because I get the same error if I pass train_df as the test_df argument. Any help is greatly appreciated.

This issue has been fixed. Update your fastai.

@sgugger

So… after training a simple binary classifier built from resnet50 and image_data_from_folder (everything else left at the defaults), get_preds(is_test=True) gives me, as expected, 2 predictions and a dummy label for each image. Accuracy comes out at 0.95.

But the 2 predictions look like [-2.0396, 2.0478]. I would expect them, or their exponentials, to sum to 1, which is clearly not the case here. So what am I missing?

PyTorch’s resnet50 outputs raw activations (logits): the final activation function is folded into the loss function instead. So you’ll need to apply a softmax to turn the predictions into probabilities that add up to 1.
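For example, applying a softmax over the class dimension to the numbers from the question (a plain-PyTorch sketch, assuming the predictions come as one row per image, so dim=1 is the class dimension):

```python
import torch

# Raw outputs (logits) for one image, taken from the question above.
logits = torch.tensor([[-2.0396, 2.0478]])
probs = torch.softmax(logits, dim=1)   # normalise across the class dimension
print(probs.sum(dim=1))                # each row now sums to 1
print(probs.argmax(dim=1))             # tensor([1]); class 1 gets about 0.98
```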

Can you please advise how to do inference with a tabular learner once the model/learner is trained?

(Not on a test set defined prior to training, but on new data.)

@thpeter71 that was the crux of my original question as well

For now there is no script for this, but you can apply your model to a new object by calling learn.model(x) (just make sure x is on the same device as the model).

And make sure you’ve done learn.model.eval()
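Putting those two tips together, here is a minimal plain-PyTorch sketch for predicting on a single new example. predict_one is a hypothetical helper and the toy model stands in for learn.model; a tabular model may expect its inputs split differently (for example into categorical and continuous tensors), so the call would need adapting.

```python
import torch
from torch import nn

def predict_one(model: nn.Module, x: torch.Tensor) -> torch.Tensor:
    """Return class probabilities for a single new example x (hypothetical helper)."""
    model.eval()                                   # disable dropout, use running BatchNorm stats
    device = next(model.parameters()).device       # x must live on the model's device
    with torch.no_grad():                          # no gradients needed for inference
        logits = model(x.unsqueeze(0).to(device))  # add a batch dimension of size 1
    return torch.softmax(logits, dim=1)[0].cpu()

torch.manual_seed(0)
# Toy stand-in for learn.model: 5 input features, 3 classes, with dropout.
model = nn.Sequential(nn.Linear(5, 16), nn.ReLU(), nn.Dropout(0.5), nn.Linear(16, 3))
x = torch.randn(5)
probs = predict_one(model, x)
print(probs.shape)   # torch.Size([3])
```

Because eval() switches dropout off, calling predict_one twice on the same input gives identical results.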

I tried learn.TTA(is_test=True) for vision, but so far this is not working for me (using image_data_from_csv data). As per this issue, it’s not yet implemented; does that sound right? Or is this just a simple case of me needing to implement get_preds? The error message I get is below.

    ---------------------------------------------------------------------------
    NameError Traceback (most recent call last)
    <ipython-input-22-b0d2dd2a8753> in <module>()
    ----> 1 learn.TTA(is_test=True)

    /app/fastai/fastai/tta.py in _TTA(learn, beta, scale, is_test)
    36 def _TTA(learn:Learner, beta:float=0.4, scale:float=1.35, is_test:bool=False) -> Tensors:
    37 preds,y = learn.get_preds(is_test)
    ---> 38 all_preds = list(learn.tta_only(scale=scale, is_test=is_test))
    39 avg_preds = torch.stack(all_preds).mean(0)
    40 if beta is None: return preds,avg_preds,y

    /app/fastai/fastai/tta.py in _tta_only(learn, is_test, scale)
    29 if flip: tfm.append(flip_lr(p=1.))
    30 ds.tfms = tfm
    ---> 31 yield get_preds(learn.model, dl, pbar=pbar)[0]
    32 finally: ds.tfms = old
    33

    NameError: name 'get_preds' is not defined

No I think the problem was that someone whose name starts with ‘s’ and ends with ‘ugger’ forgot to export that function. I’ve done that now. We don’t actually have any tests of tta yet btw, so feel free to send a PR with one if you want to make sure that person doesn’t break this again…

Who could that person be?

Fantastic, just ran it again and it’s working! Thank you! Sorry you had to delay your afternoon run for this.

@sgugger, @jeremy, much appreciate your fast response!

Is this by design, or just the current state of development? (It seems cumbersome to do inference this way, since using trained models ‘in the wild’ should be their main purpose.)

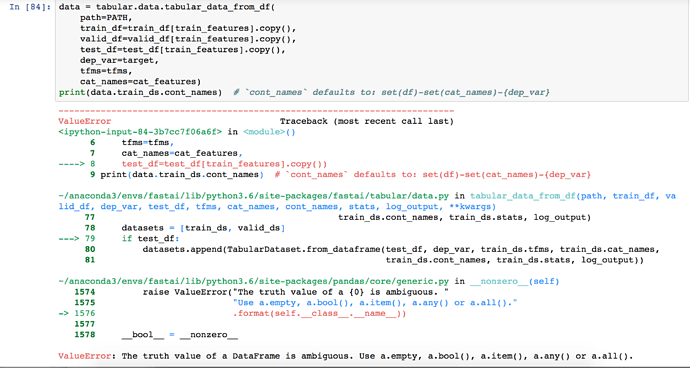

I tried to follow sgugger’s advice and pass test_df to tabular_data_from_df as a workaround. I’m getting “ValueError: The truth value of a DataFrame is ambiguous.” at line 79 of data.py, even though test_df has exactly the same format as train_df and valid_df.

As a general rule, we can’t help you if you don’t post the whole code and error message. I don’t see why that error would come up if you passed a tensor to the model.

For your other question, the library is still under development, and the main focus until now has been on training models. We’ll add inference methods in the coming weeks/months.

Thanks again for the fast reply. Indeed, my mistake was that I had updated using pip rather than git pull (as per stat’s reply).

I’ll be patient, and I’m looking forward to the inference/prediction functions.

Thanks for the great work on this library!

@thpeter71 This error is due to the test condition on line 79. Instead of

if test_df is None:

it has

if test_df:

However, as pointed out by @sgugger, this has been fixed in the latest version. You need to use the GitHub version of fastai instead of the one available through pip.
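A quick pandas sketch of why that condition fails: if test_df: calls bool() on the DataFrame, which pandas refuses to do, whereas an explicit None check works. describe_test_set is a hypothetical function, not the actual fastai code.

```python
import pandas as pd

def describe_test_set(test_df=None):
    # `if test_df:` would call bool() on the DataFrame and raise
    # "ValueError: The truth value of a DataFrame is ambiguous."
    # An explicit None check avoids that.
    if test_df is not None:
        return f"test set with {len(test_df)} rows"
    return "no test set"

df = pd.DataFrame({"a": [1, 2, 3]})
try:
    bool(df)                      # what `if test_df:` does implicitly
except ValueError as err:
    print("ValueError:", err)
print(describe_test_set(df))      # test set with 3 rows
print(describe_test_set())        # no test set
```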