Hi,

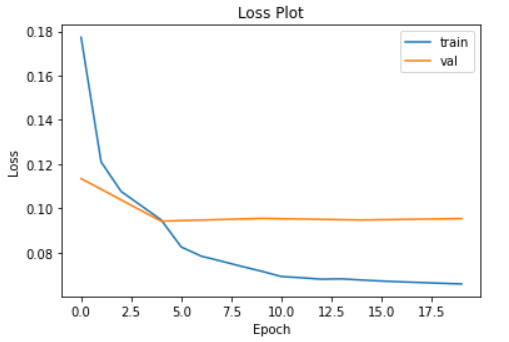

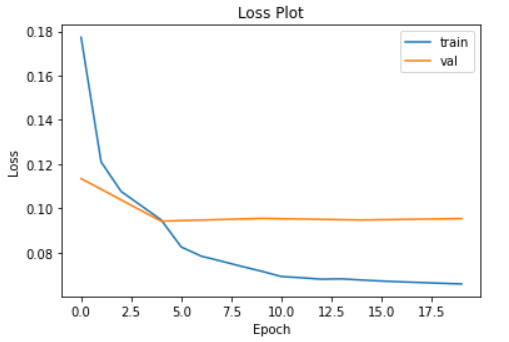

I am using pre-train resnet34, but the loss is not going down after 4 or 5 epochs, and I am training all the layers not just fine-tuning the network. Below is the loss graph:

I am using a learning rate of 0.0001 with the SGD optimizer. I have also tried the Adam optimizer, but that does not help either.

Any suggestions?

It looks like your training loss is going down, so the model is training but not generalizing to the validation set. Consider adding some techniques to reduce overfitting, such as regularization or dropout. Also check whether your training and validation sets come from the same distribution. You might also need more training data.
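One cheap way to act on a validation loss that stops improving is patience-based early stopping. The sketch below is plain Python, not tied to any library; the function name `early_stop` and the loss values in the usage note are hypothetical and only illustrate the idea.

```python
def early_stop(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a list of per-epoch validation losses.

    Training is considered stalled once `patience` epochs have passed
    without the validation loss improving by more than `min_delta`.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # New best validation loss: remember where it happened.
            best_loss = loss
            best_epoch = epoch
        elif epoch - best_epoch >= patience:
            # No improvement for `patience` epochs: stop here.
            return epoch, best_epoch
    # Never ran out of patience: trained to the last epoch.
    return len(val_losses) - 1, best_epoch
```

For example, with made-up losses `[1.0, 0.8, 0.7, 0.72, 0.75, 0.9, 1.1]` and `patience=3`, the best epoch is index 2 and training would stop at index 5, so the weights saved at epoch 2 are the ones to keep.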

Hi Darek,

I am using resnet34 (pretrained), so I think I cannot make changes to the architecture except for the last few layers. So how do I include regularization techniques?

The data is from the same distribution, since I randomly split it into training and validation sets.
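A random split usually keeps both sets on the same distribution, but on a small or imbalanced dataset it can be worth confirming. The sketch below is plain Python under stated assumptions: the helper names (`random_split`, `class_proportions`, `max_proportion_gap`) are hypothetical, and it only compares label frequencies, which is one of several possible checks.

```python
import random
from collections import Counter


def random_split(items, valid_frac=0.2, seed=42):
    """Shuffle a copy of `items` and return (train, valid)."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_valid = int(len(shuffled) * valid_frac)
    return shuffled[n_valid:], shuffled[:n_valid]


def class_proportions(labels):
    """Map each class to its share of `labels` (labels must be non-empty)."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()}


def max_proportion_gap(train_labels, valid_labels):
    """Largest absolute difference in class share between the two sets."""
    p_train = class_proportions(train_labels)
    p_valid = class_proportions(valid_labels)
    classes = set(p_train) | set(p_valid)
    return max(abs(p_train.get(c, 0.0) - p_valid.get(c, 0.0)) for c in classes)
```

A large gap for some class suggests the validation set is not representative, in which case a stratified split may be a better choice than a purely random one.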

Hi @karanchhabra99,

To avoid overfitting, there are a few things you can do:

`create_cnn_model(arch, n_out, cut=None, pretrained=True, n_in=3, init=kaiming_normal_, custom_head=None, concat_pool=True, lin_ftrs=None, ps=0.5, bn_final=False, lin_first=False, y_range=None)`

Create custom convnet architecture using `arch`, `n_in` and `n_out`

The model is cut according to `cut` and it may be `pretrained`, in which case the proper set of weights is downloaded then loaded. `init` is applied to the head of the model, which is either created by `create_head` (with `lin_ftrs`, `ps`, `concat_pool`, `bn_final`, `lin_first` and `y_range`) or is `custom_head`.

- Finally, if none of this regularises the model enough, you still have the option of using the smaller resnet18.

Hope it helps!
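For intuition about the `ps` argument in the signature quoted above, which sets the dropout probability used in the head, here is a minimal plain-Python sketch of inverted dropout. It is not fastai's implementation; the function name `dropout` and its `rng` parameter are assumptions made for illustration.

```python
import random


def dropout(activations, p=0.5, training=True, rng=None):
    """Inverted dropout on a list of floats.

    During training each value is zeroed with probability `p`, and the
    survivors are scaled by 1 / (1 - p) so the expected value is unchanged.
    At evaluation time the input is returned as-is.
    """
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    if not training or p == 0.0:
        return list(activations)
    rng = rng or random.Random()
    scale = 1.0 / (1.0 - p)
    # Keep a unit when the random draw is at least p, otherwise drop it.
    return [a * scale if rng.random() >= p else 0.0 for a in activations]
```

Raising `p` drops more units per step and regularises more strongly, at the cost of slower fitting on the training set.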

Charles


Good ideas from Charles! Beyond that, it’s really hard to give specific advice without understanding your problem and data. Maybe you can do some error analysis to see what the model is learning and what it isn’t. I’d bet there are some clues in the data!
