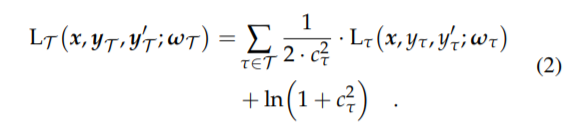

It’s been a long time since I worked on this, but I implemented the learnable loss-weights method from the paper "Auxiliary Tasks in Multi-task Learning". See Section 2 of the paper for the formula.
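For reference, this is the formula as the code in this post applies it. I'm writing $c_\tau$ for the learnable weight of task $\tau$ (`loss_weights` in the code) and $L_\tau$ for that task's loss. The symbol names are my shorthand, so check the paper for its exact notation:

$$
L = \sum_{\tau} \left( \frac{1}{2 c_\tau^{2}} \, L_\tau + \ln\left(1 + c_\tau^{2}\right) \right)
$$

The first term scales each task loss down as its weight grows, and the log term penalizes large weights, so the optimizer cannot simply push every $c_\tau$ up to make the total loss vanish.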

Here is how I implemented it in a toy project. `loss_weights` comes from the model in fastai (the model returns it, so it reaches the loss). I append the loss for each task I cared about to `losses`, then apply the formula. From what I remember, it learned sensible weights between the various task losses, but it's been a long time since I looked at that code.

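```python
# loss_weights: learnable per-task weights returned by the model
losses = []
losses += [self.l2(result_output, target)]
losses += [self.l2(question_output, question_target[:, 2])]
losses = torch.stack(losses)
# Scale each task loss by 1/(2c^2) and add the regularizer log(1 + c^2)
losses = ((1/(2*(loss_weights**2))) * losses) + torch.log(1 + loss_weights**2)
return losses.sum()
```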
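Since the snippet above is only the body of my loss function, here is a minimal self-contained sketch of the same idea in plain PyTorch. It is not my original fastai code: the class name `LearnableWeightedLoss`, the choice to store the weights inside the loss module (in my project they came from the model), and the initial value of 1 are all assumptions made for illustration.

```python
import torch
import torch.nn as nn


class LearnableWeightedLoss(nn.Module):
    """Combine per-task losses with one learnable weight per task (hypothetical helper)."""

    def __init__(self, num_tasks: int):
        super().__init__()
        # Assumption: weights live in the loss module and start at 1.
        self.loss_weights = nn.Parameter(torch.ones(num_tasks))
        self.l2 = nn.MSELoss()

    def forward(self, outputs, targets):
        # outputs / targets: sequences of tensors, one (output, target) pair per task.
        losses = torch.stack([self.l2(o, t) for o, t in zip(outputs, targets)])
        c2 = self.loss_weights ** 2
        # 1/(2 c^2) * L + log(1 + c^2), summed over tasks.
        return (losses / (2 * c2) + torch.log1p(c2)).sum()


torch.manual_seed(0)
criterion = LearnableWeightedLoss(num_tasks=2)
outputs = [torch.randn(8, 1), torch.randn(8)]
targets = [torch.randn(8, 1), torch.randn(8)]
loss = criterion(outputs, targets)
loss.backward()  # gradients also flow into criterion.loss_weights
```

If you keep the weights in the loss module like this, remember to hand `criterion.parameters()` to the optimizer along with the model's parameters, otherwise the weights never get updated.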