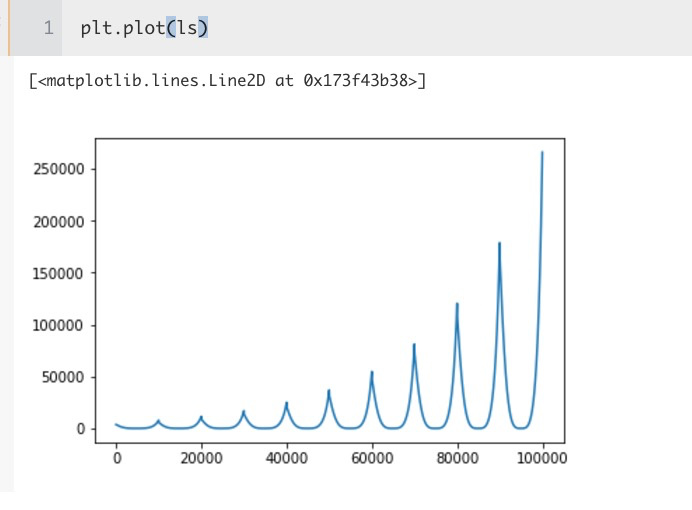

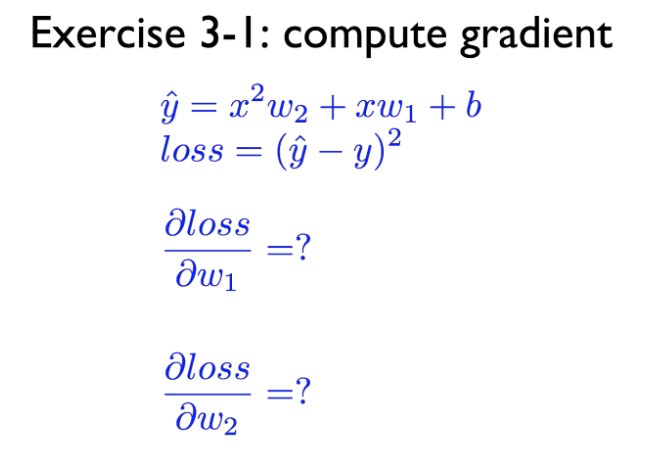

Hi, I’m playing with a single neuron to implement Exercise 3 in this slide, but I’m having some trouble with my loss fluctuating and eventually exploding… I’d very much appreciate your insights on what is causing this problem.

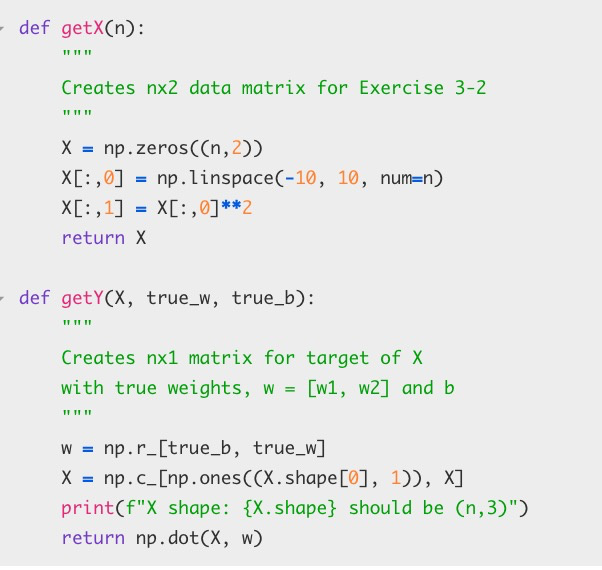

Here is my experiment. First, I create the training data:

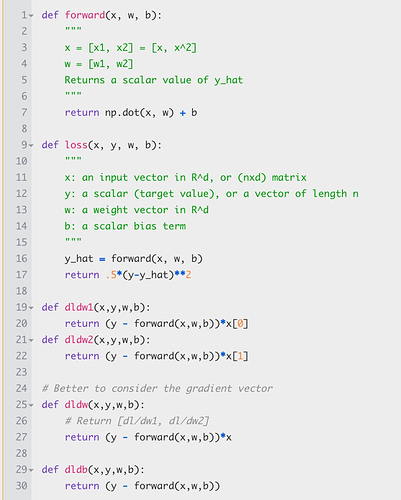

Here is my simple forward and gradient descent code:

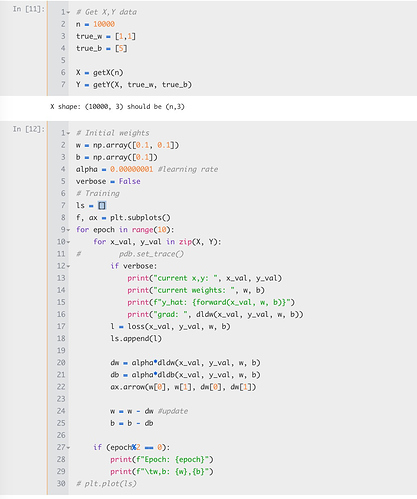
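In case the screenshot does not render, here is a minimal text sketch of the same kind of setup. It is not necessarily identical to the code in the image: it assumes a linear neuron, a mean squared error loss, full-batch gradient descent, and NumPy. The names `forward`, `mse_loss`, `gradient_step`, and all data values and hyperparameters are illustrative assumptions.

```python
import numpy as np

def forward(X, w, b):
    """Linear neuron: one prediction per row of X."""
    return X @ w + b

def mse_loss(y_pred, y):
    """Mean squared error between predictions and targets."""
    return float(np.mean((y_pred - y) ** 2))

def gradient_step(X, y, w, b, lr):
    """One full-batch gradient descent step on the MSE loss."""
    n = X.shape[0]
    err = forward(X, w, b) - y           # residuals, shape (n,)
    grad_w = (2.0 / n) * (X.T @ err)     # dL/dw
    grad_b = (2.0 / n) * np.sum(err)     # dL/db
    return w - lr * grad_w, b - lr * grad_b

# Toy, noise-free data (hypothetical values, inputs kept in [-1, 1])
rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 2))
true_w, true_b = np.array([2.0, -3.0]), 0.5
y = X @ true_w + true_b

w, b = np.zeros(2), 0.0
losses = []
for epoch in range(2000):
    losses.append(mse_loss(forward(X, w, b), y))
    w, b = gradient_step(X, y, w, b, lr=0.1)
```

In this sketch the inputs are small and similarly scaled, which is one of the assumptions that may not hold for my actual data.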

Then, I create my dataset and train the model:

The loss over epochs, however, fluctuates heavily, and the weights do not converge to the true values. I tried 1) increasing the amount of training data, 2) lowering the learning rate (0.0001 -> 0.00005 -> 0.000001 -> even lower), and 3) increasing the number of epochs. None of these resolved the fluctuation.

Can somebody please help me understand what may be causing this issue, and how I could alleviate it?