trusttheai

November 15, 2017, 3:49am

I trained a model and saved it using learn.save("name"). When trying to validate on the data, I ran into this issue: https://github.com/fastai/fastai/issues/23

To see whether it would go away, I cleared the tmp folder (I renamed all the files in it) and then loaded the model. It fails with the following error:

While copying the parameter named 0.weight, whose dimensions in the model are torch.Size([64, 3, 7, 7]) and whose dimensions in the checkpoint are torch.Size([1024]), ...

---------------------------------------------------------------------------
RuntimeError Traceback (most recent call last)
<ipython-input-25-f115c61cd3aa> in <module>()
1 learn = ConvLearner.pretrained(resnet34, data, precompute=False)
----> 2 learn.load("model_47.75acc.resnet34")

~/fast.ai/fastai/courses/dl1/fastai/learner.py in load(self, name)
61 def get_model_path(self, name): return os.path.join(self.models_path,name)+'.h5'
62 def save(self, name): save_model(self.model, self.get_model_path(name))
---> 63 def load(self, name): load_model(self.model, self.get_model_path(name))
64
65 def set_data(self, data): self.data_ = data

~/fast.ai/fastai/courses/dl1/fastai/torch_imports.py in load_model(m, p)
20 def children(m): return m if isinstance(m, (list, tuple)) else list(m.children())
21 def save_model(m, p): torch.save(m.state_dict(), p)
---> 22 def load_model(m, p): m.load_state_dict(torch.load(p))
23
24 def load_pre(pre, f, fn):

~/anaconda2/envs/fastai/lib/python3.6/site-packages/torch/nn/modules/module.py in load_state_dict(self, state_dict)
358 param = param.data
359 try:
--> 360 own_state[name].copy_(param)
361 except:
362 print('While copying the parameter named {}, whose dimensions in the model are'

RuntimeError: invalid argument 2: sizes do not match at /opt/conda/conda-bld/pytorch_1503970438496/work/torch/lib/THC/THCTensorCopy.cu:31

I think I am missing something major. Any help would be greatly appreciated. Please let me know if more information is required.

Thanks

ramesh

November 15, 2017, 3:58am

Just trying to understand -

Can you confirm that save followed by load immediately is fine?

Do you get this error when you train and load the model on the same GPU machine, or when you load it on a CPU?
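For the first question, a quick round-trip check is one way to rule out the save/load mechanics themselves. The traceback above shows that fastai's `save_model`/`load_model` are just `torch.save(m.state_dict(), p)` and `m.load_state_dict(torch.load(p))`, so this sketch reproduces that pattern in plain PyTorch with a small hypothetical model (`make_model`, `roundtrip_matches` and the file name are illustrative, not fastai API). The `map_location="cpu"` argument is how `torch.load` handles a checkpoint written on a GPU machine when you load it on a CPU-only one.

```python
import os
import tempfile

import torch
import torch.nn as nn


def make_model() -> nn.Module:
    # Hypothetical small model standing in for the learner's network.
    return nn.Sequential(nn.Linear(8, 16), nn.ReLU(), nn.BatchNorm1d(16), nn.Linear(16, 3))


def roundtrip_matches(path: str) -> bool:
    torch.manual_seed(0)
    original = make_model()
    # Same pattern as save_model in the traceback: persist only the state_dict.
    torch.save(original.state_dict(), path)

    # The model must be rebuilt with the identical architecture before loading.
    restored = make_model()
    # map_location="cpu" remaps tensors saved on a GPU so they load on a CPU-only machine.
    restored.load_state_dict(torch.load(path, map_location="cpu"))

    # Compare in eval mode so BatchNorm uses its stored running statistics.
    original.eval()
    restored.eval()
    x = torch.randn(4, 8)
    with torch.no_grad():
        return torch.allclose(original(x), restored(x))


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(roundtrip_matches(os.path.join(tmp, "roundtrip.h5")))
```

Applied to your own model, a mismatch here would point at how the model is rebuilt before loading rather than at the saved file.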

jeremy

November 15, 2017, 4:27am

You may have saved with precompute=True and loaded with precompute=False, or vice versa.
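The sizes in the error fit that explanation. As I read fastai 0.7's `ConvnetBuilder` (an assumption worth checking against your copy of the library), with precompute=True `learn.model` is only the custom head, whose first layer is a BatchNorm1d over the 1024 concatenated pooled resnet34 features; with precompute=False it is the full network, whose first layer is the 7x7 stem convolution. Both are stored as parameter `0.weight`, hence `[1024]` vs `[64, 3, 7, 7]`. A plain-PyTorch sketch with stand-in modules (not fastai's actual classes); recent PyTorch versions word the error differently from the 2017 one above, but the cause is the same:

```python
import torch
import torch.nn as nn

# Stand-in for the head-only model used when precompute=True:
# index 0 is BatchNorm1d(1024), so "0.weight" has shape [1024].
head = nn.Sequential(nn.BatchNorm1d(1024), nn.Dropout(0.25), nn.Linear(1024, 512))

# Stand-in for the start of the full model used when precompute=False:
# index 0 is the resnet34 stem conv, so "0.weight" has shape [64, 3, 7, 7].
full = nn.Sequential(
    nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3, bias=False),
    nn.BatchNorm2d(64),
    nn.ReLU(inplace=True),
)


def try_load(target: nn.Module, state: dict) -> str:
    """Return "ok" if the state_dict loads, otherwise the error text."""
    try:
        target.load_state_dict(state)
        return "ok"
    except RuntimeError as err:
        return str(err)


saved = head.state_dict()  # roughly what learn.save() writes with precompute=True
print(head.state_dict()["0.weight"].shape)  # torch.Size([1024])
print(full.state_dict()["0.weight"].shape)  # torch.Size([64, 3, 7, 7])
print(try_load(full, saved))  # error text includes "size mismatch for 0.weight"
print(try_load(head, saved))  # ok
```

So the fix is to construct the learner with the same precompute setting that was in effect when the weights were saved.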

trusttheai

November 15, 2017, 4:56am

You may have saved with precompute True and loaded with False, or vice versa.

Changing it to precompute=True seems to be working. I will update once it completes.

jeremy

November 15, 2017, 5:25am

FYI: Saving a model with precompute=True is unlikely to ever be what you want, since that stage completes so quickly.
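To see why that stage is so quick (and why a checkpoint from it is of little use), here is a conceptual sketch of what precomputing amounts to, written in plain PyTorch rather than fastai: the frozen backbone runs once, its activations are cached, and each training step then touches only the small head. The modules, shapes and hyperparameters are hypothetical stand-ins.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical frozen "backbone" and trainable "head" (stand-ins, not fastai classes).
backbone = nn.Sequential(nn.Linear(32, 1024), nn.ReLU())
head = nn.Sequential(nn.BatchNorm1d(1024), nn.Linear(1024, 2))

images = torch.randn(64, 32)  # stand-in for the training images
labels = torch.randint(0, 2, (64,))

# "Precompute": run the frozen backbone once and cache its activations.
backbone.eval()
with torch.no_grad():
    cached = backbone(images)

# Every epoch now runs only the small head on the cached activations.
opt = torch.optim.SGD(head.parameters(), lr=0.1)
loss_fn = nn.CrossEntropyLoss()
for epoch in range(3):
    opt.zero_grad()
    loss = loss_fn(head(cached), labels)
    loss.backward()
    opt.step()

# A checkpoint taken at this stage would contain only the head's parameters.
print(sorted(head.state_dict().keys()))
```

Because only the head is trained in this stage, redoing it is cheap, which is the point being made about not bothering to save it.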

trusttheai

November 16, 2017, 2:20am

FYI: Saving a model with precompute=True is unlikely to ever be what you want, since that stage completes so quickly.

@jeremy I did not understand this.

The first thing we do is,

If we were to save the model at that point, it would be with precompute=True, correct?

If my understanding is correct, we should save the model after we fine-tune it with precompute=False.

Thanks

jeremy

November 16, 2017, 3:31am

Yes, you could save it then, with precompute=True. But it takes <10 secs to train in that case, so there’s no point saving it!

neovaldivia

November 24, 2017, 2:20am

Related question: loading doesn't work after I stop and start an EC2 instance. Is that by design? If so, how can I save my work for the following day?

thank you!

steve0hh

February 16, 2018, 4:29pm

Just to check,

I restarted my kernel and loaded the model directly; however, I noticed that the prediction outputs were different from the ones I got before restarting the kernel.

Is this normal?
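One possible cause, not confirmed in this thread: layers such as dropout (and BatchNorm) behave differently in training and evaluation mode, so predictions taken while a model is in training mode change from call to call. Random data augmentation at prediction time would have a similar effect. A minimal PyTorch sketch of the dropout case, using a hypothetical toy model:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
model = nn.Sequential(nn.Linear(10, 10), nn.Dropout(p=0.5), nn.Linear(10, 2))
x = torch.randn(4, 10)

model.train()  # dropout active: each forward pass zeroes a different random subset
with torch.no_grad():
    train_a, train_b = model(x), model(x)

model.eval()  # dropout disabled: repeated passes give identical outputs
with torch.no_grad():
    eval_a, eval_b = model(x), model(x)

print(torch.equal(train_a, train_b))
print(torch.equal(eval_a, eval_b))  # True
```

If outputs still differ with the model in eval mode and no augmentation, the loaded weights themselves are worth comparing.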

ruhshan

March 21, 2018, 6:22am

How do I load a previously trained model using the fastai implementation on top of PyTorch? In scikit-learn I can use pickle to dump a model to a file, then load it and use it later. I've used the .load() method after creating the learn instance, as below, to load the previously saved weights:

arch=resnet34
data = ImageClassifierData.from_paths(PATH, tfms=tfms_from_model(arch, sz))
learn = ConvLearner.pretrained(arch, data, precompute=False)
learn.load('resnet34_test')

Then to predict the class of an image:

trn_tfms, val_tfms = tfms_from_model(arch,100)
img = open_image('circle/14.png')
im = val_tfms(img)
preds = learn.predict_array(im[None])
print(np.argmax(preds))

But It gets me the error:

ValueError: Expected more than 1 value per channel when training, got input size [1, 1024]

This code works if I use learn.fit(0.01, 3) instead of learn.load(). What I really want is to avoid the training step in my application.
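The message comes from PyTorch's batch normalization: in training mode it needs more than one value per channel to compute batch statistics, and a single image gives a batch of size 1. The `[1, 1024]` shape is consistent with the head's first BatchNorm1d layer. A plausible explanation for why `learn.fit` makes it work is that fitting ends with a validation pass that leaves the model in eval mode, while a freshly loaded learner may still be in training mode; if so, calling `learn.model.eval()` before `learn.predict_array(...)` should help. That is an assumption to verify against your fastai version. The underlying PyTorch behaviour (`run` is a hypothetical helper):

```python
import torch
import torch.nn as nn

# A head starting with BatchNorm1d over 1024 features, as the error's shape suggests.
bn = nn.BatchNorm1d(1024)
single = torch.randn(1, 1024)  # one image -> batch of size 1


def run(module: nn.Module, x: torch.Tensor) -> str:
    """Return "ok" if the forward pass succeeds, otherwise the error text."""
    try:
        with torch.no_grad():
            module(x)
        return "ok"
    except ValueError as err:
        return str(err)


bn.train()
print(run(bn, single))  # Expected more than 1 value per channel when training, ...
bn.eval()  # eval mode uses running statistics, so a batch of one is fine
print(run(bn, single))  # ok
```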

superives

March 21, 2018, 9:47pm

Hi Steve,

This happened to me too. The accuracy was lower after I reloaded the model.

Have you figured out the reason behind it?

Kaneki

March 23, 2018, 9:33pm

Were you able to figure it out?

Kaneki

March 23, 2018, 9:46pm

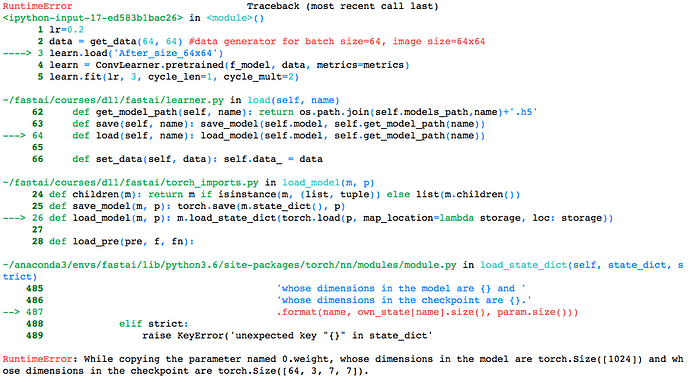

I am currently following this notebook for the Planet Amazon Kaggle challenge.

How do I load my weights properly?

learn = ConvLearner.pretrained(f_model, data, metrics=metrics)
lrf = learn.lr_find()
learn.sched.plot()
lr=0.2
data = get_data(64, 64) #data generator for batch size=64, image size=64x64
learn = ConvLearner.pretrained(f_model, data, metrics=metrics)
learn.fit(lr, 3, cycle_len=1, cycle_mult=2)
learn.sched.plot_lr()
learn.load('After_size_64x64')

vikbehal

March 25, 2018, 8:37am

Actually, you may just need this. Can you please try:

data = get_data(64, 64) #data generator for batch size=64, image size=64x64

You don't need to fit or run the learning-rate finder, since the weights have already been learned. If you need to refine the model further, then define lr as:

Please try it and let me know.

Kaneki

March 25, 2018, 12:36pm

Thank you for your reply. I tried your advice and it definitely works for 64x64 images, but when I save my model after completing all the training up to size 256x256, I get the same error as above.

vikbehal

March 25, 2018, 12:39pm

Are you changing your data size like this?

data = get_data(256, 256) #data generator for batch size=64, image size=64x64
learn = ConvLearner.pretrained(f_model, data, metrics=metrics)
learn.load('After_size_250X256')
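On the size question itself: with adaptive pooling before the classifier (as in resnet-style backbones), parameter shapes do not depend on the input image size, so weights saved after training at one size should load into a learner built for another. A load failure therefore points at some other architectural mismatch, such as the precompute setting. A hypothetical plain-PyTorch illustration (`make_net` and its 17 outputs are made up for the example):

```python
import torch
import torch.nn as nn


def make_net() -> nn.Module:
    # Hypothetical conv net with adaptive pooling, like resnet-style backbones.
    return nn.Sequential(
        nn.Conv2d(3, 8, kernel_size=3, padding=1),
        nn.ReLU(),
        nn.AdaptiveAvgPool2d(1),  # always pools to 1x1, whatever the input size
        nn.Flatten(),
        nn.Linear(8, 17),
    )


trained = make_net()
state = trained.state_dict()  # no parameter shape mentions the image size

fresh = make_net()
fresh.load_state_dict(state)  # loads regardless of the image size used in training
fresh.eval()
with torch.no_grad():
    small = fresh(torch.randn(2, 3, 64, 64))
    large = fresh(torch.randn(2, 3, 256, 256))
print(small.shape, large.shape)  # torch.Size([2, 17]) torch.Size([2, 17])
```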

Kaneki

March 25, 2018, 12:42pm

I used 256x64 as my get_data parameters. Also, when I set my data to this and tried to recreate the learn object, it started training for a few epochs and then the kernel died.

Kaneki

March 25, 2018, 12:55pm

I finally solved the problem. I had precompute=True set when it shouldn't have been. Thank you for the help!

vikbehal

March 25, 2018, 12:56pm

Awesome!

You figured it out correctly: with precompute=True the learner works from cached (precomputed) activations rather than the full network. Since you'll explicitly be loading your best weights, you do not want to precompute.