Hi there,

I have a question as to the combination of convolutional layers and dropout.

In FastAI’s ConvLayer construct, we don’t get any Dropout layers, instead we get this:

> ConvLayer(3, 64)

ConvLayer(

(0): Conv2d(3, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(2): ReLU()

)

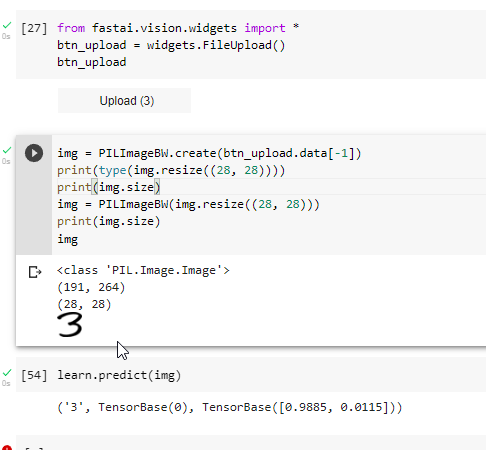

So a convolution, followed by batchnorm and relu. But in lecture 8 Jeremy discusses dropout in the context of Convolutions.

I did some searching on the web and the answer I got from ChatGPT summarized it pretty well in my opinion:

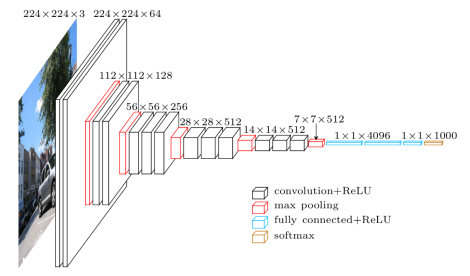

Convolutional blocks in deep learning models often consist of a sequence of convolutional layers, batch normalization, and activation functions. Dropout layers are also commonly used in deep learning models, but they are typically added after the convolutional blocks, rather than within the blocks.

The reason for this is that dropout is a regularization technique that helps to prevent overfitting by randomly dropping out some of the neurons during training. This can be particularly effective in fully connected layers, which can have a large number of parameters that may be prone to overfitting. However, convolutional layers typically have fewer parameters than fully connected layers, and the weight sharing in convolutional layers can also help to prevent overfitting.

Moreover, the use of batch normalization in convolutional blocks can also act as a regularizer by reducing internal covariate shift and improving the generalization of the network. Batch normalization reduces the effects of small changes in input distributions on the outputs of the layer, thus reducing the risk of overfitting.

Therefore, while dropout layers can be effective for regularization in deep learning models, they may not be necessary or as effective in convolutional blocks due to the properties of convolutional layers and the use of batch normalization. Nonetheless, adding a small amount of dropout after the convolutional blocks may still provide additional regularization benefits in some cases.

And another argument against dropout in convlayers: as far as I understand Resnet’s is also not incorporating dropout layers.

Are there other opinions on this? Or perhaps resources that discuss the usage of dropout for conv nets?