This is the topic for any non-beginner discussion around lesson 7. It won’t be actively monitored by Jeremy or me tonight, but we will answer outstanding questions here tomorrow (if needed).

This is only loosely related to regularization, but I wonder if anybody has ever thought about implementing weight pruning procedures in fastai. Especially if you use L1 regularization, you can end up with quite a lot of near-zero weights.
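For context, a minimal PyTorch sketch of the L1 side: a penalty added to the loss, plus a helper that measures how many weights end up near zero. Both helper names and the `tol` threshold are hypothetical choices, and skipping biases is a common but optional convention.

```python
import torch
import torch.nn as nn


def l1_penalty(model: nn.Module) -> torch.Tensor:
    # Sum of |w| over all weight tensors (biases are skipped by choice)
    return sum(p.abs().sum() for n, p in model.named_parameters() if n.endswith("weight"))


def near_zero_fraction(model: nn.Module, tol: float = 1e-3) -> float:
    # Fraction of weight entries whose magnitude is below the (hypothetical) threshold tol
    w = torch.cat([p.detach().flatten() for n, p in model.named_parameters()
                   if n.endswith("weight")])
    return (w.abs() < tol).float().mean().item()


# Inside a training loop (lam is a hypothetical regularization strength):
# loss = loss_fn(model(xb), yb) + lam * l1_penalty(model)
```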

Existing options (the TF2 optimization library, or the PyTorch equivalent) stop short of the real goal: they let you perform pruning, but the pruned parameters are just fixed to zero, not actually removed from the model. So pruning this way gives you no speed-up (the GPU is just multiplying stuff by zero), except that you may be able to cast some of the weights to integers, which is tricky.
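To make the "fixed to zero, not removed" point concrete: PyTorch's `torch.nn.utils.prune` works by masking, so after pruning (and even after making the mask permanent with `prune.remove`) the layer keeps its original dense shape and the matrix multiply costs the same. `prune.ln_structured` likewise only zeroes whole slices through the mask. A small sketch:

```python
import torch
import torch.nn as nn
import torch.nn.utils.prune as prune

layer = nn.Linear(10, 10)
# Zero the 50% of weight entries with the smallest absolute value (via a mask)
prune.l1_unstructured(layer, name="weight", amount=0.5)
# Bake the mask into .weight and drop the weight_orig / weight_mask bookkeeping
prune.remove(layer, "weight")

print(layer.weight.shape)              # torch.Size([10, 10]): still a dense 10x10 matrix
print(int((layer.weight == 0).sum()))  # 50: half the entries are zero, same compute cost
```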

Instead, if one prunes intelligently (for example, by removing entire kernels in a CNN), the model could be greatly simplified by actually removing computation, leaving only (or nearly only) the “winning lottery ticket”.

Is any of this being explored for fastai v2?

Yes this is very interesting and I would love to see some related work in fastai v2. It shouldn’t be too hard, since PyTorch already has some functionality.

I am not sure this is necessarily true, though. Often, pruning results in a sparse network, which GPUs struggle with, so compute time and resources may even be higher. I think this is still an active field of research.

Yeah, the resource you link is exactly what I was referring to.

If you prune intelligently, you CAN remove computation. It’s true that setting weights to zero here and there results in sparse operations that are very difficult to optimize. However, in a CNN, for example, if you remove an entire filter (or even an entire kernel) in a layer deep in the network, together with the corresponding feature map and all forward connections that depend on it, then this is a net gain: the GPU no longer needs to compute those convolutions, nor any of the downstream operations that depend on that feature map.
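A minimal sketch of that structured case, assuming two consecutive `nn.Conv2d` layers with only an elementwise activation between them: the filters of the first conv with the smallest L1 norm are removed together with the matching input channels of the second conv, so both layers physically shrink. `prune_conv_pair` is a hypothetical helper, and L1-norm ranking is just one simple criterion; real networks with BatchNorm, residual connections, or concatenations need the same slicing applied everywhere the feature map is used.

```python
import torch
import torch.nn as nn


def prune_conv_pair(conv1: nn.Conv2d, conv2: nn.Conv2d, keep: int):
    """Drop all but `keep` output filters of conv1 (ranked by L1 norm) and the
    matching input channels of conv2. Assumes conv2 consumes conv1's output
    directly (optionally through an elementwise activation), groups == 1, and
    no BatchNorm in between (a BatchNorm would need the same slicing)."""
    assert conv1.groups == 1 and conv2.groups == 1
    # L1 norm of each filter; weight shape is (out_channels, in_channels, kh, kw)
    norms = conv1.weight.detach().abs().sum(dim=(1, 2, 3))
    idx = torch.argsort(norms, descending=True)[:keep].sort().values  # kept filters, in order

    new1 = nn.Conv2d(conv1.in_channels, keep, conv1.kernel_size, stride=conv1.stride,
                     padding=conv1.padding, dilation=conv1.dilation,
                     bias=conv1.bias is not None)
    new2 = nn.Conv2d(keep, conv2.out_channels, conv2.kernel_size, stride=conv2.stride,
                     padding=conv2.padding, dilation=conv2.dilation,
                     bias=conv2.bias is not None)
    with torch.no_grad():
        new1.weight.copy_(conv1.weight[idx])     # keep only the selected filters
        if conv1.bias is not None:
            new1.bias.copy_(conv1.bias[idx])
        new2.weight.copy_(conv2.weight[:, idx])  # drop inputs fed by removed feature maps
        if conv2.bias is not None:
            new2.bias.copy_(conv2.bias)
    return new1, new2, idx


torch.manual_seed(0)
c1, c2 = nn.Conv2d(3, 8, 3, padding=1), nn.Conv2d(8, 4, 3, padding=1)
n1, n2, kept = prune_conv_pair(c1, c2, keep=5)
x = torch.randn(2, 3, 16, 16)
print(c2(torch.relu(c1(x))).shape, n2(torch.relu(n1(x))).shape)
# torch.Size([2, 4, 16, 16]) torch.Size([2, 4, 16, 16])
```

Same output shape, but both pruned convolutions do 5/8 of the original multiply-adds. One way to check correctness: zeroing the dropped filters (weights and biases) in the original pair should give the same output as the pruned pair, since a ReLU of zero contributes nothing to the next conv.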

Ah yes you are right! I didn’t properly read your post I guess. In that case, yes there would be speedups. I would love to see what is possible in that realm.

I have also read that there are some potential hardware developments for dealing with sparse networks, but those may take a few years to come to fruition.

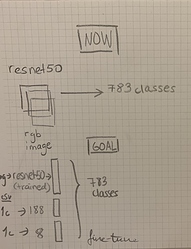

I am trying to build a classifier that combines image data and tabular data. So far, I have trained a resnet50 on RGB images to predict one of 783 classes.

In addition to the image dataset, I also have some tabular data which I would like to use. Namely, the country and zones (~continent) in which the images were taken. There are 188 countries and 8 zones. How could I add this information to the fully connected layer of resnet50 and fine-tune the model?
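One common way to feed country and zone into a network is as categorical embeddings, which is what fastai's tabular models do. As far as I know, fastai's default embedding-width heuristic (`emb_sz_rule`) is `min(600, round(1.6 * n_cat**0.56))`; the function below re-implements that formula under that assumption, so treat `emb_size` as a hypothetical stand-in if your version differs. (fastai also counts an extra `#na#` category, which does not change these two results.)

```python
def emb_size(n_cat: int) -> int:
    """Embedding width heuristic, assumed to match fastai's emb_sz_rule."""
    return min(600, round(1.6 * n_cat ** 0.56))


for name, n in [("country", 188), ("zone", 8)]:
    print(name, n, "->", emb_size(n))
# country 188 -> 30
# zone 8 -> 5
```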

I can picture the process in my head, but I struggle to code it in fastai2. What should my next steps be?

Now that we have covered image and tabular data, I believe I should have all the ingredients to achieve it.

- Create a resnet backbone with `create_body` → `conv_nn`
- Create a simple NN for your tabular data with `TabularModel` → `tab_nn`
- Create a head with `create_head` that takes as input (number of out features of your `conv_nn`) + (number of out features of your `tab_nn`) → `head`
  - You can get the number of features of `conv_nn` with `num_features_model(conv_nn)`
  - Since the image features are pooled before being concatenated (see `forward` below), the head should not pool again; recent fastai versions accept `pool=False` in `create_head`
- Create a `Module` that receives both images and tabular data in its `forward` method:

  ```python
  import torch

  def forward(self, imgs, tab):
      # conv_nn returns a (bs, nf, h, w) feature map: average-pool it to (bs, nf)
      x1 = self.conv_nn(imgs).mean(dim=(2, 3))
      x2 = self.tab_nn(tab)           # (bs, n_tab_features)
      # concatenate along the feature dimension (dim=1), not the batch dimension
      x = torch.cat((x1, x2), dim=1)
      return self.head(x)
  ```

- Create a `DataLoader` that returns `(Image, TabData, Target)`

  This is the hardest step, since fastai image dataloaders and tabular dataloaders work a bit differently. It would be great to have something like `DataBlock((ImageBlock, <TabularBlock>, CategoryBlock))`, but I’m not really sure what you would put in `<TabularBlock>`. I suggest you start with the low-level `Datasets` API.
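For anyone who wants the same architecture without depending on specific fastai helpers, here is a minimal plain-PyTorch sketch, assuming country and zone arrive as integer codes in a `(bs, 2)` tensor. `ImageTabularModel`, its argument names, and the embedding/hidden sizes are hypothetical choices for illustration; `body` would be something like a pretrained resnet50 with its pooling and final `fc` layer removed (torchvision's resnet50 body outputs 2048 channels).

```python
import torch
import torch.nn as nn


class ImageTabularModel(nn.Module):
    """Hypothetical two-branch classifier: pooled CNN features + country/zone embeddings."""

    def __init__(self, body: nn.Module, n_img_feats: int, n_countries: int = 188,
                 n_zones: int = 8, n_classes: int = 783, emb_country: int = 30,
                 emb_zone: int = 5, hidden: int = 512, p: float = 0.5):
        super().__init__()
        self.body = body                     # CNN without its own pooling / fc layer
        self.pool = nn.AdaptiveAvgPool2d(1)  # (bs, nf, h, w) -> (bs, nf, 1, 1)
        self.emb_country = nn.Embedding(n_countries, emb_country)
        self.emb_zone = nn.Embedding(n_zones, emb_zone)
        self.head = nn.Sequential(
            nn.Linear(n_img_feats + emb_country + emb_zone, hidden),
            nn.ReLU(inplace=True),
            nn.Dropout(p),
            nn.Linear(hidden, n_classes),
        )

    def forward(self, imgs: torch.Tensor, tab: torch.Tensor) -> torch.Tensor:
        # tab holds integer codes: column 0 = country, column 1 = zone
        x_img = self.pool(self.body(imgs)).flatten(1)         # (bs, n_img_feats)
        x_tab = torch.cat([self.emb_country(tab[:, 0]),
                           self.emb_zone(tab[:, 1])], dim=1)  # (bs, emb_country + emb_zone)
        x = torch.cat([x_img, x_tab], dim=1)                  # join features, not batch rows
        return self.head(x)                                   # (bs, n_classes) logits


# Usage with a tiny stand-in body (swap in a real pretrained CNN body in practice)
body = nn.Sequential(nn.Conv2d(3, 16, 3, padding=1), nn.ReLU())
model = ImageTabularModel(body, n_img_feats=16)
imgs = torch.randn(4, 3, 64, 64)
tab = torch.stack([torch.randint(0, 188, (4,)), torch.randint(0, 8, (4,))], dim=1)
logits = model(imgs, tab)
print(logits.shape)  # torch.Size([4, 783])
```

For fine-tuning, one common approach is to freeze `body` at first (e.g. `model.body.requires_grad_(False)`), train the embeddings and head, then unfreeze and train everything with a lower learning rate.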

That is the gist of it. I hope that at least the explanation of the networks is clear; the dataloaders are very tricky indeed.
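As a starting point for the data side, a plain PyTorch `Dataset` that yields `(image, tab, target)` triples might look like the sketch below. `ImageTabDataset` and the optional `load_image` hook (e.g. opening a file path and converting it to a normalized tensor) are hypothetical; here the images are assumed to already be tensors. As far as I know, fastai's `DataLoaders` can wrap plain PyTorch `DataLoader`s, and `Learner` passes all but the last element of such a batch to the model, which matches `forward(imgs, tab)`; worth verifying against your fastai version.

```python
import torch
from torch.utils.data import DataLoader, Dataset


class ImageTabDataset(Dataset):
    """Hypothetical dataset yielding (image, tab, target) for a two-input model."""

    def __init__(self, images, countries, zones, targets, load_image=None):
        assert len(images) == len(countries) == len(zones) == len(targets)
        self.images = images
        # Integer codes stacked into an (N, 2) LongTensor: [country, zone]
        self.tab = torch.stack([torch.as_tensor(countries, dtype=torch.long),
                                torch.as_tensor(zones, dtype=torch.long)], dim=1)
        self.targets = torch.as_tensor(targets, dtype=torch.long)
        # Optional callable turning an item of `images` (e.g. a path) into a tensor
        self.load_image = load_image

    def __len__(self):
        return len(self.targets)

    def __getitem__(self, i):
        img = self.images[i]
        if self.load_image is not None:
            img = self.load_image(img)
        return img, self.tab[i], self.targets[i]


# Usage with random tensors standing in for real images
imgs = torch.randn(10, 3, 32, 32)
ds = ImageTabDataset(imgs, countries=list(range(10)), zones=[i % 8 for i in range(10)],
                     targets=[i % 3 for i in range(10)])
dl = DataLoader(ds, batch_size=4, shuffle=False)
x_img, x_tab, y = next(iter(dl))
print(x_img.shape, x_tab.shape, y.tolist())
# torch.Size([4, 3, 32, 32]) torch.Size([4, 2]) [0, 1, 2, 0]
```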

THANKS A LOT Lucas! This is such an awesome reply. I will give it a try over the weekend!


After watching lesson 7, I decided to watch fast.ai's ML for coders, where Jeremy recommends using `%prun` to see which lines of code take the longest to run. He also uses `x = np.array(trn, dtype=np.float32)` to speed up trying different versions of the random forest. I tried `%prun` with multiple `n_jobs`, but it didn’t give a peek inside the individual processes, so I tried it with 1 thread. With 1 thread, `%prun` shows that `{method 'build' of 'sklearn.tree._tree.DepthFirstTreeBuilder' objects}` takes the longest to run. I checked fastai’s `Tabular` class for any mention of converting `x` to floats, and I’m not sure whether it does this automatically or whether it is no longer relevant.
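`%prun` is IPython's wrapper around Python's built-in `cProfile`, so the same report can be produced from a plain script. The sketch below (the helper name `profile_call` is hypothetical) profiles a single call and shows the memory effect of the `float32` conversion. My understanding is that scikit-learn's tree builders work in `float32` internally, so converting once up front avoids a conversion on every call to `fit`; that is an assumption worth checking against your scikit-learn version.

```python
import cProfile
import io
import pstats

import numpy as np


def profile_call(fn, *args, top=10, **kwargs):
    """Run fn(*args, **kwargs) under cProfile; return (result, report sorted by cumulative time)."""
    prof = cProfile.Profile()
    result = prof.runcall(fn, *args, **kwargs)
    buf = io.StringIO()
    pstats.Stats(prof, stream=buf).sort_stats("cumulative").print_stats(top)
    return result, buf.getvalue()


trn = np.random.rand(100_000, 20)  # float64 by default
x, report = profile_call(np.array, trn, dtype=np.float32)
print(trn.nbytes, x.nbytes)        # 16000000 8000000
print(report)

# With scikit-learn installed, a single-threaded fit can be profiled the same way, e.g.:
# from sklearn.ensemble import RandomForestRegressor
# m = RandomForestRegressor(n_estimators=10, n_jobs=1)
# _, report = profile_call(m.fit, x, y)
```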