You can, but you will need to do some work on your pretrained model.

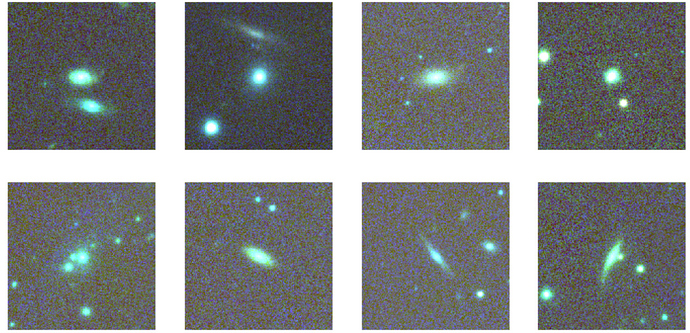

I believe there are some satellite/astronomy images that have channels for UV and IR.

Not with fastai. The data augmentation will be properly applied to your coordinates.

Yes, just have your get_y return an array of points and not just one point.
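
A minimal sketch of what such a `get_y` could look like. The annotation store `landmarks` and the function name `get_points` are hypothetical; the only thing taken from the answer above is that the function returns an array of points, one `(x, y)` row per point, instead of a single point.

```python
import numpy as np

# Hypothetical annotations: file name -> list of (x, y) pixel coordinates.
landmarks = {
    "face_001.jpg": [(120.0, 80.0), (200.0, 82.0), (160.0, 140.0)],
}

def get_points(fname):
    """Return all labelled points for one image as an (n_points, 2) float array."""
    pts = np.asarray(landmarks[str(fname)], dtype=np.float32)
    assert pts.ndim == 2 and pts.shape[1] == 2, "expected one (x, y) pair per row"
    return pts

pts = get_points("face_001.jpg")
print(pts.shape)  # (3, 2)
```

Passed as `get_y=get_points` to a `DataBlock` like the one quoted in this thread, each image would carry several target points instead of one, so the network would need two outputs per point.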

I guess if Jeremy clarifies this, it won't create any confusion for people following the MOOC or watching the video later? Or maybe it can be added somewhere else.

The notebooks are already updated; that's all that matters.

This is literally what I’m working on as we speak!

Lots of amazing work done by others in hyperspectral imaging, e.g., https://gist.github.com/jaeeolma/0846e03c0c3b613212f8ca5824ae47e0 (made by @mayrajeo)!

EDIT to include Sylvain’s prophecy:

So fastai supports using, say, ImageNet weights on input images that have more than 3 channels? How does that work? Does it use the pretrained kernel weights for those 3 channels and initialize the rest randomly?
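
The scheme described in this question can be sketched on a bare weight array. This only illustrates the idea and is not a statement of what fastai does internally; the function name `expand_first_conv` and the 0.01 standard deviation for the new kernels are assumptions.

```python
import numpy as np

def expand_first_conv(weights, n_in):
    """Adapt conv weights of shape (out, 3, kh, kw) to n_in input channels.

    The first 3 input channels keep their pretrained kernels; any extra
    channels get small random kernels (one possible choice, assumed here).
    """
    out_ch, in_ch, kh, kw = weights.shape
    if n_in <= in_ch:
        return weights[:, :n_in].copy()
    rng = np.random.default_rng(0)
    extra = rng.normal(0.0, 0.01, size=(out_ch, n_in - in_ch, kh, kw))
    return np.concatenate([weights, extra.astype(weights.dtype)], axis=1)

pretrained = np.ones((64, 3, 7, 7), dtype=np.float32)  # stand-in for real weights
adapted = expand_first_conv(pretrained, n_in=4)
print(adapted.shape)  # (64, 4, 7, 7)
```

Other initializations for the extra channel are possible, for example zeros or the mean of the three pretrained kernels; which works best likely depends on the data.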

If the training images are normalised (mean, std dev, etc.), then at prediction time in production would we also need to apply the same normalisation to the image we want a prediction for?

Is there a tutorial showing how to use pretrained models on 4-channel images? Also, how can you add a channel to a normal image?

Yes, that is why learn.export keeps track of the transforms you applied during training to use them again at inference.
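
To make "the same normalization" concrete, here is a sketch in plain NumPy, independent of fastai: the statistics fixed at training time are reused unchanged on the new image. With fastai the exported learner handles this, as the answer above says; the `normalize` helper is illustrative only.

```python
import numpy as np

# ImageNet per-channel statistics for RGB images scaled to [0, 1].
MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)

def normalize(img, mean=MEAN, std=STD):
    """Normalize an (H, W, 3) float image with the stats used at training time."""
    return (img - mean) / std

# A stand-in "production" image: every pixel equals the training mean.
img = np.broadcast_to(MEAN, (240, 320, 3)).copy()
out = normalize(img)
print(out.shape)  # (240, 320, 3)
```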

Do PointBlock and show_results support point regression for multiple points, for example if we want to detect more facial features?

    biwi = DataBlock(
        blocks=(ImageBlock, PointBlock),
        get_items=get_image_files,
        get_y=get_ctr,
        splitter=FuncSplitter(lambda o: o.parent.name=='13'),
        batch_tfms=[*aug_transforms(size=(240,320)),
                    Normalize.from_stats(*imagenet_stats)]
    )

With size=(240,320) we are using rectangular images here. How does this fit with a model pretrained on square images (ImageNet)?

Yes, as said before.

One-cycle fit, I think, uses PyTorch's one-cycle scheduler, with momentum also being varied over the cycle.

Is it always recommended to vary the momentum over the cycle as well, or can it sometimes be kept constant?

Can this technique of using an additional channel for images be used to train against video data?

What I mean is, can I still use an ImageNet-pretrained model and adapt it to video data?

Nothing in the model forces images to be squares (we will look at the actual architecture later). So you can use it with any size / aspect ratio.
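
One reason a convolutional network can be indifferent to input size is a global pooling step before the final layers: whatever the height and width of the feature map, the pooled vector has the same length. The sketch below assumes the model pools this way (the thread defers the actual architecture to a later lesson), and the feature-map shapes are illustrative.

```python
import numpy as np

def global_avg_pool(feats):
    """Average a (channels, H, W) feature map over H and W -> (channels,)."""
    return feats.mean(axis=(1, 2))

square = np.ones((512, 7, 7), dtype=np.float32)   # e.g. from a square input
rect = np.ones((512, 8, 10), dtype=np.float32)    # e.g. from a rectangular input

print(global_avg_pool(square).shape)  # (512,)
print(global_avg_pool(rect).shape)    # (512,)
```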

Looks like the head pose dataset is not downloadable anymore? Apparently it moved here, though: https://icu.ee.ethz.ch/research/datsets.html

No, it uses fastai schedulers. PyTorch only very recently added onecycle training.
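
For readers wondering what such a schedule looks like, here is a small standalone sketch of a one-cycle policy with cosine annealing, in which momentum moves opposite to the learning rate. It is not fastai's implementation; the default values (`div`, `div_final`, `pct_start`, `moms`) are assumptions modeled on what `fit_one_cycle` is commonly documented to use, so check them against the library.

```python
import math

def cos_anneal(start, end, pct):
    """Cosine interpolation from start (pct=0) to end (pct=1)."""
    return end + (start - end) / 2 * (1 + math.cos(math.pi * pct))

def one_cycle(pct, lr_max=1e-3, div=25.0, div_final=1e5,
              pct_start=0.25, moms=(0.95, 0.85, 0.95)):
    """Return (lr, momentum) at training progress pct in [0, 1]."""
    if pct < pct_start:                       # warm-up: lr rises, momentum falls
        p = pct / pct_start
        return (cos_anneal(lr_max / div, lr_max, p),
                cos_anneal(moms[0], moms[1], p))
    p = (pct - pct_start) / (1 - pct_start)   # anneal: lr falls, momentum rises
    return (cos_anneal(lr_max, lr_max / div_final, p),
            cos_anneal(moms[1], moms[2], p))

for pct in (0.0, 0.25, 1.0):
    lr, mom = one_cycle(pct)
    print(f"pct={pct:.2f} lr={lr:.2e} mom={mom:.3f}")
```

In this sketch, passing three equal values such as `moms=(0.9, 0.9, 0.9)` keeps the momentum constant while the learning rate still follows the cycle.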

untar_data uses the fastai URLs which point to a fastai server. So you should still have it there.